Links for 2026-05-12

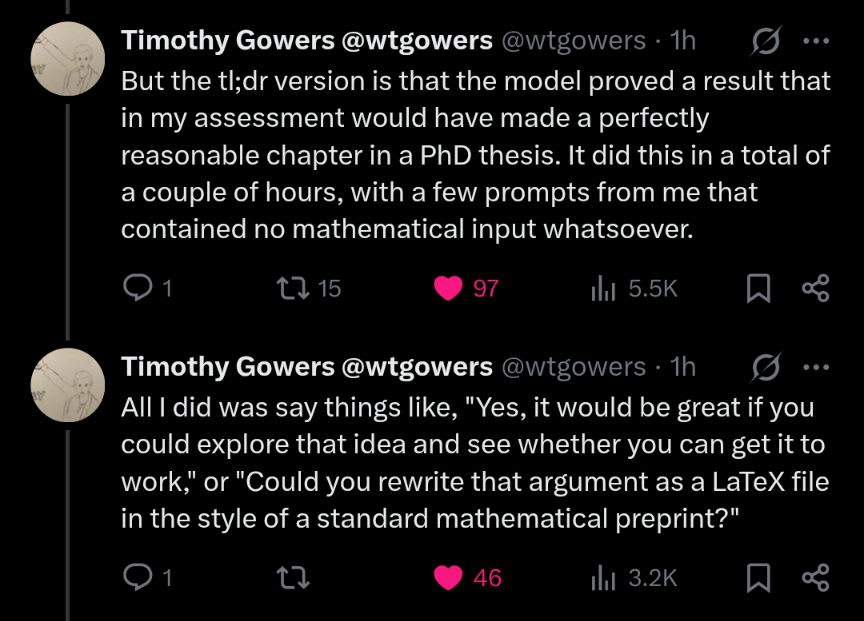

Fields Medalist Timothy Gowers tries GPT-5.5 Pro

...if AI mathematics continues to progress at anything like its current rate -- which is what I expect to happen -- then we will face a crisis very soon...

Read his full report: https://gowers.wordpress.com/2026/05/08/a-recent-experience-with-chatgpt-5-5-pro/

With Fields Medalists now saying that the latest AI systems are useful for research-level math, I want to remind everybody that this was predicted by Scott Alexander’s famous 2019 post about GPT-2.[1]

GPT-2 could not count past five without making mistakes. But the very fact that it could count to five was astonishing, and Alexander called GPT-2 a step toward general intelligence.

I invite you to think about AI systems today in a similar way. Don’t let their shortcomings make you dismissive. Be amazed by what they can already do and extrapolate from there.

“There are two types of people in the world these days. Those who believe in straight lines on log graphs, and those who don’t.” -- tautologer

P.S. Remember that we are far past the pure LLM era. Modern AI systems use LLMs as intuition modules, pruning the search space. They are just one part of orchestrated AI agents with memory, grounded in real-world feedback loops by verifiers and equipped with search and evolutionary algorithms.[2][3] And these systems have barely reached the MS-DOS level of what is possible.

[1] https://slatestarcodex.com/2019/02/19/gpt-2-as-step-toward-general-intelligence/

[2] https://arxiv.org/abs/2605.06651

[3] https://deepmind.google/blog/alphaevolve-a-gemini-powered-coding-agent-for-designing-advanced-algorithms/

AI

Claude was blackmailing engineers up to 96% of the time in safety tests. Anthropic has now traced why: the behaviour came from internet text that portrays AI as evil and driven by self-preservation. Their post-training pipeline wasn’t introducing the problem, but it also wasn’t removing it. https://www.anthropic.com/research/teaching-claude-why

Evidence that gradient descent discovered a crisp, algorithm-like solution, not just a vague statistical approximation: Neural Networks learn Bloom Filters https://www.lesswrong.com/posts/buxBdp8NtHGgBwabv/neural-networks-learn-bloom-filters

Neural Computer: A New Machine Form Is Emerging https://metauto.ai/neuralcomputer/

Recursive Agent Optimization (RAO) https://apga.github.io/RAO/

LLMs Improving LLMs: Agentic Discovery for Test-Time Scaling https://arxiv.org/abs/2605.08083

When Does Automating AI Research Produce Explosive Growth? Feedback Loops in Innovation Networks https://www.nber.org/papers/w35155

Interaction Models: A Scalable Approach to Human-AI Collaboration https://thinkingmachines.ai/blog/interaction-models/

DynamicVLA: A Vision-Language-Action Model for Dynamic Object Manipulation https://www.infinitescript.com/project/dynamic-vla/

AI-guided discovery of atypical protein assemblies https://www.tsl.ac.uk/publications/167424

Perceptron Mk1: frontier video and embodied reasoning. https://www.perceptron.inc/blog/introducing-perceptron-mk1

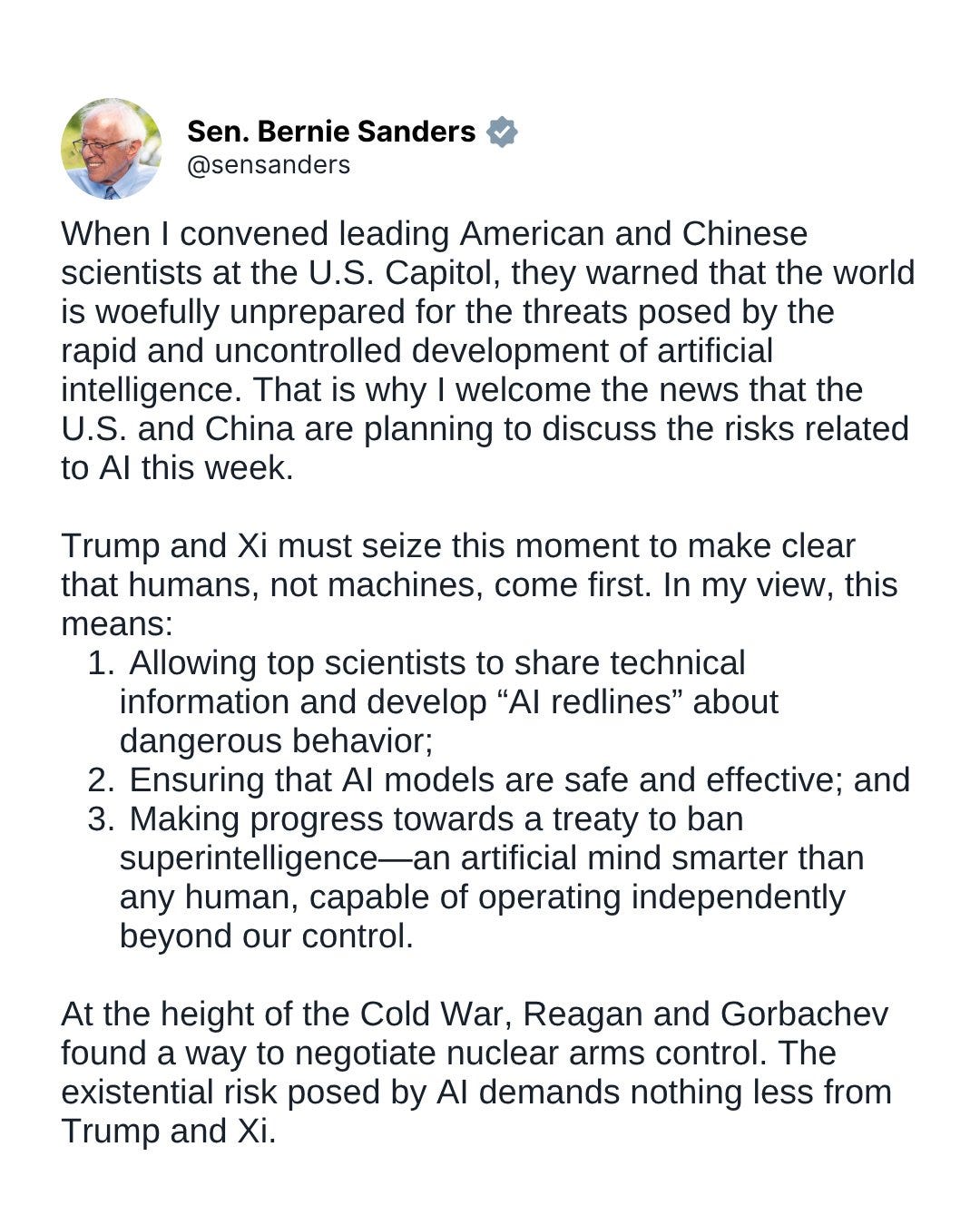

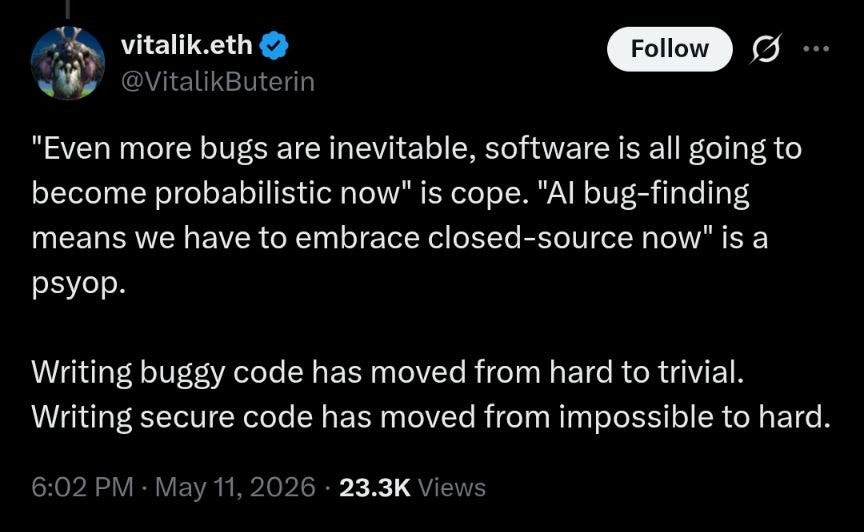

Germany’s agency for information security on GPT-5.5 and Mythos

P.S. https://en.wikipedia.org/wiki/Crypto_AG

See also:

Mythos found one (1) vulnerability in curl - an open-source software product with an installed base of 20 billion instances: https://daniel.haxx.se/blog/2026/05/11/mythos-finds-a-curl-vulnerability/

Language Models Can Autonomously Hack and Self-Replicate https://www.lesswrong.com/posts/JuoDNYDG8CgiQaCcz/linkpost-language-models-can-autonomously-hack-and-self

IQ

IQ With Conscience https://www.betonit.ai/p/iq_with_consciehtml

Interview with Scott Wu, the co-founder of Cognition AI, one of the fastest-growing companies in history. He’s also the greatest competitive programmer the US has ever produced. You may have seen him doing impossible card tricks and mental math. https://colossus.com/article/scott-wu-tapes-cognition/

Interview with Sven Magnus Øen Carlsen: “At the age of 15, Nunn started studying mathematics in Oxford; he was the youngest student in the last 500 years, and at 23 he did a PhD in algebraic topology. He has so incredibly much in his head. Simply too much. His enormous powers of understanding and his constant thirst for knowledge distracted him from chess.” https://infoproc.blogspot.com/2013/11/sven-magnus-en-carlsen.html

Chinese-born AI researchers are now some of Silicon Valley’s most valuable people: one engineer can make $500,000 a year, another $50 million, and rumours circulate of $200 million poaching packages. https://restofworld.org/2026/chinese-ai-researchers-silicon-valley/

Math

Thread of deep reasons behind simple facts: https://x.com/anderssandberg/status/2053757849918939364

The fundamental theorem of calculus, Green’s theorem, divergence theorem, classical Stokes, and Cauchy’s integral theorem are all “the same theorem”, ∫_∂M ω = ∫_M dω. The deep reason is duality between boundary (∂) and exterior derivative (d), with ∂² = 0 and d² = 0.

This generates de Rham cohomology, Maxwell’s equations (dF = 0, d⋆F = J), the Bianchi identities, and is why “closed but not exact” shows up as a framework for thinking about topological obstructions.
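To make the “same theorem” claim concrete, here is a short worked sketch of two of the listed special cases. The choices of M and ω are the standard textbook ones and are not spelled out in the thread itself.

```latex
% Fundamental theorem of calculus:
% M = [a,b], \omega = f (a 0-form), \partial M = \{b\} - \{a\}
\int_{[a,b]} df = \int_a^b f'(x)\,dx = f(b) - f(a) = \int_{\partial [a,b]} f

% Green's theorem:
% M = D \subset \mathbb{R}^2, \omega = P\,dx + Q\,dy
d\omega = \left( \frac{\partial Q}{\partial x} - \frac{\partial P}{\partial y} \right) dx \wedge dy
\qquad\Longrightarrow\qquad
\oint_{\partial D} P\,dx + Q\,dy
  = \iint_D \left( \frac{\partial Q}{\partial x} - \frac{\partial P}{\partial y} \right) dx\,dy
```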

The Central Limit Theorem is a renormalization group fixed point. The Gaussian is the attractive fixed point of self-convolution, and the basin of attraction is exactly the finite-variance distributions (Lévy stable laws are other fixed points). This is the same universality that appears in critical phenomena.
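A minimal numerical sketch of the fixed-point picture, under assumptions of my own: the starting distribution is an arbitrary skewed pmf on {0, 1, 2}, and excess kurtosis stands in as a scale-invariant measure of distance from the Gaussian (so the rescaling step of the renormalization map is implicit). The function names are hypothetical.

```python
def convolve(p, q):
    """pmf of X + Y for independent X ~ p, Y ~ q supported on 0, 1, 2, ..."""
    out = [0.0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return out


def excess_kurtosis(p):
    """Fourth standardized moment minus 3; zero for a Gaussian."""
    mean = sum(k * w for k, w in enumerate(p))
    var = sum((k - mean) ** 2 * w for k, w in enumerate(p))
    m4 = sum((k - mean) ** 4 * w for k, w in enumerate(p))
    return m4 / var ** 2 - 3.0


def rg_flow(p, steps):
    """Self-convolve p repeatedly; record excess kurtosis after each step."""
    history = [excess_kurtosis(p)]
    for _ in range(steps):
        p = convolve(p, p)  # one "coarse-graining" step: X -> X + X'
        history.append(excess_kurtosis(p))
    return history


start = [0.7, 0.2, 0.1]  # arbitrary skewed starting pmf (an assumption)
flow = rg_flow(start, 6)
for step, k in enumerate(flow):
    print(step, k)
```

Because cumulants add under convolution, the excess kurtosis halves at every step, so the flow contracts geometrically toward the Gaussian value of zero.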

Cantor’s diagonal argument, Russell’s paradox, Gödel’s incompleteness, Tarski’s undefinability of truth, and the halting problem all have the same “diagonal” shape, thanks to Lawvere’s fixed point theorem.
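The shared “diagonal” shape can be seen in a toy finite version of Cantor’s argument. This is only an illustrative sketch: the table below is made up, and `diagonal_escape` is a hypothetical helper name.

```python
def diagonal_escape(rows):
    """Return a binary row that differs from rows[i] at position i, for every i.

    Requires each row to have at least len(rows) entries.
    """
    return [1 - rows[i][i] for i in range(len(rows))]


# A made-up "enumeration" of binary sequences, one per row.
table = [
    [0, 1, 0, 1],
    [1, 1, 0, 0],
    [0, 0, 0, 1],
    [1, 0, 1, 1],
]

escaped = diagonal_escape(table)
print(escaped)           # flips the diagonal entries 0, 1, 0, 1
print(escaped in table)  # the new row cannot appear in the table
```

Whatever the table contains, the flipped diagonal disagrees with row i in column i, so no listing can be complete. Lawvere’s theorem abstracts this: the flip has no fixed point, so no enumeration can be onto.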

Noether’s theorem is of course behind all the normal conservation laws we learn about in mechanics. Why energy conservation? Time translation invariance.

Deriving the Hamiltonian from the Lagrangian, the cascade of thermodynamic potentials, convex-programming duality: these are all Legendre transforms, which are the tropical or classical-limit version of the Fourier-Laplace transform. The semiring (ℝ≥0,+,×) limits to the tropical semiring (ℝ ∪ {−∞},max,+).
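The tropical limit can be checked numerically. The sketch below assumes f(x) = x²/2 on a finite grid (my choice, not from the thread) and compares the Legendre transform, a max over the grid, with a “soft” version h·log Σ exp((px − f(x))/h), which is a discretized Laplace-type sum. As h → 0 the soft version should approach the max. All names are hypothetical.

```python
import math


def soft_max(values, h):
    """h * log(sum(exp(v / h))), computed stably; tends to max(values) as h -> 0+."""
    m = max(values)
    return m + h * math.log(sum(math.exp((v - m) / h) for v in values))


def legendre(f, p, grid):
    """Discrete Legendre transform: max over the grid of p*x - f(x)."""
    return max(p * x - f(x) for x in grid)


def soft_legendre(f, p, grid, h):
    """Same expression with (sum, times) in place of (max, plus) at 'temperature' h."""
    return soft_max([p * x - f(x) for x in grid], h)


def f(x):
    return 0.5 * x * x  # assumed test function; its Legendre transform is p**2 / 2


grid = [i / 100 for i in range(-500, 501)]
p = 1.5
exact = legendre(f, p, grid)
gaps = [soft_legendre(f, p, grid, h) - exact for h in (1.0, 0.1, 0.01, 0.001)]
print(exact, gaps)
```

The gap is always non-negative and shrinks as h decreases, which is the sense in which (+, ×) degenerates to (max, +).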

Ukraine

Minister of Defence Mykhailo Fedorov met with Palantir CEO Alex Karp in Kyiv to expand cooperation in AI and defence technologies. Ukraine says work with Palantir has created a detailed air attack analysis system, deployed AI tools for large intelligence datasets and integrated technology into deep strike planning. Brave1 Dataroom now gives developers real battlefield data to train AI models.

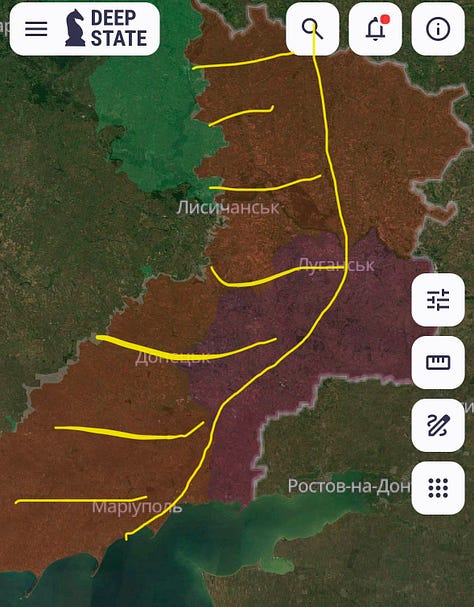

Russia’s most important deep rear logistics route is increasingly coming under fire control.

It’s all about money now. Ukraine can win. The technology is ready. Ballistic missiles are already in development. With enough funding, middle- and deep-strike capabilities could be scaled to Russia’s breaking point.

Armenia

Putin’s coded warning against Armenia choosing the European Union over Russia again goes to show how important it is to support Ukraine. Just imagine what he would be willing to do if he were encouraged by a “success” in Ukraine.

What’s most interesting about the warning is his admission that the chain of events leading to the invasion of Ukraine started with its attempt to join the EU.

We also have corroborating evidence that this has always been the main reason. Several people have confirmed that Viktor Yanukovych was blackmailed into not signing the EU Association Agreement: the threat was that if he dared to sign it, Putin would take large parts of Ukraine.

Putin cannot accept that the former Soviet republics could become more prosperous than Russia.