Links for 2026-05-08

GENE-26.5

GENE-26.5 from Genesis AI can cook in an unsimplified, real-world setting, completing a task with more than 20 subtasks. It can also perform laboratory experiments with millimeter-level precision and complex tool use.

Read more: https://www.genesis.ai/blog/gene-26-5-advancing-robotic-manipulation-to-human-level

Figure taught two robots to make a bed together

Helix-02 running simultaneously on 2 robots, fully onboard, doing a full bedroom reset from pixels-to-actions.

There’s no explicit messaging between these robots; they coordinate their actions purely visually, e.g. via head nods.

1x speed, fully autonomous, no teleop.

More: https://www.figure.ai/news/helix-02-bedroom-tidy

Voice interfaces are going to be a big deal

More: https://openai.com/index/advancing-voice-intelligence-with-new-models-in-the-api/

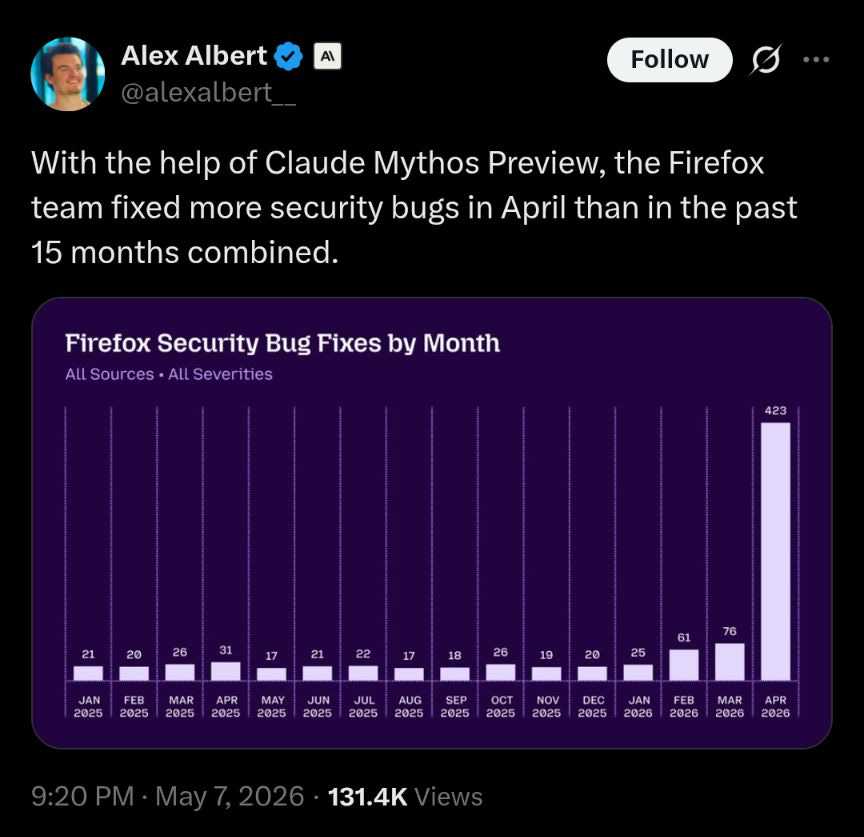

AI

DeepMind achieves 47.9% on FrontierMath Tier 4, up from the previous state-of-the-art score of 39.6% set by GPT-5.5 Pro. Tier 4 problems are harder than typical PhD-qualifying exam or Olympiad problems. The system orchestrates AI agents around the workflow of mathematical research. A project coordinator agent talks to the user, clarifies the research question, breaks it into goals, and delegates them to parallel workstream coordinators. These can in turn call specialized sub-agents for literature review, coding, proof attempts, computational searches, and review. The system uses a shared workspace, internal messaging, version history, and persistent files, so the project keeps its memory across many steps instead of being a transient chat. https://arxiv.org/abs/2605.06651
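To make the described hierarchy concrete, here is a minimal sketch of a coordinator delegating to workstreams that call specialist sub-agents through a shared, versioned workspace. Everything in it is hypothetical: the class and function names (`Workspace`, `run_project`, `run_workstream`, the stub sub-agents) are illustrative inventions, not the paper's code, and the sub-agents return fixed strings where a real system would call a model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Workspace:
    """Hypothetical shared project memory: versioned files plus an internal message log."""
    files: dict[str, list[str]] = field(default_factory=dict)  # path -> all versions
    messages: list[tuple[str, str, str]] = field(default_factory=list)  # (from, to, text)

    def write(self, path: str, content: str) -> None:
        # Append rather than overwrite, so earlier versions stay in the history.
        self.files.setdefault(path, []).append(content)

    def latest(self, path: str) -> str:
        return self.files[path][-1]

    def send(self, sender: str, recipient: str, text: str) -> None:
        self.messages.append((sender, recipient, text))


# Hypothetical specialist sub-agents; a real system would call a model here.
SubAgent = Callable[[str, Workspace], str]


def literature_review(goal: str, ws: Workspace) -> str:
    return f"related work for: {goal}"


def proof_attempt(goal: str, ws: Workspace) -> str:
    return f"draft proof for: {goal}"


def review(goal: str, ws: Workspace) -> str:
    # Reads the current proof draft from the shared workspace, not from a chat transcript.
    draft = ws.latest(f"{goal}/proof")
    return f"review of '{draft}': ok"


# Insertion order matters: the reviewer runs after the proof draft exists.
SPECIALISTS: dict[str, SubAgent] = {
    "literature": literature_review,
    "proof": proof_attempt,
    "review": review,
}


def run_workstream(name: str, goal: str, ws: Workspace) -> str:
    """Workstream coordinator: call each specialist in turn and persist its output."""
    for role, agent in SPECIALISTS.items():
        ws.write(f"{goal}/{role}", agent(goal, ws))
        ws.send(name, "project", f"{role} finished")
    return ws.latest(f"{goal}/review")


def run_project(question: str, ws: Workspace) -> dict[str, str]:
    """Project coordinator: split the question into goals and delegate them."""
    # Hypothetical fixed decomposition; a real coordinator would plan this with a model.
    goals = [f"{question}, case {i}" for i in (1, 2)]
    # Sequential here for clarity; the described system runs workstreams in parallel.
    return {f"ws{i}": run_workstream(f"ws{i}", g, ws) for i, g in enumerate(goals, start=1)}
```

Calling `run_project("Q", Workspace())` leaves six versioned files and six internal messages behind; the point of the design is that that state, not a chat transcript, is what carries memory from step to step.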

Natural Language Autoencoders Produce Unsupervised Explanations of LLM Activations https://www.lesswrong.com/posts/oeYesesaxjzMAktCM/natural-language-autoencoders-produce-unsupervised

Anthropic’s Boris Cherny: Why Coding Is Solved, and What Comes Next https://www.youtube.com/watch?v=SlGRN8jh2RI

“At Anthropic, we can see early evidence that jobs like software engineering are changing radically. We’re watching the internal economy of Anthropic start to shift, new threats emerge from the systems we build, and early signs of AI contributing to speeding up the research and development of AI itself. In order to realize the full benefits of AI progress, we want to share as much of that information as we can.” https://www.anthropic.com/research/anthropic-institute-agenda

Will We See AI with Recursive Self Improvement in 2028? Likely Not. https://hashcollision.substack.com/p/will-we-see-ai-with-recursive-self

The tables have turned on AI sceptics https://www.update.news/p/the-tables-have-turned-on-ai-sceptics

Notes from inside China’s AI labs https://www.interconnects.ai/p/notes-from-inside-chinas-ai-labs

Compressing model capabilities by making their circuits extractable. https://tokenbender.com/posts/honey-i-shrunk-the-circuits/

Robotics’ End Game: Nvidia’s Jim Fan says he is 95% certain that the robotics technology tree will be fully unlocked by 2040. https://www.youtube.com/watch?v=3Y8aq_ofEVs

Is ProgramBench Impossible? https://www.lesswrong.com/posts/3pdyxFi6JS389nptu/is-programbench-impossible

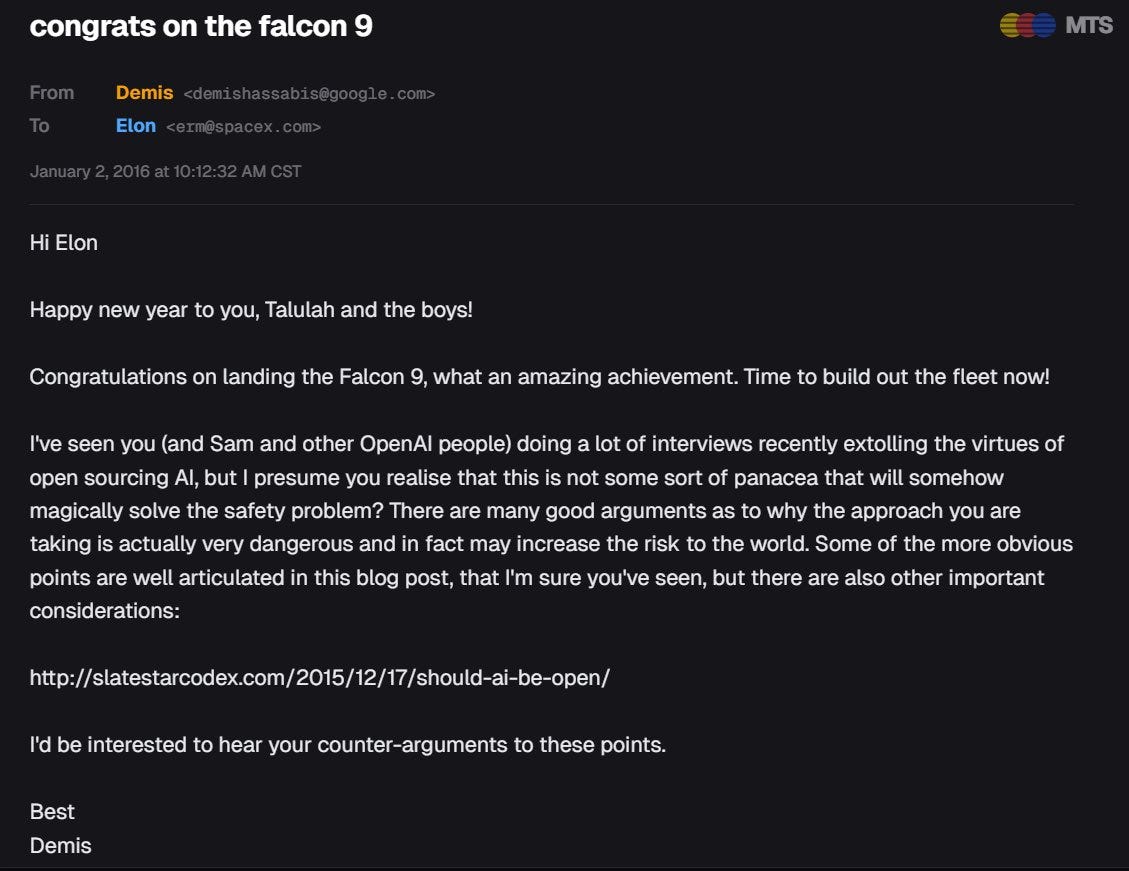

X-risk concerns are not hype

Anyone who has been following AI development and the LessWrong sphere for more than 20 years knows that the people warning that AI might pose an existential risk are not hyping. For everyone else, here is a data point from the OpenAI vs. Musk trial: a private conversation between Google DeepMind co-founder Demis Hassabis and Elon Musk.

Previous anonymous reports (2024):

Identification of the original sender as Demis Hassabis (2026):

The Scott Alexander post linked in the email:

AI Preparedness Is Defense Spending

by Claude Opus 4.7

When we set defense budgets, we don’t price them against the modal year — the year in which no war happens. We price them against a distribution of bad states of the world: invasion, coercion, alliance failure, mobilization delays. The point is not that war is likely every year. The point is that if war arrives, the infrastructure you failed to build in peacetime cannot be summoned overnight. NATO’s 2% target is not an expected-loss calculation. It is an option premium against fat-tailed strategic uncertainty.

AI deserves the same treatment, and arguably more. Unlike defense — which is a pure-loss hedge — AI investment also buys participation in good-but-disruptive worlds. The right reference class is not just defense. It is a blend of defense, industrial policy, general-purpose infrastructure, and strategic weapons.

The framing

The right question is not “what is the modal AI outcome?” It is: what minimum national capacity is worth buying across the probability distribution of plausible AI outcomes, given that late preparation may be impossible?

Formally:

x* = argmax_x [ Σᵢ pᵢ Vᵢ(x) − C(x) ]

where x is annual AI-preparedness spending as a share of GDP, pᵢ is the probability of scenario i, Vᵢ(x) is the national value of being prepared in that scenario, and C(x) is the opportunity cost.

A useful first-pass intuition is to compute the probability-weighted average of conditional optima: x* ≈ Σᵢ pᵢ xᵢ*. But this is only a toy model. The real decision is not an average of scenario-specific answers; it is an optimization over a portfolio of capabilities whose value changes by scenario. In the cases that matter most, the naive average is biased downward, because AI preparedness has thresholds. You cannot recruit alignment researchers in eighteen months once a problem becomes acute. You cannot build a frontier compute base mid-takeoff. You cannot stand up a sovereign model-evaluation pipeline during a crisis. Below some level of compute, talent, secure infrastructure, public-sector competence, and energy supply, a state is not merely “less prepared.” It is unable to act at all. That is the irreversibility premium, and it is what justifies overspending relative to naive expected loss.
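The downward bias of the naive average can be seen in a two-scenario toy version of the objective above. This is a minimal sketch under invented assumptions, not the essay's model: the probabilities (0.8, 0.2), the smooth value curve, the hard 6%-of-GDP threshold, and the linear opportunity cost are all hypothetical numbers chosen only to show the mechanism. It grid-searches each scenario's conditional optimum xᵢ*, averages them, and compares the result with the portfolio optimum x*.

```python
import math

# Hypothetical toy model: x is spending in % of GDP, searched on a 0.01 grid.
GRID = [i / 100 for i in range(0, 1201)]  # candidate x values: 0.00 .. 12.00


def cost(x: float) -> float:
    # Assumption: linear opportunity cost C(x), one unit of value per % of GDP.
    return x


def v_diffusion(x: float) -> float:
    # Smooth diminishing returns: being less prepared degrades gracefully.
    return 10 * (1 - math.exp(-x))


def v_crisis(x: float) -> float:
    # Threshold: below 6% of GDP the capability does not exist at all.
    return 100.0 if x >= 6 else 0.0


SCENARIOS = [(0.8, v_diffusion), (0.2, v_crisis)]  # hypothetical (p_i, V_i) pairs


def argmax(f):
    # First grid point with the highest objective value.
    return max(GRID, key=f)


# Conditional optimum x_i* for each scenario, as if we knew it obtained.
conditional = [argmax(lambda x, v=v: v(x) - cost(x)) for _, v in SCENARIOS]
naive = sum(p * xi for (p, _), xi in zip(SCENARIOS, conditional))

# Portfolio optimum: x* = argmax_x [ sum_i p_i V_i(x) - C(x) ].
x_star = argmax(lambda x: sum(p * v(x) for p, v in SCENARIOS) - cost(x))

print(conditional, round(naive, 2), x_star)
```

With these made-up curves the conditional optima are 2.3% and 6.0%, so the naive average lands near 3.04%, where the crisis scenario gets zero value, while the portfolio optimum jumps all the way to the 6.0% threshold. A smooth value curve alone would not produce that jump; the threshold does.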

The ladder

Six rungs, each with the conditional spending I would defend if I somehow knew that rung obtained:

1. AI as productivity tool. Better software, search, coding, administration. Important but not strategically decisive. 0.2–0.4% of GDP. Reference: late-stage IT integration.

2. AI as Internet-level general-purpose technology. A 20–30 year diffusion comparable to the internet, cloud, or mobile stack. Underinvestment becomes generational economic dependence. 0.8–1.5% of GDP. Reference: internet buildout, cloud infrastructure, public-sector digitization, telecoms.

3. AI as electricity. Foundational infrastructure compounding for generations. National competitiveness depends on AI leadership the way industrial leadership did in the 19th and 20th centuries. 1.5–3% of GDP. Reference: electrification, semiconductor industrial policy.

4. AI as strategic dual-use technology. AI materially changes cyber offense, vulnerability discovery, intelligence analysis, drone warfare, autonomous systems, and CBRN-adjacent workflows — the kind of capability profile that Anthropic’s Mythos evaluations and similar frontier-model assessments are designed to surface. Preparedness becomes homeland security and military readiness, not innovation policy. 3–5% of GDP. Reference: nuclear weapons, ICBM programs, cyber command.

5. Transformative AI. Full-task substitution for human cognitive labor. Economic doubling time changes from decades to years. Massive labor displacement, political restructuring. 4–6% of GDP. Reference: Manhattan Project plus Apollo plus GI Bill, sustained.

6. Recursive self-improvement / loss-of-control regime. Permanent shifts in human autonomy, possibly catastrophic. 6–12% of GDP, capacity-limited. No clean reference class.

These conditional numbers are not precise estimates, but they are anchored rather than invented. US federal R&D plus procurement during the early Cold War ran 2–3% of GDP for decades. Apollo peaked around 0.7–0.8% of GDP. The Manhattan Project peaked around 0.4% of GDP for three years. Current major-power defense is 2–4%.

The aggregation

The exact probabilities are not the point; the exercise is meant to make the implicit hedge explicit. Suppose one uses an AI-aware-but-not-doomer prior:

p = (0.05, 0.30, 0.20, 0.20, 0.15, 0.10)

Using rung midpoints, the naive weighted average is approximately 3.3% of GDP. Add the irreversibility premium (because some capacities cannot be built after the scenario becomes obvious) and a defensible target lands around 3–4% of GDP, squarely in major-power defense territory.

The result is robust to large reshuffles of the probability vector. Even if you assign zero probability to transformative or loss-of-control scenarios and put all the weight on rungs 1 through 4, the answer is still 1.5–2.5% of GDP. You do not need to believe in imminent ASI to justify serious money. You only need to believe that AI is at least as transformative as the internet and potentially relevant to national security. Both propositions are now mainstream.
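The aggregation and the robustness check are plain arithmetic over the ladder, so they can be reproduced in a few lines. This sketch takes the six conditional ranges and the prior from the text and uses range midpoints. One assumption is its own: "put all the weight on rungs 1 through 4" is read here as zeroing rungs 5 and 6 and renormalizing the remaining probabilities proportionally. Other ways of redistributing that weight would give somewhat different numbers.

```python
# Conditional spending ranges from the ladder, in % of GDP (low, high).
RUNGS = {
    "productivity tool": (0.2, 0.4),
    "internet-level GPT": (0.8, 1.5),
    "electricity": (1.5, 3.0),
    "strategic dual-use": (3.0, 5.0),
    "transformative AI": (4.0, 6.0),
    "RSI / loss of control": (6.0, 12.0),
}
PRIOR = [0.05, 0.30, 0.20, 0.20, 0.15, 0.10]  # the "AI-aware-but-not-doomer" prior


def weighted_midpoint(probs):
    """Probability-weighted average of rung midpoints, renormalizing probs to sum to 1."""
    total = sum(probs)
    mids = [(lo + hi) / 2 for lo, hi in RUNGS.values()]
    return sum(p / total * m for p, m in zip(probs, mids))


baseline = weighted_midpoint(PRIOR)
# Robustness check (assumed reading): zero weight on rungs 5 and 6, renormalize the rest.
no_tai = weighted_midpoint(PRIOR[:4] + [0.0, 0.0])
print(f"baseline {baseline:.2f}% of GDP, rungs 1-4 only {no_tai:.2f}% of GDP")
```

Under these assumptions the baseline comes out at about 3.26% of GDP and the rungs-1-to-4 variant at about 2.15%, inside the 1.5–2.5% band the text gives.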

What this implies for Europe

EU GDP is roughly €19 trillion. Three percent of that is ~€570 billion per year. Two percent is ~€380 billion, coincidentally about what EU member states spent on defense in 2025 (€381 billion, 2.1% of GDP).

Europe’s announced AI initiatives are large by ordinary innovation-policy standards but small by defense-budget standards. The Chips Act targets more than €43 billion in public investment, with broader public-private mobilization aimed at €100 billion+ by 2030. InvestAI’s €200 billion headline is a multi-year mobilization figure, much of it private capital. National announcements like France’s €109 billion AI plan or Germany’s various commitments are largely investment pledges rather than recurring annualized public budgets.

Depending on what one counts, recurring direct public AI-preparedness spending across the EU appears to be in the tens of billions per year, not hundreds. That is roughly an order of magnitude below a defense-scale hedge.
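The size of the gap is easy to check. This sketch uses the text's €19 trillion GDP figure for the 2% and 3% hedge levels. The current-spending values (€20bn, €40bn, €60bn per year) are hypothetical placeholders standing in for "tens of billions", not measured figures.

```python
EU_GDP_BN = 19_000  # EU GDP in billions of euros (roughly €19 trillion, per the text)


def share_of_gdp(pct: float) -> float:
    """Annual budget in € billions for a given percentage of EU GDP."""
    return EU_GDP_BN * pct / 100


hedge_2, hedge_3 = share_of_gdp(2), share_of_gdp(3)  # ~€380bn and ~€570bn per year

# Hypothetical placeholders for "tens of billions per year"; NOT measured figures.
for current_bn in (20, 40, 60):
    print(f"€{current_bn}bn/yr: 2% hedge is {hedge_2 / current_bn:.1f}x, "
          f"3% hedge is {hedge_3 / current_bn:.1f}x")
```

Across those placeholder values, a 3% hedge is roughly 10x to 30x current spending, which is what "roughly an order of magnitude below" amounts to.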

If our defense ministers spent on conventional defense what we currently spend on AI preparedness, we would have no army.

The honest caveat

Above 2% of GDP, the binding constraint shifts from money to talent pipelines, energy supply, fab capacity, and governance institutions. Euros spent on sovereign compute, on alignment research, on grid expansion for datacenters, on European-language model evaluation, or on cyber-defense capacity have very different option-value profiles. The argument here is not a Keynesian multiplier applied to AI. It is: build the capacity now, while you still can. Lead times are long, talent pipelines take a decade, and the firms and labs that will define the frontier are being built today. Capacity that does not exist when the world clarifies cannot be assembled retroactively.

The next war is not the one you can see coming. The next general-purpose technology is not the one you can wait out.

The current embodied AI landscape

(GPT-5.5 + Claude Opus 4.7 collaboration)

FRONTIER GENERAL-PURPOSE ROBOTIC AI

Physical Intelligence — the clearest research leader. Its π0 / π0.5 / π*0.6 line has some of the strongest public evidence for cross-embodiment robot learning, the team has one of the deepest research benches in the field, and at least part of the stack has been open-sourced.

Google DeepMind Robotics — RT-2 helped define the current vision-language-action paradigm for generalist robot control. Gemini Robotics is the most serious big-lab contender, but less visible publicly than the startups.

Figure AI — the strongest humanoid commercialization story. Helix, aggressive vertical integration, and an 11-month Figure 02 deployment at BMW’s Spartanburg plant make Figure the player most visibly translating capability into production.

Genesis AI — credible new frontier entrant, with caveats. French-founded, co-founded by former Mistral researcher Théophile Gervet, and backed by Bpifrance, Eric Schmidt, and Xavier Niel. Its GENE-26.5 release, paired with a 20-DoF robotic hand designed around human anatomy, showed impressive autonomous dexterity: long-horizon cooking, bimanual Rubik’s Cube solving, lab pipetting, wire harnessing, piano playing. The caveats matter: the robot was not performing these tasks cold. The hardest tasks required hundreds of human demonstrations, and some delicate subtasks succeeded only around 50–60% of the time during filming. Real capability, real caveats.

SECOND TIER / IMPORTANT ADJACENT PLAYERS

Boston Dynamics — world-class hardware, increasingly integrating learned control into the stack.

Toyota Research Institute — Russ Tedrake’s group remains one of the most influential academic-industrial manipulation programs.

RAI Institute — Marc Raibert’s research outfit; high quality, lower public output volume than the productized leaders.

Tesla Optimus — hardware iteration is real; software capability remains hard to assess from public evidence.

NVIDIA GR00T / Isaac / Cosmos — less a robot operator than the infrastructure layer: simulation, world models, base models, data pipelines, and dev platforms for much of the field.

Skild AI — important foundation-model player; less public evidence yet on dexterous manipulation than the four above.

SPECIALISTS WORTH TRACKING

Waymo for autonomous driving; Unitree for low-cost robot hardware, especially the G1 humanoid; Sereact in Stuttgart for industrial picking; Mimic Robotics, an ETH spin-off, for dexterous hands; ETH RSL for legged locomotion; 1X, Apptronik, and Agility Robotics for humanoid deployment; Stanford, Berkeley, and CMU for the academic frontier — much of the Physical Intelligence network came from this ecosystem.

MILITARY AI IS A DIFFERENT COMPETITION

Watch Anduril and Shield AI in the US; Helsing, Quantum Systems, TEKEVER, and ARX Robotics in Europe, notably with Ukraine-related operational feedback loops; and Skydio, Saronic, and Palantir on the specialized side.

Miscellaneous

Germany's Dornier DAR (1980s)

-> Israel's IAI Harpy

-> Iran's Shahed-136

-> Russia's Geran-2

-> American innovation

Ukraine

E-Points have already changed the approach to warfare. This is about clear incentives, fair rewards, and the rapid scaling of effective solutions. Military units receive resources based on results: the more targets they destroy, the more points they earn. This is a direct incentive that enables units to strengthen their capabilities with new technologies.

Russian threats

It’s quite tiresome hearing (pro-)Russian people talk about retaliation.

Russia has literally been trying to freeze millions of Ukrainians to death every winter. Russians are conducting what they call “Human Safari”, a drone campaign in which operators hunt individual civilians, vehicles, ambulances, utility workers, farmers, and even people on bicycles, and publish the videos.[1] These are just two examples of widespread Russian terror campaigns. At least 10–15 Ukrainian cities have been reduced to ruins or near-ghost-town status, and dozens more settlements

It’s like someone invades your home to kill you and your family, and when you slash the tires of the invader’s car, your neighbour warns you that the invader might now get really angry and hurt you.

The recent Russian terrorist attacks and threats against civilians just underscore how scared the regime is.

Russians have begun to openly mock the special military operation and criticize Putin in interviews. Russian mainstream TV shows reports about increasing Ukrainian deep strikes. Russian economists are warning of a dramatic collapse. Influential milbloggers predict that Russia might lose.

These attacks and threats are a desperate attempt to appear strong. They are trying to signal that they have everything under control. However, the only thing that is increasingly being brought under control is the Russian logistics routes by a new generation of Ukrainian AI drones.