Links for 2026-03-27

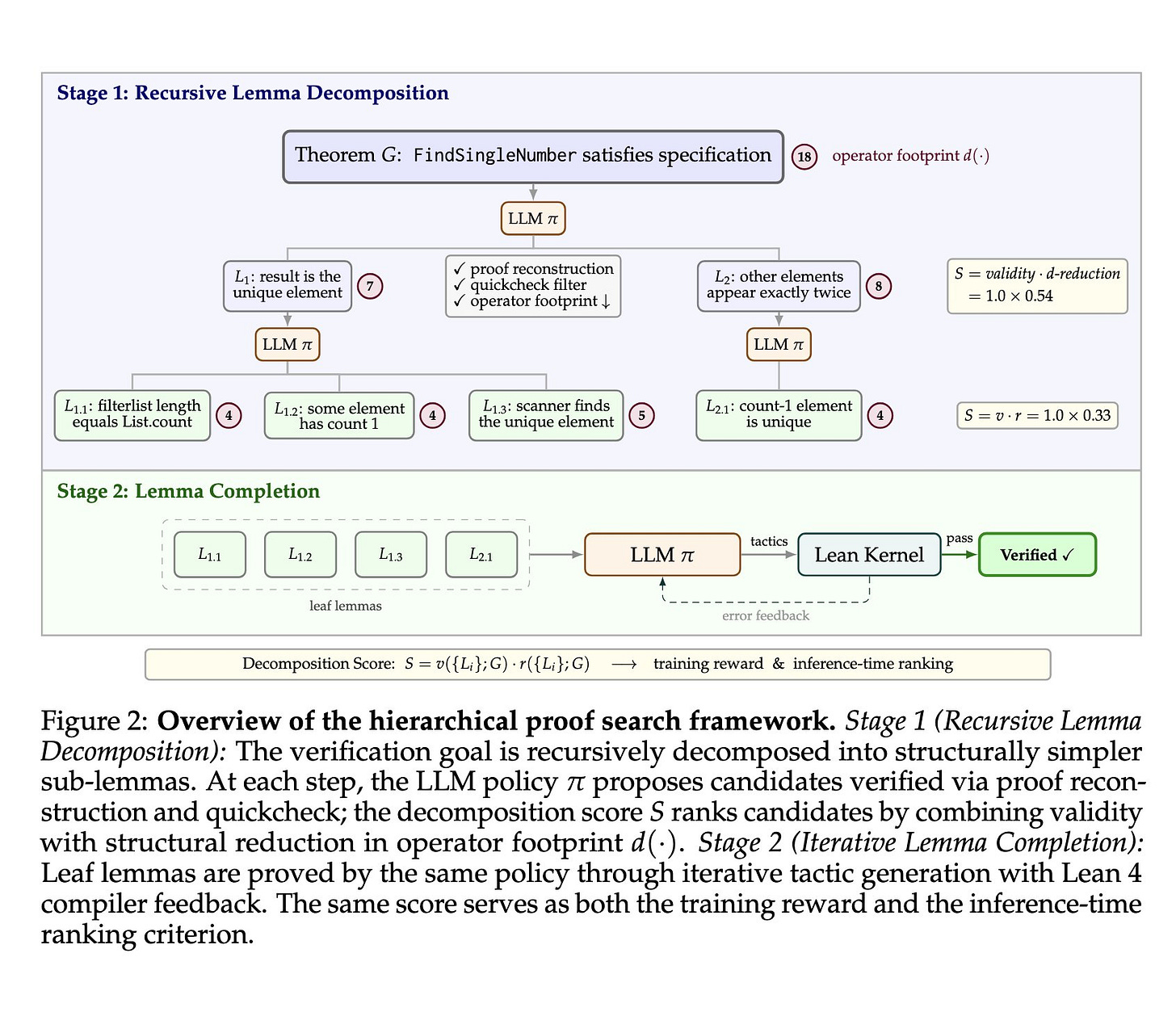

Goedel-Code-Prover: Hierarchical Proof Search for Open State-of-the-Art Code Verification

Given a program and its specification in Lean 4, Goedel-Code-Prover automatically synthesizes formal proofs of correctness. The model first decomposes a large verification goal into smaller lemmas, then proves those lemmas one by one.
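To make the decompose-then-prove idea concrete, here is a toy Lean 4 sketch. It is not taken from the paper: `sumList` and both theorem names are hypothetical, and the proofs are written by hand with core Lean tactics only. The top-level goal (reversing a list does not change its sum) is proved by first isolating a smaller helper lemma about `++`.

```lean
-- Hypothetical program under verification: sum a list of naturals.
def sumList : List Nat → Nat
  | [] => 0
  | x :: xs => x + sumList xs

-- Smaller lemma split off from the main goal: sumList distributes over append.
theorem sumList_append (xs ys : List Nat) :
    sumList (xs ++ ys) = sumList xs + sumList ys := by
  induction xs with
  | nil => simp [sumList]
  | cons x xs ih => simp [sumList, ih, Nat.add_assoc]

-- Top-level specification, proved by reusing the helper lemma.
theorem sumList_reverse (xs : List Nat) :
    sumList xs.reverse = sumList xs := by
  induction xs with
  | nil => simp [sumList]
  | cons x xs ih => simp [sumList, sumList_append, ih, Nat.add_comm]
```

In this toy case the split is obvious. The hard part in real code verification is presumably finding useful intermediate lemmas automatically.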

This research direction might eventually enable AI coding agents to create high-assurance software.

Paper, model, code: https://goedelcodeprover.github.io/

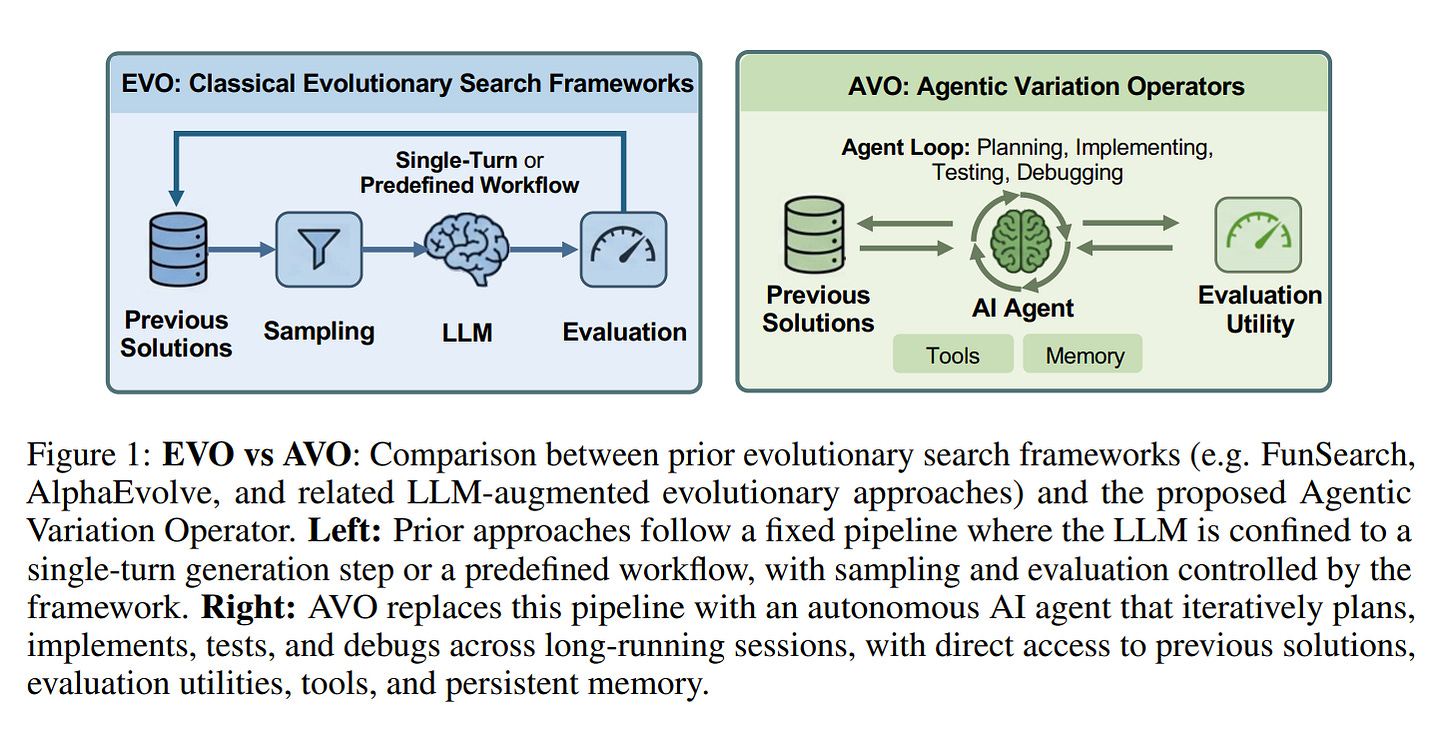

Agentic Variation Operators (AVO): A new way to do evolutionary search with AI

On some of the most optimized attention workloads, agents can now outperform almost all human GPU experts by searching continuously for 7 days with no human intervention inside the optimization loop.

A notable result is that the learned optimizations were not brittle. After evolving a multi-head attention kernel, the authors asked the same agent to adapt it to grouped-query attention (GQA), and it did so in about 30 minutes without human guidance.

Paper: https://arxiv.org/abs/2603.24517

AI

The Terrarium https://www.lesswrong.com/posts/znbfRXHq285nS7NAh/the-terrarium

TRIBE v2 (Trimodal Brain Encoder): A foundation model trained to predict how the human brain responds to almost any sight or sound. Encoding accuracy scales log-linearly, mirroring power laws seen in both artificial intelligence and neuroscience, which suggests that the ceiling for predicting human brain activity has not yet been reached. https://aidemos.atmeta.com/tribev2/

OmniReset: Emergent Dexterity via Diverse Resets and Large-Scale Reinforcement Learning https://weirdlabuw.github.io/omnireset/

LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels https://arxiv.org/abs/2603.19312v1

Someone took Karpathy’s automated research loop and let Claude loose on their old computer vision code while doing weekend chores. It found a major bug and cut error rates in half. https://ykumar.me/blog/eclip-autoresearch/

A language-agnostic “thinking space”? LLMs seem to have a universal language: they convert Chinese and English input into a shared representation in the first few Transformer layers! https://dnhkng.github.io/posts/rys-ii/

316 ARC-AGI tasks solved with zero learning. No neural net, no training, no DSL — just 19th-century projective geometry. Encode grid cell relationships as Plücker lines in P³, find transversals via Schubert calculus, score candidates by geometric incidence. 95% solve rate on the eval set (of non-timeout tasks). Single C file, runs in seconds. https://github.com/khalildh/transversal-arc-solver
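For readers unfamiliar with the geometry, here is a minimal Python sketch of the incidence test that Plücker coordinates make possible. It is not code from the linked repository, which is written in C. The function names are hypothetical, and it covers only the "do two lines in P³ meet?" check, not the transversal search or the Schubert calculus step.

```python
from itertools import combinations

def plucker(a, b):
    """Plücker coordinates (p01, p02, p03, p12, p13, p23) of the line
    through two homogeneous points a and b in P^3 (4-tuples)."""
    return tuple(a[i] * b[j] - a[j] * b[i]
                 for i, j in combinations(range(4), 2))

def incidence(p, q):
    """Bilinear pairing of two lines; it equals det[a, b, c, d] for the
    four points that define them."""
    p01, p02, p03, p12, p13, p23 = p
    q01, q02, q03, q12, q13, q23 = q
    return (p01 * q23 - p02 * q13 + p03 * q12
            + p12 * q03 - p13 * q02 + p23 * q01)

def lines_meet(p, q):
    """Two lines in P^3 intersect (possibly at infinity) iff the pairing is 0."""
    return incidence(p, q) == 0

# Points are written as (x, y, z, 1).
x_axis = plucker((0, 0, 0, 1), (1, 0, 0, 1))
y_axis = plucker((0, 0, 0, 1), (0, 1, 0, 1))
shifted = plucker((0, 0, 1, 1), (0, 1, 1, 1))  # parallel to the y-axis, at z = 1

print(lines_meet(x_axis, y_axis))   # True: they cross at the origin
print(lines_meet(x_axis, shifted))  # False: skew lines
print(lines_meet(y_axis, shifted))  # True: parallel lines meet at infinity
```

With integer inputs the test is exact. A solver that scores candidates "by geometric incidence" could count how many such pairings vanish, though how the repository actually does this is not shown here.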

TurboQuant: Redefining AI efficiency with extreme compression https://research.google/blog/turboquant-redefining-ai-efficiency-with-extreme-compression/

Can you run RL rollouts across datacenters thousands of kilometers apart? Can you run distributed RL training for a 1T-parameter model? https://fireworks.ai/blog/frontier-rl-is-cheaper-than-you-think

“[Using LLM] trained on short aspirational essays written at age 11, we accurately predict [cognitive test scores]... to a similar degree as teacher assessments, and better than genomic data.” [published in 2025] https://www.nature.com/articles/s44271-025-00274-x

Is Gemini 3 Scheming in the Wild? https://www.lesswrong.com/posts/HZn9AZeD2jfXXD2hH/is-gemini-3-scheming-in-the-wild

US intelligence elevates AI as a top global threat in new report https://www.defenseone.com/threats/2026/03/AI-intelligence-new-global-threat/412232/

These Mini Brains Just Learned to Solve a Classic Engineering Problem https://singularityhub.com/2026/03/24/these-mini-brains-just-learned-to-solve-a-classic-engineering-problem/

China names AI tokens after the yuan. Should the US worry for the dollar? https://www.scmp.com/news/china/science/article/3347887/china-names-trillions-ai-token-after-yuan-should-us-worry-dollar

The rise of China’s hottest new commodity: AI tokens https://www.ft.com/content/2567877b-9acc-4cf3-a9e5-5f46c1abd13e?syn-25a6b1a6=1 [no paywall: https://archive.is/DGFYV]

For every dollar hyperscalers earn from AI today, they’re spending twelve dollars to build more capacity. https://tomtunguz.com/12x-bet-on-ai/

Anthropic

Anthropic won a preliminary injunction in its court battle with the Trump administration. The injunction temporarily prevents the administration from enforcing its blacklist-like measures against Anthropic while the case continues.

The specific measures blocked by the judge include the Pentagon’s designation of Anthropic as a “supply-chain risk” and the enforcement of Trump’s directive that federal agencies stop using Anthropic and Claude.

In other news, Anthropic seems to have unintentionally leaked preliminary materials regarding an unreleased model via a misconfigured public CMS. Early-access customers describe the new model as a “step change.” Anthropic confirmed that it is testing a new early-access model, which it claims is a significant improvement over previous Claude models, particularly in terms of reasoning, coding, and cybersecurity.

Quote from the leaked document:

Mythos is also a large, compute-intensive model. It’s very expensive for us to serve, and will be very expensive for our customers to use. We’re working to make the model much more efficient before any general release.

For those reasons, we’re taking a slower, more gradual approach to releasing Mythos than we have with our other models. We’re beginning with a small number of early-access customers, who will explore the model’s cybersecurity applications and report back what they find.

Sources:

Anthropic Wins Injunction in Court Battle With Trump Administration https://www.wsj.com/politics/policy/anthropic-wins-injunction-in-court-battle-with-trump-administration-4cc93351 [no paywall: https://archive.is/ZqwNv]

Anthropic vs. DoW #6: The Court Rules https://www.lesswrong.com/posts/jdWHwsj8GvwgJSxF7/anthropic-vs-dow-6-the-court-rules

Dispatch from Anthropic v. Department of War Preliminary Injunction Motion Hearing https://www.lesswrong.com/posts/CCDQ7PdYHXsJAE5bi/dispatch-from-anthropic-v-department-of-war-preliminary

Exclusive: Anthropic left details of an unreleased model, invite-only CEO retreat, sitting in an unsecured data trove in a significant security lapse https://fortune.com/2026/03/26/anthropic-leaked-unreleased-model-exclusive-event-security-issues-cybersecurity-unsecured-data-store/

Ukraine

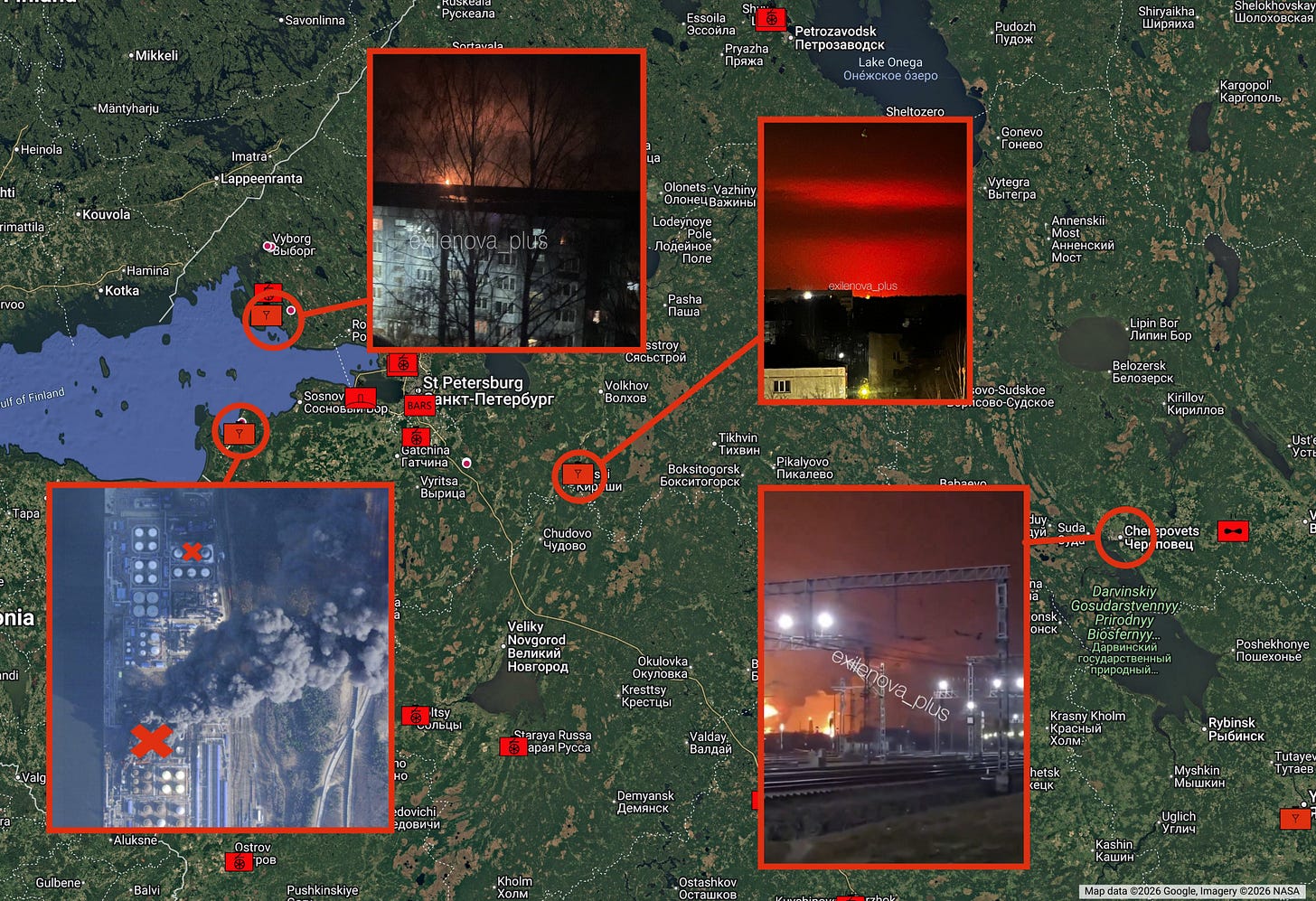

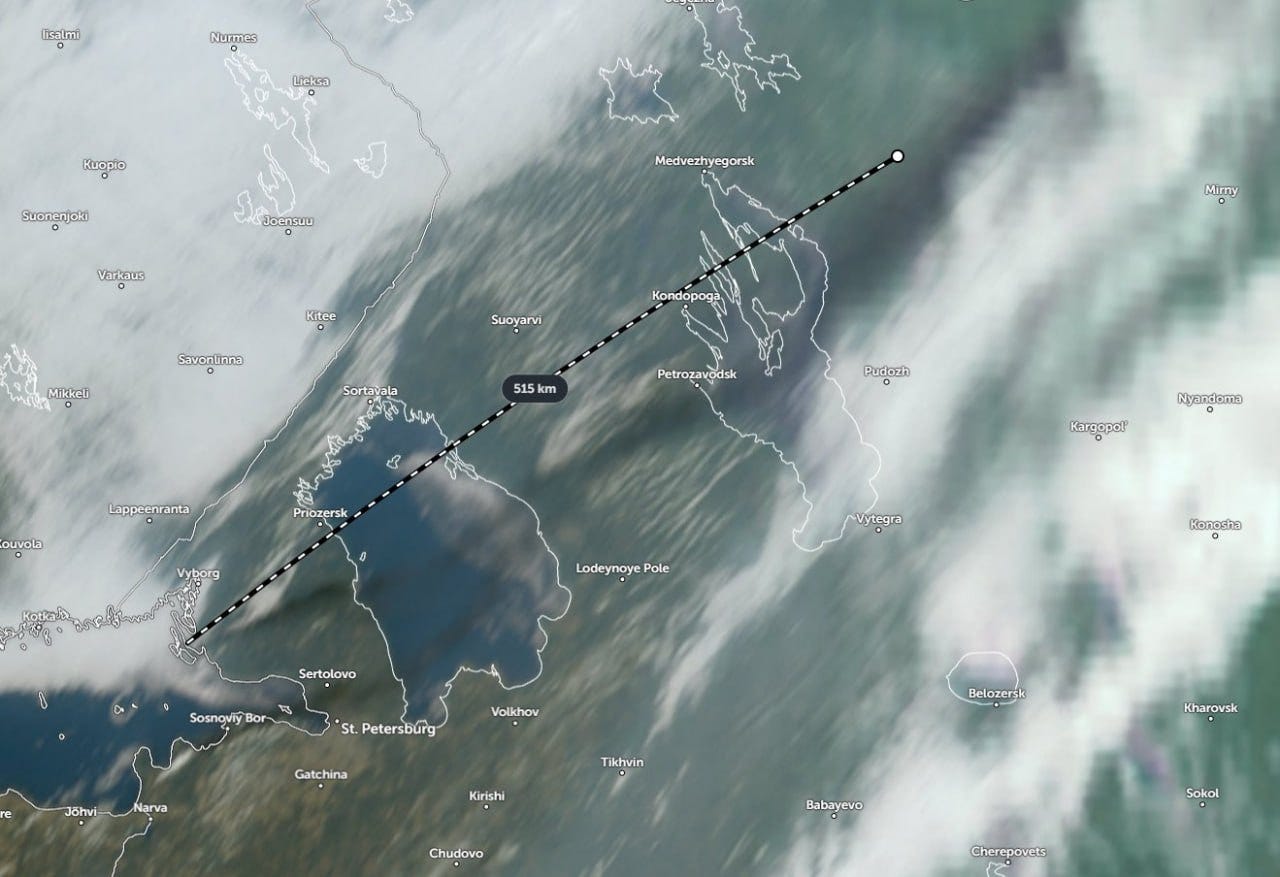

In recent days, Ukraine has shifted from hitting isolated Russian refineries to striking the Baltic export chain itself: Primorsk, Ust-Luga, and the Kirishi refinery tied into that system. That matters because it targets the point where Russian oil becomes cash. Instead of merely damaging processing capacity, Ukraine is hitting export terminals, loading infrastructure, and the logistics that move fuel oil to foreign buyers. Reuters reports that the disruption, together with other recent blows, has halted at least 40% of Russia’s oil export capacity for now, while Transneft is trying to reroute flows and damage at Ust-Luga could force cuts at several refineries.

Zelensky explicitly said Ukraine was intensifying strikes on Russian energy infrastructure after pressure on Russian oil eased because of the Iran war, framing the attacks as Ukraine’s own form of sanctions.

More:

Blinding the Bear and Pulling Its Fangs: Ukraine’s Long-Range Campaign Against the Russian Air Defence https://tochnyi.info/2026/03/blinding-the-bear-and-pulling-its-fangs-ukraines-long-range-campaign-against-the-russian-air-defence/

Gone Fishing: Nordsint and The Insider Reveal the Scheme Supplying Russia with Critical Drone Components from China https://nordsint.org/2026/03/24/gone-fishing-nordsint-and-the-insider-reveal-the-scheme-supplying-russia-with-critical-drone-components-from-china/

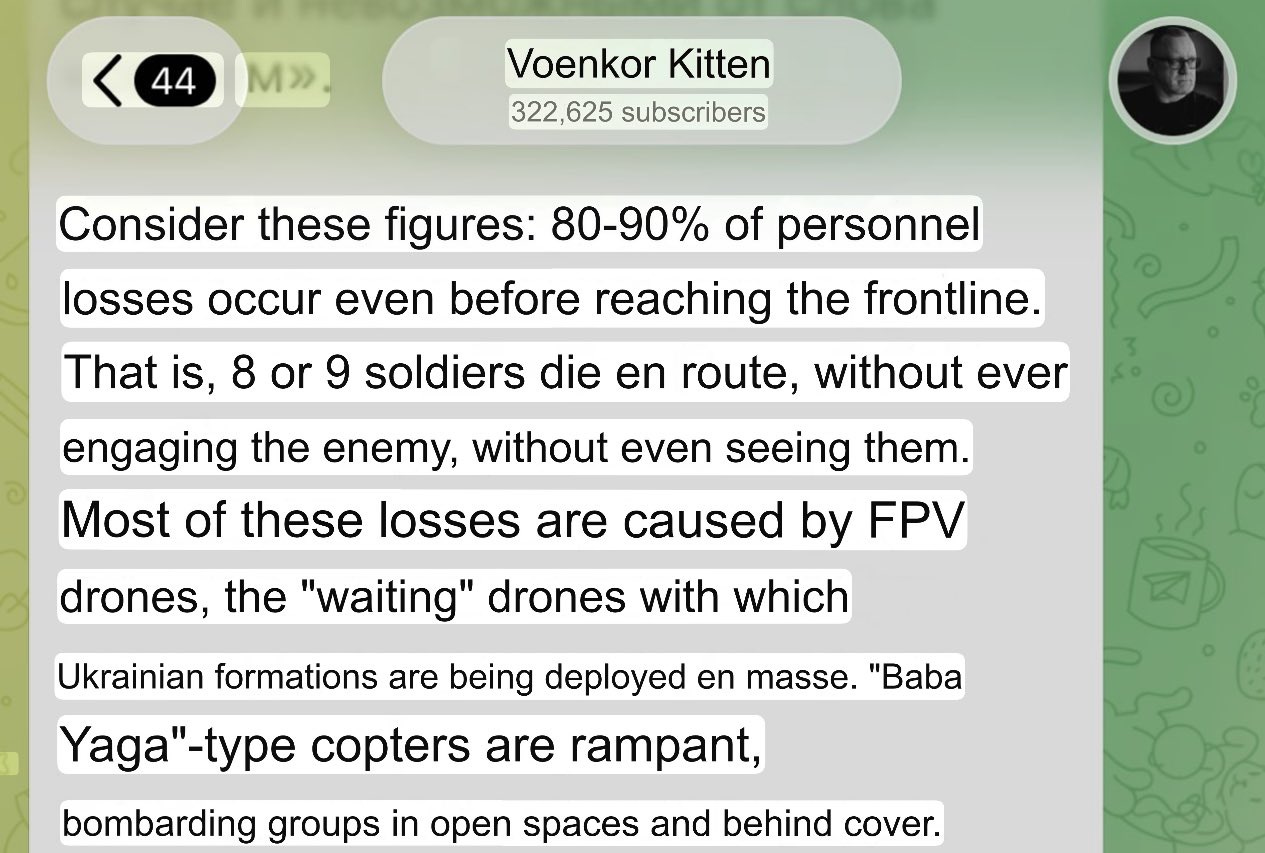

How the Russian Army Fights – Using One Unit as an Example https://dossier.center/minus27/