Links for 2026-03-18

AI

LATENT: Learning Athletic Humanoid Tennis Skills from Imperfect Human Motion Data https://zzk273.github.io/LATENT/

GPT-5.4 Pro solved the first FrontierMath open problem, and the result was then autoformalized by Gauss. FrontierMath open problems are unsolved problems from research mathematics that professional mathematicians have tried and failed to solve. Paper and Lean formalization: https://github.com/math-inc/FrontierMathOpen-Hypergraphs

Leonard Susskind: “Finally I thank the chatbot who gave me the definition of scaffold in section 1.3. It was better than anything I was able to do.” https://arxiv.org/abs/2603.12434

Letting Claude do Autonomous Research to Improve SAEs https://www.lesswrong.com/posts/rbqJoxFZtae9x93mx/letting-claude-do-autonomous-research-to-improve-saes

“How well can AI agents post-train language models? We built a benchmark to find out.” https://posttrainbench.thoughtfullab.com/

Multi-Agent Memory from a Computer Architecture Perspective: Visions and Challenges Ahead https://arxiv.org/abs/2603.10062

XSkill: Continual Learning from Experience and Skills in Multimodal Agents https://arxiv.org/abs/2603.12056

FALCON: Fast-Weight Attention for Continual Learning https://yifanzhang-pro.github.io/FALCON/

You can’t imitation-learn how to continual-learn https://www.lesswrong.com/posts/9rCTjbJpZB4KzqhiQ/you-can-t-imitation-learn-how-to-continual-learn

Learning to Reason without External Rewards https://arxiv.org/abs/2505.19590

EvoX: Meta-Evolution for Automated Discovery https://arxiv.org/abs/2602.23413

Simple Recipe Works: Vision-Language-Action Models are Natural Continual Learners with Reinforcement Learning https://arxiv.org/abs/2603.11653

“We trained Composer to self-summarize through RL instead of a prompt.” https://cursor.com/blog/self-summarization

AdaEvolve: Adaptive LLM-Driven Zeroth-Order Optimization https://skydiscover-ai.github.io/blog-adaevolve.html

Trajectory-Informed Memory Generation for Self-Improving Agent Systems https://arxiv.org/abs/2603.10600

Language Model Teams as Distributed Systems https://arxiv.org/abs/2603.12229

Temporal Straightening for Latent Planning https://agenticlearning.ai/temporal-straightening/

Matching Features, Not Tokens: Energy-Based Fine-Tuning of Language Models https://arxiv.org/abs/2603.12248

Attention Residuals: Rethinking depth-wise aggregation https://github.com/MoonshotAI/Attention-Residuals/blob/master/Attention_Residuals.pdf

Online Experiential Learning for Language Models https://arxiv.org/abs/2603.16856

The First Open-Source Agentic AI Physicist https://theinnermostloop.substack.com/p/the-first-open-source-agentic-ai

Terence Tao: “Damek Davis and I have launched a "distillation challenge", to see how well the 22 million implications in universal algebra generated by the Equational Theories Project can be condensed down to a single "cheat sheet" prompt that a low-powered LLM can use to answer these questions as accurately as possible.” https://terrytao.wordpress.com/2026/03/13/mathematics-distillation-challenge-equational-theories/

Measuring progress toward AGI: A cognitive framework https://blog.google/innovation-and-ai/models-and-research/google-deepmind/measuring-agi-cognitive-framework/

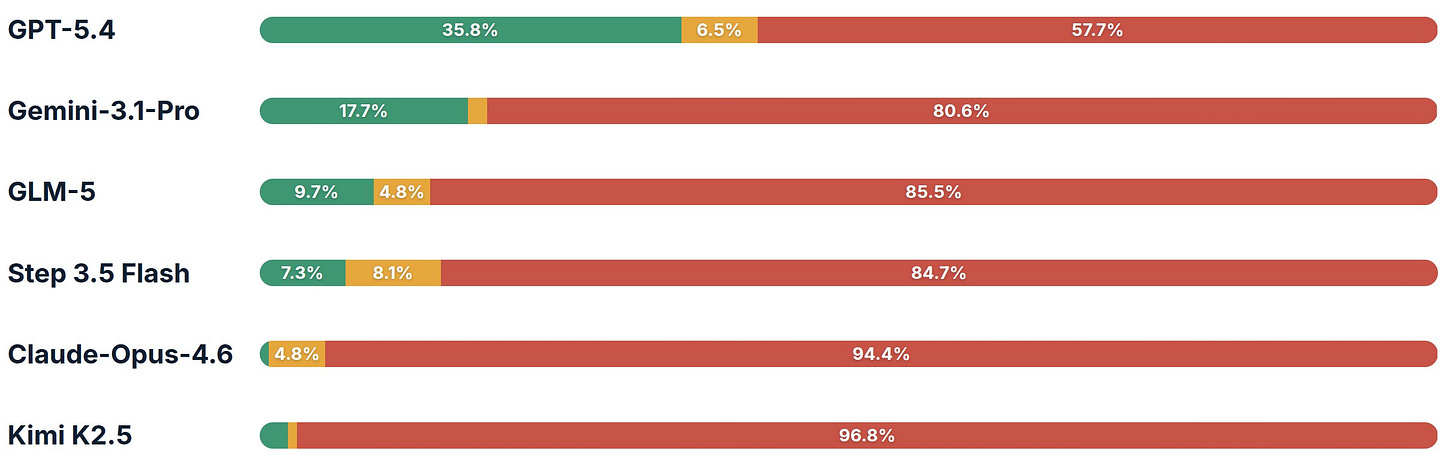

“How do frontier AI agents fail when given the same task multiple times? We ran Claude Opus 4.5, Gemini 2.5 Pro, and GPT 5.4 on GAIA’s 165 real-world tasks with multiple repetitions per model, then examined cases where agents gave wrong answers, disagreed with themselves, or broke under tool failures and input perturbations. Below are the most instructive examples.” https://hal.cs.princeton.edu/reliability/benchmark/gaia/analysis/

Nvidia and Palantir have partnered to create a new “AI operating system” https://www.palantir.com/sovereignaios/

Neural Thickets: Diverse Task Experts Are Dense Around Pretrained Weights https://thickets.mit.edu/

LLMs as Giant Lookup-Tables of Shallow Circuits https://www.lesswrong.com/posts/a9KqqgjN8gc3Mzzkh/llms-as-giant-lookup-tables-of-shallow-circuits

Terrified Comments on Corrigibility in Claude’s Constitution https://www.lesswrong.com/posts/K2Ae2vmAKwhiwKEo5/terrified-comments-on-corrigibility-in-claude-s-constitution

Neuroscience and Psychology

Inside the mind of a top superforecaster: a profile of Malcolm Murray, a Good Judgment superforecaster, showing how he structures questions, updates probabilities, and uses base rates to beat intuition. https://goodjudgment.substack.com/p/meet-superforecaster-malcolm-murray

Scientists revive activity in frozen mouse brains for the first time https://www.nature.com/articles/d41586-026-00756-w [no paywall: https://archive.is/kUHDL]

Belgian Startup ReVision Implant Wins FDA Breakthrough Status for Visual BCI https://insidebci.com/news/2026-03-16-revision-implant-secures-fda-breakthrough-device-designation-for-visual-cortical-prosthesis/

Brain Implants Let Paralyzed People Type Nearly as Fast as Smartphone Users https://singularityhub.com/2026/03/17/brain-implants-let-paralyzed-people-type-with-thought-alone-nearly-as-fast-as-smartphone-users/

Three anesthesia drugs all have the same effect in the brain, MIT researchers find https://news.mit.edu/2026/three-anesthesia-drugs-all-have-same-effect-brain-0317

Statement by the President of the Brain Preservation Foundation “regarding the misleading EON Systems ‘fly upload’ video” https://x.com/KennethHayworth/status/2032604687212392562

Miscellaneous

Rats and mice exposed to cell phone radiation at doses 10 to 100 times stronger than a mobile phone — nine hours a day, for two years — didn’t get more cancer. They lived longer, and they got less disease. That’s the core finding of an independent reanalysis of the raw data from the US National Toxicology Program’s $30 million studies, the most expensive animal radiation experiments ever conducted. https://github.com/zanekoch/airpods-go-brrrrr

Game Theory (Open Access textbook with 165 solved exercises) https://arxiv.org/abs/1512.06808

The paradox of derivatives and integrals https://statmodeling.stat.columbia.edu/2026/03/14/the-paradox-of-derivatives-and-integrals/