Links for 2026-02-04

AI

Microsoft introduces RPG-Encoder, a system that improves how AI understands complex code repositories. In the SWE-bench Verified benchmark, it achieved a state-of-the-art 93.7% accuracy in localizing bugs (Acc@5). https://arxiv.org/abs/2602.02084

Teaching Models to Teach Themselves: Reasoning at the Edge of Learnability https://arxiv.org/abs/2601.18778

Semi-Autonomous Mathematics Discovery with Gemini: A Case Study on the Erdős Problems https://arxiv.org/abs/2601.22401

Recent Advances in LLMs for Mathematics — OpenAI built a scaffold for GPT-5 to solve a particular complex mathematical problem that enabled the model to think for *two days*(!) https://www.youtube.com/watch?v=MH3lG7V7SuU

A small gallery of AlphaEvolve experiments https://alphaevolve-examples.web.app/ae/gallery

Synthetic pretraining https://vintagedata.org/blog/posts/synthetic-pretraining

OpenClaw (formerly MoltBot, formerly ClawdBot) gives LLMs persistence and memory in a way that allows any computer to serve as an always-on agent carrying out your instructions. The memory and personal details are stored locally. You can run popular models remotely through APIs, or locally if you have enough hardware. You communicate with it using any of the popular messaging tools (WhatsApp, Telegram, and so on), so it can be used remotely. https://www.lesswrong.com/posts/aQKBMEvTj3Heidoir/unless-that-claw-is-the-famous-openclaw

New Anthropic paper: The longer the model has to reason, the more unpredictable it becomes: not consistently wrong, not completely random, just pursuing strange goals that are neither systematically aligned nor misaligned. There is an inconsistent relationship between model intelligence and incoherence. But smarter models are often more incoherent. https://alignment.anthropic.com/2026/hot-mess-of-ai/

Anthropic’s “Hot Mess” paper overstates its case: The paper's abstract says that "in several settings, larger, more capable models are more incoherent than smaller models", but in most settings they are more coherent. https://www.lesswrong.com/posts/ceEgAEXcL7cC2Ddiy/anthropic-s-hot-mess-paper-overstates-its-case-and-the-blog

The Dragon Hatchling: The Missing Link between the Transformer and Models of the Brain https://arxiv.org/abs/2509.26507

“Dash is a self-learning data agent that grounds its answers in 6 layers of context and improves with every run.” https://github.com/agno-agi/dash

POPE: Learning to Reason on Hard Problems via Privileged On-Policy Exploration https://arxiv.org/abs/2601.18779

What did we learn from the AI Village in 2025? https://www.lesswrong.com/posts/iv3hX2nnXbHKefCRv/what-did-we-learn-from-the-ai-village-in-2025

China’s genius plan to win the AI race is already paying off https://www.ft.com/content/68f60392-88bf-419c-96c7-c3d580ec9d97 [no paywall: https://archive.is/mQWjj]

Moltbook: After The First Weekend https://www.astralcodexten.com/p/moltbook-after-the-first-weekend

If the Superintelligence were near fallacy https://www.lesswrong.com/posts/tkA9J8RxoEckH7Pop/if-the-superintelligence-were-near-fallacy

“OpenAI chief research officer Mark Chen tells Forbes that in the year ahead it hopes to develop an AI researcher ‘intern’ that can help his team accelerate its ideas. ‘We are heading toward a system that will be capable of doing innovation on its own,’ Altman says. ‘I don’t think most of the world has internalized what that’s going to mean.’” https://www.forbes.com/sites/richardnieva/2026/02/03/sam-altman-explains-the-future/ [no paywall: https://archive.is/FrX0R]

“I bet with full confidence that 2026 will mark the first year that Large World Models lay real foundations for robotics, and for multimodal AI more broadly.” https://x.com/DrJimFan/status/2018754323141054786

“The vision of human-level machine intelligence laid out by Alan Turing in the 1950s is now a reality. Eyes unclouded by dread or hype will help us to prepare for what comes next” https://www.nature.com/articles/d41586-026-00285-6 [no paywall: https://archive.is/ozUOy]

SpaceX acquires xAI, plans to launch a massive satellite constellation to power it https://arstechnica.com/ai/2026/02/spacex-acquires-xai-plans-1-million-satellite-constellation-to-power-it/

Samsung, SK Hynix Exceed Value of Chinese Duo as AI Boom Shifts https://www.bloomberg.com/news/articles/2026-02-03/samsung-sk-hynix-to-top-value-of-chinese-duo-as-ai-boom-shifts [no paywall: https://archive.is/UQLcJ]

Inside an AI start-up’s plan to scan and dispose of millions of books https://www.washingtonpost.com/technology/2026/01/27/anthropic-ai-scan-destroy-books/ [no paywall: https://archive.is/s7Ld8]

US stocks drop on fears AI will hit software and analytics groups https://www.ft.com/content/48ec5657-c2e7-4111-a236-24a96a8d49e7 [no paywall: https://archive.is/ORjiw]

Miscellaneous

The Meta-Anthropic Argument https://www.lesswrong.com/posts/SgxkGoT8tvxREszoA/the-meta-anthropic-argument

How a unique class of neurons may set the table for brain development https://news.mit.edu/2026/how-neurons-may-set-table-for-brain-development-0202

“In 2024, the total installed electricity capacity of the planet—every coal, gas, hydro, and nuclear plant and all of the renewables—was about 10 terawatts. The Chinese solar supply chain can now pump out 1 terawatt of panels every year.” https://www.wired.com/story/china-renewable-energy-revolution/ [no paywall: https://archive.is/xzEyw]

Richard Ngo proposes reframing the goals of intelligent agents in terms of “goal-models” rather than the traditional utility functions. https://www.lesswrong.com/posts/MEkafPJfiSFbwCjET/on-goal-models

Basics of How Not to Die https://www.lesswrong.com/posts/dHFrKjgTC3zPfpodr/basics-of-how-not-to-die

A review of Ada Palmer’s 2025 pop-history book, Inventing the Renaissance. https://www.lesswrong.com/posts/YZS6f32CgNqTzb7Zn/inventing-the-renaissance-review

Polynomial equations are programs

Any computer program can be hidden inside a single polynomial equation.

If you force an integer polynomial to equal zero, you can “wire together” logical conditions in a way that behaves like code.

Logic using only “= 0”

Think of a statement A as “this integer expression equals 0”.

A OR B

If a product is zero, at least one factor must be zero:

A * B = 0

A AND B

A square is never negative, so the only way the sum of two squares is zero is if both are zero:

A^2 + B^2 = 0

Example: “x is even AND (x is 5 OR 6)”

“x is even” means: there exists an integer k with x=2k, i.e.

(x - 2k) = 0

“x is 5 OR 6” means:

(x-5)(x-6) = 0

Combine with AND:

(x - 2k)^2 + ((x-5)(x-6))^2 = 0

This single equation has an integer solution exactly when x equals 6.
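A quick brute-force check of the combined equation, as a minimal sketch. The search window and the names `combined` and `solutions` are illustrative choices, not part of the argument.

```python
# Brute-force check of (x - 2k)^2 + ((x - 5)(x - 6))^2 = 0 over a small window.
# The bounds -50..50 are an arbitrary illustrative choice.

def combined(x: int, k: int) -> int:
    """Left-hand side of the combined AND equation."""
    return (x - 2 * k) ** 2 + ((x - 5) * (x - 6)) ** 2

solutions = [
    (x, k)
    for x in range(-50, 51)
    for k in range(-50, 51)
    if combined(x, k) == 0
]
print(solutions)  # [(6, 3)]
```

The only hit is x = 6 with witness k = 3. For x = 5 the second square vanishes, but no integer k makes 5 - 2k equal to zero.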

How do we scale this up?

A program run is a long list of configurations (state + memory). Since an equation can’t run a program, we encode the entire history of a run (the “trace”) as data.

Then the polynomial acts like a strict auditor. It bundles thousands of AND-conditions that check:

Did step 1 follow the rules to reach step 2?

Did step 2 correctly lead to step 3?

…

Did some step reach a halting state?

The equation doesn’t “execute” anything. It asks a yes/no question: Does there exist a complete trace that passes every check?

If yes, the polynomial can be made to equal zero. If no, it can’t.
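The auditor idea can be sketched on a toy machine. Everything here is a simplifying assumption: the "program" is a hypothetical countdown whose only rule is "next value = current value - 1", it halts at 0, and the trace is a plain Python list rather than data packed into integer unknowns.

```python
from typing import Sequence

# Toy "auditor" for a hypothetical countdown machine (an assumption made for
# illustration): each step must subtract 1, and the run halts at 0.

def audit(start: int, trace: Sequence[int]) -> int:
    """Sum of squared checks; the result is 0 iff every check passes."""
    total = (trace[0] - start) ** 2          # the trace begins at the input
    for cur, nxt in zip(trace, trace[1:]):
        total += (nxt - (cur - 1)) ** 2      # each step follows the rule
    total += trace[-1] ** 2                  # the last configuration halts
    return total

print(audit(3, [3, 2, 1, 0]))  # 0: a valid halting trace
print(audit(3, [3, 2, 0]))     # 1: one step breaks the rule
```

Because every term is a square, a single failed check makes the total positive, which is the AND trick from earlier applied to a whole list of conditions.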

P.S. How do you fit a whole computer memory into a single number?

Think of prime numbers as “slots.” Math guarantees that every positive integer has a unique prime-factor signature. So to store the list (3,1,4), you can compute 2^3 * 3^1 * 5^4 = 15000. Because prime factorization is unique, 15000 can only ever be decoded back into 3,1,4. One integer can perfectly store a whole structured state.
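Here is a minimal sketch of that prime-slot encoding. The helper names `first_primes`, `encode`, and `decode` are made up for illustration, and the entries are assumed to be non-negative integers.

```python
# Prime-power encoding of a short list of non-negative integers.
# Trial division keeps the sketch dependency-free; it is not meant to be fast.

def first_primes(n):
    """Return the first n primes."""
    primes = []
    candidate = 2
    while len(primes) < n:
        if all(candidate % p for p in primes):
            primes.append(candidate)
        candidate += 1
    return primes

def encode(values):
    """Store values[i] as the exponent of the i-th prime."""
    code = 1
    for p, v in zip(first_primes(len(values)), values):
        code *= p ** v
    return code

def decode(code, length):
    """Read back the exponents of the first `length` primes."""
    values = []
    for p in first_primes(length):
        exponent = 0
        while code % p == 0:
            code //= p
            exponent += 1
        values.append(exponent)
    return values

print(encode([3, 1, 4]))  # 15000
print(decode(15000, 3))   # [3, 1, 4]
```

The list length is passed to `decode` explicitly because a trailing 0 entry leaves the product unchanged, so the integer alone does not say how many slots were in use.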

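P.P.S. This idea was used to settle Hilbert’s tenth problem in the negative: no algorithm can decide whether an arbitrary integer polynomial equation has integer solutions. https://en.wikipedia.org/wiki/Hilbert%27s_tenth_problem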

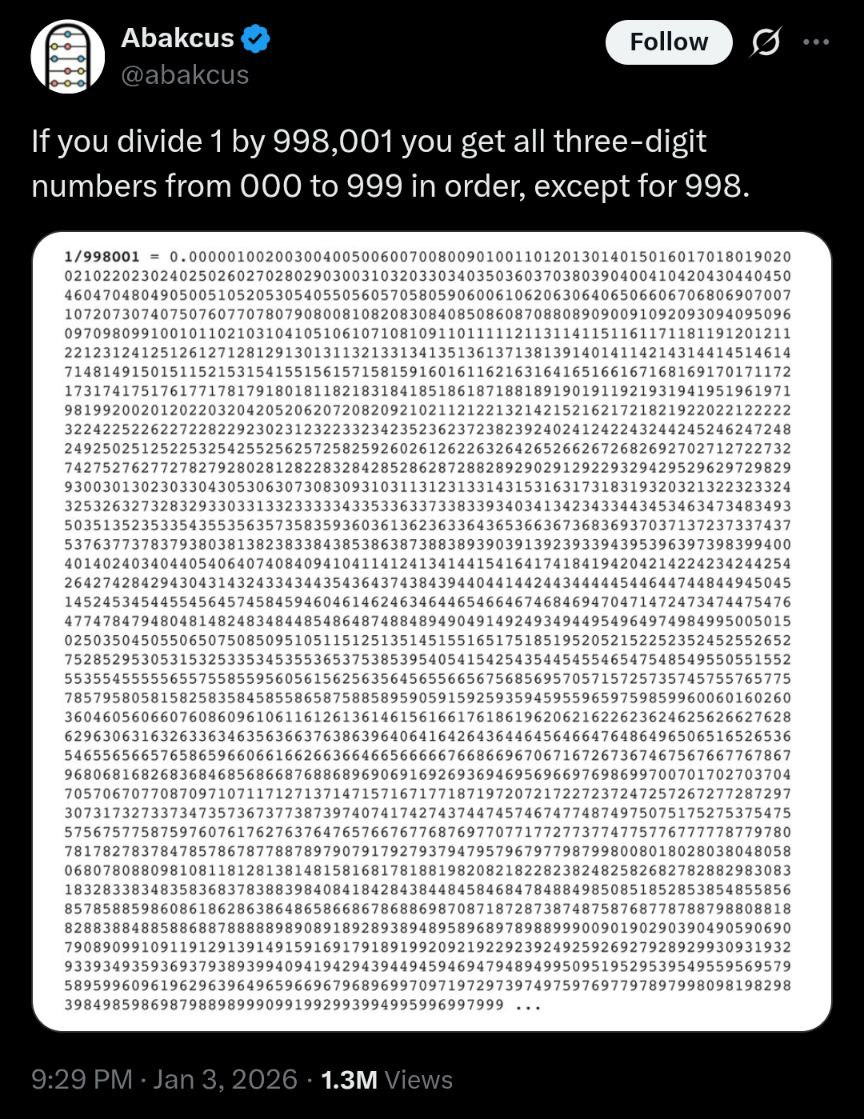

1/998001

998001 = 999^2 = (1000-1)^2

Base-1000: 0.(000)(001)(002)(003)(004)... = sum k>=1 d_k*1000^(-k) where d_k is an element of {0,1,...,999} and is printed with three digits.

Geometric series: 1/999 = 1/(1000-1) = (1/1000)(1/(1-(1/1000))) = sum n>=1 1000^(-n) = 0.(001)(001)(001)...

Square it: (sum n>=1 1000^(-n))^2 = sum m,n>=1 1000^(-(m+n)).

For k>=2, the coefficient of 1000^(-k) is the number of pairs (m,n) with m+n=k, which is k-1.

1/(999^2) = sum k>=2 (k-1)1000^(-k)

then (d_1, d_2, d_3,...) = (0,1,2,...) (note: d_1 is 0 because the sum starts at k=2).

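The pattern d_k = k-1 breaks at k=1001, where the block value reaches 1000 and no longer fits in three digits. The carry propagates back, turning 999 into 000 and 998 into 999, so the printed blocks run ..., 996, 997, 999, 000, 001, ... and 998 is the only number skipped.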
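The block pattern, including the skipped 998, can be checked with exact integer arithmetic. This is a small sketch; `BLOCKS` is an illustrative constant chosen to reach just past the first wrap-around.

```python
# Print three-digit blocks of 1/998001 using exact integer arithmetic.
BLOCKS = 1002  # number of three-digit blocks to compute (illustrative)

# floor(10^(3*BLOCKS) / 998001), zero-padded, gives the first 3*BLOCKS decimals.
digits = str(10 ** (3 * BLOCKS) // 998001).zfill(3 * BLOCKS)
blocks = [digits[i:i + 3] for i in range(0, len(digits), 3)]

print(blocks[:5])        # ['000', '001', '002', '003', '004']
print(blocks[996:1002])  # ['996', '997', '999', '000', '001', '002']
```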

The Map That Rewrites the Territory

A thermostat varies power usage to keep a room at a constant temperature. The power causes the heat, yet the power can show no correlation with indoor temperature because the thermostat keeps the temperature essentially constant.

If a stock reliably dips every Tuesday, traders will buy earlier to profit from the rebound. Their front-running pushes the price up before Tuesday, and the “Tuesday dip” gets competed away.

If every trader uses the same risk model and it flags “sell when volatility rises,” then volatility spikes can trigger synchronized selling, making the model’s warning come true.

If people believe a bank is in trouble, they rush to withdraw cash, and the rush itself creates the trouble they feared.

When a market assigns a startup a high probability of success, it can attract talent, capital, and partnerships, creating the conditions that make success more likely.

If a weather report says “huge lines at the ski resort tomorrow,” many people stay home, and the lines never happen.

If employees know their keystrokes are being monitored, their typing patterns change, so the measurement stops reflecting normal work.

If a call center is judged by “shortest average call time,” agents learn to end calls quickly, and the metric improves while customer problems get worse.

If teachers’ pay depends heavily on test scores, schools start teaching to the test (or gaming who gets tested), and the scores stop meaning what they used to mean.

If a government announces a fuel tax for next month, drivers change their behavior today. The historical correlation between price and consumption breaks immediately because people react to their expectations of the future, rendering the old model obsolete before the tax even starts.

Move a little toward swing voters to win the next election. Winning rewards the move, shifting the party’s baseline. Repeat, and you end up far from where you started.

Click slightly edgier content for novelty. The algorithm shows more of it, and your tastes adapt. Repeat, and both you and the feed “learn” a stronger version of the same preference.

Grant temporary powers for a crisis. Agencies, tools, and expectations form around them, making rollback harder than renewal. Repeat, and the “temporary” becomes normal.

The more widely an antibiotic is used to kill bacteria, the more it selects for resistant strains. The act of using the “cure” eventually renders it ineffective.

The British government offered a bounty for every dead cobra in Delhi to reduce the population. Locals began breeding cobras to collect the bounty. When the program ended, they released the snakes, increasing the total population.

The Minimal Atoms

On a flat plane, some transformations can move shapes around without stretching them at all. These are the distance-preserving motions (isometries): translations, rotations, reflections (and the slightly less famous glide reflection).

It turns out that reflections alone generate all of them. In fact, any plane isometry can be done with at most three reflections.

Step 0: Why triangles pin down motion

Take three points A, B, C that aren’t collinear (they form a triangle). Then there cannot be two different points P != Q that have the same distances to all three.

Why? If a point X has equal distance to P and Q, then X lies on the perpendicular bisector of segment PQ. So if A, B, C were all equally far from P and Q, they’d all lie on that same bisector, meaning they’d be collinear. Contradiction.

So, a point is uniquely determined by its distance to three non-collinear points. That means: once you know where an isometry sends a triangle’s three vertices, you know what it does to every point.

The 3-reflection construction

Suppose we have a blue triangle ABC and a red triangle A’B’C’ of the same shape and size (congruent). We want a distance-preserving motion that maps the blue triangle onto the red one.

We’ll build it using reflections only:

Reflection 1:

Reflect across the perpendicular bisector of segment AA’.

This sends A exactly to A’.

Reflection 2:

If B has not already landed on B’, reflect across the perpendicular bisector of the segment joining the current position of B to B’.

Crucial point: since distances are preserved and the triangles are congruent, A’ is the same distance from those two points. So A’ lies on that bisector, and is not moved by this reflection.

Result: B lands exactly on B’ and A’ stays put.

Reflection 3:

If C still doesn’t match C’, reflect across the perpendicular bisector of the current C and C’.

Again, because distances are preserved and now A’ and B’ already match, both A’ and B’ are equidistant from C and C’, so they lie on the bisector line and don’t move.

Result: C lands on C’, with A’, B’ unchanged.

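So we’ve matched the whole triangle using at most 3 reflections. By Step 0, an isometry is determined by what it does to the triangle’s vertices, so this chain of reflections is the same motion everywhere on the plane, not just on the triangle.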
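The construction can be sketched in a few lines, treating points as complex numbers. The function names, the tolerance `tol`, and the sample triangle and rotation are illustrative assumptions.

```python
# Three-reflection construction with points represented as complex numbers.

def reflect_across_bisector(p, q):
    """Return the reflection whose mirror is the perpendicular bisector of pq."""
    d = q - p
    m = (p + q) / 2
    def reflect(x):
        # Signed component of (x - m) along d, in units of d.
        t = ((x - m) * d.conjugate()).real / abs(d) ** 2
        return x - 2 * t * d
    return reflect

def match_triangle(src, dst, tol=1e-9):
    """Map src onto dst vertex by vertex, skipping vertices that already match."""
    pts = list(src)
    mirrors = []
    for i in range(3):
        if abs(pts[i] - dst[i]) > tol:
            r = reflect_across_bisector(pts[i], dst[i])
            mirrors.append(r)
            pts = [r(x) for x in pts]
    return mirrors, pts

def apply(mirrors, x):
    """Apply the reflections in order to an arbitrary point."""
    for r in mirrors:
        x = r(x)
    return x

# Example: the target is the source rotated by 90 degrees and shifted.
src = [0 + 0j, 1 + 0j, 0 + 1j]
dst = [1j * z + (2 + 1j) for z in src]
mirrors, moved = match_triangle(src, dst)
print(len(mirrors))  # 2
```

A rotation keeps orientation, so two reflections suffice here. A glide reflection such as z -> conj(z) + 3 would use all three.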

This “minimal set generates a whole world” idea is everywhere:

In Boolean logic, you don’t need {AND, OR, NOT}. A single operator like NAND can express everything.

In number theory, every integer >1 has a unique factorization into primes.

In signal processing, Fourier analysis builds signals out of sine waves.

In linear algebra, a basis generates a whole vector space.

Any permutation of n objects can be built from repeatedly swapping two items. And amazingly, for n >= 3, you can generate every shuffle using just two moves: “rotate everyone one step” plus “swap two neighboring items”.

A CPU that can only subtract two numbers and jump to another step if the result is negative can still be fully programmable. Everything else (addition, loops, if-statements) can be built from that.

In human vision, the brain reduces the continuous spectrum to three cone cell types and reconstructs color from that.
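The two-move claim about shuffles is easy to test by brute force for small n. This sketch assumes the swap acts on two neighboring positions; `generated` is a hypothetical helper name.

```python
from math import factorial

def generated(n, swap):
    """Arrangements of range(n) reachable via 'rotate by one' and one fixed swap."""
    i, j = swap
    start = tuple(range(n))
    seen = {start}
    frontier = [start]
    while frontier:
        cur = frontier.pop()
        rotated = cur[1:] + cur[:1]                      # rotate everyone one step
        swapped = list(cur)
        swapped[i], swapped[j] = swapped[j], swapped[i]  # swap two fixed positions
        for nxt in (rotated, tuple(swapped)):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(nxt)
    return seen

for n in range(3, 7):
    print(n, len(generated(n, (0, 1))), factorial(n))  # the two counts agree

print(len(generated(4, (0, 2))))  # 8
```

The last line shows why the choice of swap matters: with four items, rotating plus swapping positions 0 and 2 reaches only 8 of the 24 arrangements.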

Small toolkits. Infinite consequences.

The polynomial encoding section is wild. The idea that computation can be reframed as existence proofs for integer solutions is one of those things that feels obvious in hindsight but totally reframes how we think about programs. I've been working with verification systems lately and the connection to Hilbert's tenth problem makes me wonder if there's untapped potential in using polynomial constraints for proving program correctness.