Links for 2026-01-21

Societies of Agents

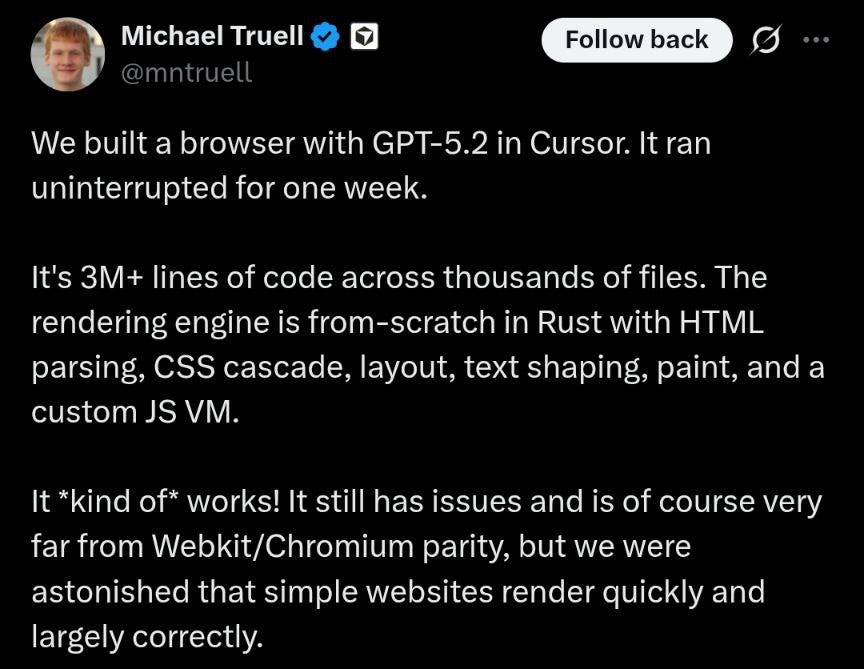

Cursor autonomously coded a web browser from scratch by running hundreds of concurrent coding agents for weeks.

They achieved this by using specialized agents they call planners, workers, and judges:

Planners: Continuously explore the codebase, create tasks, and spawn sub-planners for specific areas.

Workers: Focus purely on executing assigned tasks without worrying about broader coordination.

Judges: Determine when to continue or restart cycles to prevent tunnel vision.

Model Selection: GPT-5.2 outperformed Opus 4.5 (which took shortcuts) and GPT-5.1-codex at long-running tasks and planning.

Read more: https://cursor.com/blog/scaling-agents
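The Cursor post describes these roles in prose. Below is a toy Python sketch of one way a planner/worker/judge loop could be wired together. Everything in it is a hypothetical illustration, not Cursor's implementation: the `PROJECT` layout, the `planner`, `worker`, and `judge` functions, and the rule that the judge restarts with a fresh plan until a cycle budget runs out. Real workers would be LLM coding agents, not functions that flip a flag.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Task:
    area: str
    name: str  # assumed unique across the project in this toy example
    done: bool = False


# Hypothetical project layout: area -> (tasks owned by that area, sub-areas).
PROJECT = {
    "browser": (["window"], ["html", "css"]),
    "html": (["tokenizer", "parser"], []),
    "css": (["selectors", "layout"], []),
}


def planner(area: str, project: dict) -> list[Task]:
    """Explore one area, create its tasks, and spawn a sub-planner per sub-area."""
    own_tasks, sub_areas = project[area]
    tasks = [Task(area, name) for name in own_tasks]
    for sub in sub_areas:
        tasks.extend(planner(sub, project))  # recursive sub-planner
    return tasks


def worker(task: Task, cycle: int) -> None:
    """Execute one assigned task with no knowledge of any other task."""
    # Stand-in for real agent work: "layout" is simulated to fail on the first cycle.
    if task.name != "layout" or cycle > 0:
        task.done = True


def judge(plan: list[Task], cycle: int, max_cycles: int) -> str:
    """Return "stop" when everything is done or the budget is spent, else "restart"."""
    if all(t.done for t in plan):
        return "stop"
    return "restart" if cycle + 1 < max_cycles else "stop"


def run(project: dict, root: str = "browser", max_cycles: int = 3) -> tuple[list[Task], int]:
    completed: set[str] = set()
    cycle = 0
    while True:
        # Fresh plan every cycle; tasks finished in earlier cycles are marked done.
        plan = planner(root, project)
        for t in plan:
            t.done = t.name in completed
        pending = [t for t in plan if not t.done]
        # Workers run concurrently, each on its own task object.
        with ThreadPoolExecutor(max_workers=4) as pool:
            list(pool.map(lambda t: worker(t, cycle), pending))
        completed |= {t.name for t in plan if t.done}
        if judge(plan, cycle, max_cycles) == "stop":
            return plan, cycle + 1
        cycle += 1


plan, cycles = run(PROJECT)
print(cycles, [t.name for t in plan if t.done])
```

Re-planning from scratch each cycle, instead of patching the old plan, is one simple way to model the judge's job of preventing tunnel vision. In this toy run the simulated "layout" task only succeeds on the second cycle, so the judge restarts once and then stops.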

Societies of Thought

How do reasoning models achieve their performance? A new paper by Google shows that they generate internal multi-agent debates the authors call “societies of thought.”

Reasoning models spontaneously develop conversational behaviors in which they question themselves, shift perspectives, engage in conflict, and reconcile opposing views.

The simulated personas within these debates exhibit very different personalities and background expertise.

Paper: https://arxiv.org/abs/2601.10825

AI

Dario Amodei (Anthropic) on reaching “a model that can do everything a human can do at a level of a Nobel laureate across many fields”, aka AGI: “It’s very hard for me to see how it could take longer than a few years.” https://www.youtube.com/watch?v=mmKAnHz36v0

‘What I see is this smooth exponential line. Similar to Moore’s law for compute, we basically have a Moore’s law for Intelligence, where the model is getting more and more cognitively capable every few months. That march has just been constant.’ https://www.youtube.com/watch?v=K7F6ohcBJus

China just ‘months’ behind U.S. AI models, Google DeepMind CEO says https://www.cnbc.com/amp/2026/01/16/google-deepmind-china-ai-demis-hassabis.html

Math Inc’s agent, Gauss, just autoformalized the proof of the Riemann Hypothesis for curves over finite fields https://github.com/math-inc/RiemannHypothesisCurves

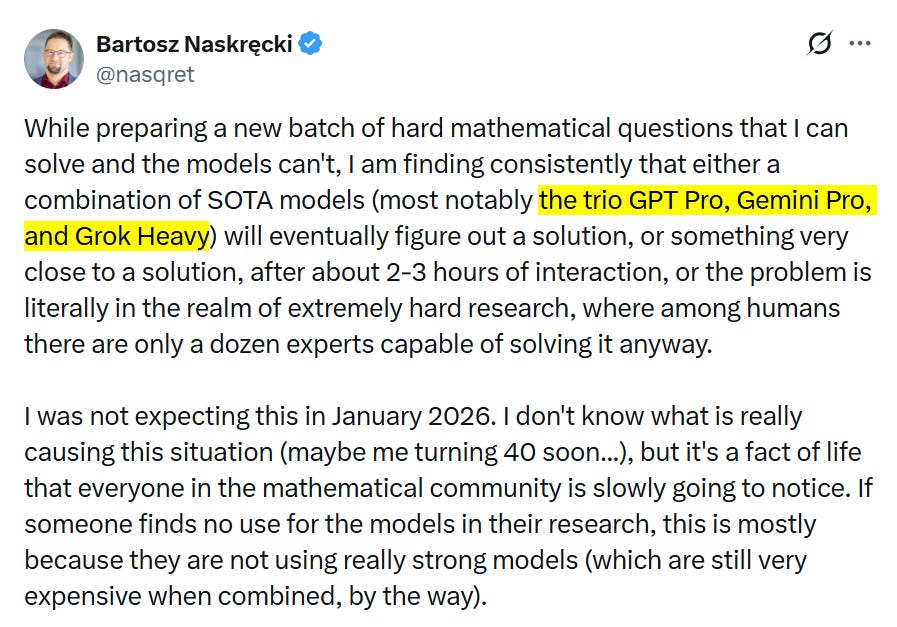

AI models are starting to crack high-level math problems https://techcrunch.com/2026/01/14/ai-models-are-starting-to-crack-high-level-math-problems/

A business that scales with the value of intelligence: “As intelligence moves into scientific research, drug discovery, energy systems, and financial modeling, new economic models will emerge. Licensing, IP-based agreements, and outcome-based pricing will share in the value created.” https://openai.com/index/a-business-that-scales-with-the-value-of-intelligence/

Active Context Compression: Autonomous Memory Management in LLM Agents https://arxiv.org/abs/2601.07190

Flow Equivariant World Models: Memory for Partially Observed Dynamic Environments https://flowequivariantworldmodels.github.io/

Long-Horizon Model-Based Offline Reinforcement Learning Without Conservatism https://arxiv.org/abs/2512.04341

Cautious Weight Decay (CWD), a one-line, optimizer-agnostic modification that applies weight decay only to parameter coordinates whose signs align with the optimizer update. https://arxiv.org/abs/2510.12402
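To make the one-line change concrete, here is a minimal plain-Python sketch. It assumes the decoupled form x ← x − lr·(u + λ·𝟙[u·x ≥ 0]·x), applied per coordinate, where u is whatever step direction the base optimizer produces. The function names, the list-of-floats representation, and the tie-breaking at u·x = 0 are illustrative assumptions, not the paper's reference code.

```python
def cautious_weight_decay_step(params: list[float], update: list[float],
                               lr: float, wd: float) -> list[float]:
    """One parameter step with cautious weight decay (illustrative sketch).

    `update` is the base optimizer's step direction u, applied as x <- x - lr * u
    (e.g. the raw gradient for SGD, or m_hat / (sqrt(v_hat) + eps) for Adam).
    """
    new_params = []
    for x, u in zip(params, update):
        # Apply decay only where sign(u) agrees with sign(x), i.e. where the
        # optimizer step already moves x toward zero. Treating u * x == 0 as
        # "agree" is an assumption; at x == 0 the decay term vanishes anyway.
        mask = 1.0 if u * x >= 0 else 0.0
        new_params.append(x - lr * (u + wd * mask * x))
    return new_params


def decoupled_weight_decay_step(params: list[float], update: list[float],
                                lr: float, wd: float) -> list[float]:
    """Standard decoupled (AdamW-style) decay for comparison: decays every coordinate."""
    return [x - lr * (u + wd * x) for x, u in zip(params, update)]


params = [1.0, -2.0, 3.0]
update = [0.5, 0.5, -1.0]
print(cautious_weight_decay_step(params, update, lr=0.1, wd=0.1))
print(decoupled_weight_decay_step(params, update, lr=0.1, wd=0.1))
```

In this example only the first coordinate, where the update and the parameter share a sign, is decayed; the other two take the plain optimizer step, whereas standard decoupled decay shrinks all three.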

A physics-informed GNN that learns Newton’s laws from data—and extrapolates to systems 35× larger https://www.nature.com/articles/s41467-025-67802-5

A self-correcting multi-agent LLM framework for language-based physics simulation and explanation https://www.nature.com/articles/s44387-025-00057-z

The assistant axis: situating and stabilizing the character of large language models https://www.anthropic.com/research/assistant-axis

AI’s Hacking Skills Are Approaching an ‘Inflection Point’ https://www.wired.com/story/ai-models-hacking-inflection-point/ [no paywall: https://archive.is/nW59t]

How Econ 101 makes us blinder on trade, morals, jobs with AI – and on marginal costs https://www.lesswrong.com/posts/DATWTYBs6sALbrnvi/how-econ-101-makes-us-blinder-on-trade-morals-jobs-with-ai

Nvidia And This Bill Gates-Backed Startup Are Building Fusion Power With AI https://www.forbes.com/sites/the-prototype/2026/01/09/nvidia-and-this-bill-gates-backed-startup-are-building-fusion-power-with-ai/

As AI Investments Surge, CEOs Take the Lead https://www.bcg.com/publications/2026/as-ai-investments-surge-ceos-take-the-lead

Anthropic’s Claude Cowork Is an AI Agent That Actually Works https://www.wired.com/story/anthropic-claude-cowork-agent/ [no paywall: https://archive.is/eESdQ]

The release and viral success of an AI-powered office agent pushed down software stocks and showed how much AI startups are challenging the incumbent tech sector. https://www.bloomberg.com/news/articles/2026-01-18/-no-reasons-to-own-software-stocks-sink-on-fear-of-new-ai-tool [no paywall: https://archive.is/yiriO]

“In his latest court filing, Elon cherry-picks and publishes snippets from Greg Brockman’s private journal entries (obtained as part of legal discovery) which, when read with the surrounding context, tell a very different story from what Elon claims.” https://openai.com/index/the-truth-elon-left-out/

Is METR Underestimating LLM Time Horizons? https://www.lesswrong.com/posts/kNHxuusznCR3rhqkf/is-metr-underestimating-llm-time-horizons

“Software That Debugs Itself While I Sleep” https://tomtunguz.com/implicit-feedback-loops/

Universal agent collaboration to solve reasoning and search intensive problems https://github.com/dust-tt/srchd

“I spent a few hundred dollars on Anthropic API credits and let Claude individually research every current US congressperson's position on AI. This is a summary of my findings.” https://www.lesswrong.com/posts/WLdcvAcoFZv9enR37/what-washington-says-about-agi

Precedents for the Unprecedented: Historical Analogies for Thirteen Artificial Superintelligence Risks https://www.lesswrong.com/posts/kLvhBSwjWD9wjejWn/precedents-for-the-unprecedented-historical-analogies-for-1

When the LLM isn’t the one who’s wrong https://www.lesswrong.com/posts/Cd8tRgpWnKPuNhZ2r/when-the-llm-isn-t-the-one-who-s-wrong

Food for thought: These tools together cost at least $500/month. In other words, we're now at the point where the total intelligence of a person or company depends on how much they can afford to spend.

Of course, no one, however rich, will be able to beat the AI labs, since internal SOTA is far superior to commercial SOTA. The labs are obviously keeping the best tools for themselves because those tools are strategically important. Even commercially available SOTA AI is guarded against competitors (Anthropic recently cut off xAI’s access to Claude Code).

(Artificial) Cognition

Physics of foam strangely resembles AI training https://phys.org/news/2026-01-physics-foam-strangely-resembles-ai.html

Deep Learning as Program Synthesis — This reframes the central mystery of AI from “Why does deep learning work?” to “How does gradient descent manage to find structured, algorithmic solutions in a continuous parameter space?” https://www.lesswrong.com/posts/Dw8mskAvBX37MxvXo/deep-learning-as-program-synthesis-1

Cognition spaces: natural, artificial, and hybrid https://arxiv.org/abs/2601.12837

Neuroscience

Merge Labs, a new research lab pursuing long-horizon R&D in ultrasound-based neural technology, with $252 million in funding from OpenAI, Bain Capital, Gabe Newell, and others. https://www.essentialtechnology.blog/p/announcing-merge-labs

New synaptic formation in adolescence challenges conventional views of brain development https://www.eurekalert.org/news-releases/1112080

The 1,000 Neuron Challenge is a competition that explicitly prohibits brute-force approaches to neural models and thus may advance both AI and neuroscience! https://www.thetransmitter.org/computational-neuroscience/the-1000-neuron-challenge/

To flexibly organize thought, the brain makes use of space https://news.mit.edu/2026/to-flexibly-organize-thought-the-brain-makes-use-of-space-0120

Miscellaneous

At extreme pressures and temperatures, water becomes superionic — a solid that behaves partly like a liquid and conducts electricity. https://www.sciencedaily.com/releases/2026/01/260112214308.htm

Scientists demonstrate low-cost, high-quality lenses for super-resolution microscopy https://www.optica.org/about/newsroom/news_releases/2026/scientists_demonstrate_low-cost_high-quality_lenses_for_super-resolution_microscopy/

One should not dismiss “insane-looking problems” just because the proposed explanation sounds impossible. https://www.lesswrong.com/posts/A57YTGLytFT6NaDJY/story-of-the-insane-problem-of-vanilla-ice-cream-causing-the

Irrationality as a Defense Mechanism for Reward-hacking https://www.lesswrong.com/posts/H8uoAmbeqjD2PG2jm/irrationality-as-a-defense-mechanism-for-reward-hacking

A “natural experiment” on the peer-review system, proving that high-status researchers can publish statistically significant nonsense if they simply massage the data enough, even when the historical facts flatly contradict their model. https://statmodeling.stat.columbia.edu/2024/12/07/jpe/

Politics

China and Russia dominate nuclear power push with 90% of new reactors https://asia.nikkei.com/business/energy/china-and-russia-dominate-nuclear-power-push-with-90-of-new-reactors [no paywall: https://archive.is/1u6Zk]

Why Ukraine’s Deadly Drone Operation Runs Like a ‘McDonald’s’ https://www.youtube.com/watch?v=9hzIMI2DLys