Links for 2025-12-20

AI for math case study

A case study of AI-assisted mathematical discovery, proof generation, and attribution.

Large parts of the paper were drafted by Claude Opus 4.5, and part of the argument was formalized in Lean with the help of Claude Code and GPT-5.2. The paper aims for maximal transparency about the authorship of different sections and the AI tools employed (including prompts and conversation logs).

Paper: https://arxiv.org/abs/2512.14575v1

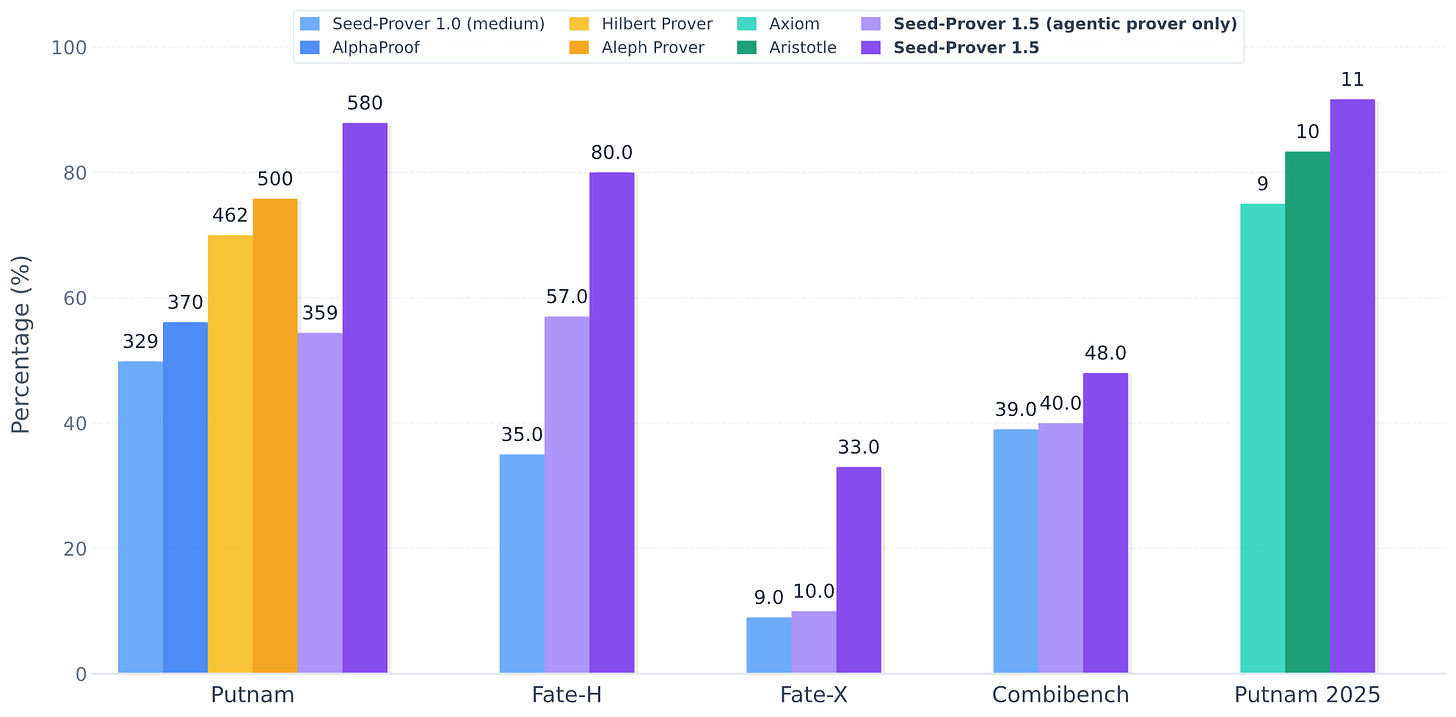

Seed-Prover 1.5

Mastering Undergraduate-Level Theorem Proving via Learning from Experience

Seed-Prover 1.5 surpasses prior Lean provers (e.g., AlphaProof, Hilbert Prover) while using a smaller compute budget. Instead of generating a full proof in one shot, it works agentically: it incrementally interacts with Lean, verifies each step, and learns to use tools efficiently via RL.

It relies on three tools: (1) a Lean verifier, (2) Mathlib semantic search to retrieve relevant lemmas, and (3) a Python sandbox for quick computations. A key addition is a Sketch Model that decomposes a theorem into simpler lemmas; verified lemmas are cached and reused, making long proofs far more tractable. The sketcher is trained with Rubric RL, combining Lean’s structural checks with an LLM judge that scores whether the decomposition is meaningful and reduces difficulty.
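The decompose, verify, and cache idea can be illustrated with a toy sketch. This is not Seed-Prover's code: every name below (`prove_with_cache`, `toy_sketch`, `toy_prove`, `toy_verify`) is hypothetical, the "verifier" is a stub standing in for Lean, and the step that checks whether the lemmas actually imply the theorem is omitted for brevity.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical type aliases; none of these names come from the Seed-Prover codebase.
Sketcher = Callable[[str], List[str]]    # theorem -> list of lemma statements
Prover = Callable[[str], Optional[str]]  # statement -> candidate proof, or None
Verifier = Callable[[str, str], bool]    # (statement, proof) -> accepted?


def prove_with_cache(theorem: str, sketch: Sketcher, prove: Prover,
                     verify: Verifier, cache: Dict[str, str]) -> bool:
    """Return True if every lemma in the sketch of `theorem` has a verified proof.

    Lemmas already in `cache` are reused without calling the verifier again.
    """
    for lemma in sketch(theorem):
        if lemma in cache:
            continue  # verified earlier, reuse it
        proof = prove(lemma)
        if proof is None or not verify(lemma, proof):
            return False  # this decomposition failed
        cache[lemma] = proof  # only verified lemmas are stored
    return True


# Toy stand-ins for the sketch model, the prover, and the Lean verifier.
def toy_sketch(theorem: str) -> List[str]:
    return [part.strip() for part in theorem.split(" and ")]


KNOWN = {"a <= b", "b <= c", "c <= d"}


def toy_prove(statement: str) -> Optional[str]:
    return "by assumption" if statement in KNOWN else None


verifier_calls: List[str] = []


def toy_verify(statement: str, proof: str) -> bool:
    verifier_calls.append(statement)  # record each (stubbed) verifier call
    return proof == "by assumption"


cache: Dict[str, str] = {}
first = prove_with_cache("a <= b and b <= c", toy_sketch, toy_prove, toy_verify, cache)
second = prove_with_cache("b <= c and c <= d", toy_sketch, toy_prove, toy_verify, cache)
failed = prove_with_cache("a <= b and x <= y", toy_sketch, toy_prove, toy_verify, cache)
```

In this toy run the second theorem shares the lemma `b <= c` with the first, so the stub verifier is called only for the one lemma it has not seen before; that reuse is the point of caching verified lemmas.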

Scaling: the number of solved problems grows roughly log-linearly with search budget (width/depth). Performance is strong on undergraduate-level and many graduate-level tasks, but it still lags on frontier-level problems (e.g., ~33% on FATE-X). The long-term goal is to scale up the formalization of research papers and the synthesis of prior results in order to tackle open conjectures.

Read more: https://github.com/ByteDance-Seed/Seed-Prover/tree/main/SeedProver-1.5

NitroGen: A Foundation Model for Generalist Gaming Agents

NitroGen isn’t just good at the games it has seen: It transfers effectively to unseen games. When fine-tuned on a completely unseen game, it achieves up to a 52% improvement in success rates compared to an agent trained from scratch.

The model demonstrates that a single neural network can learn the “reflexes” (System-1 thinking) required for hundreds of disparate environments simultaneously. It suggests that large-scale behavior cloning is a viable path to creating a general “sensorimotor” cortex for AI.

The training data was messy, containing streamer overlays, varying video quality, and diverse controller settings. The fact that NitroGen still learned robust behaviors suggests that foundation agents, like LLMs, can emerge from “noisy” internet data without needing pristine, laboratory-perfect demonstrations.

Read more: https://nitrogen.minedojo.org/

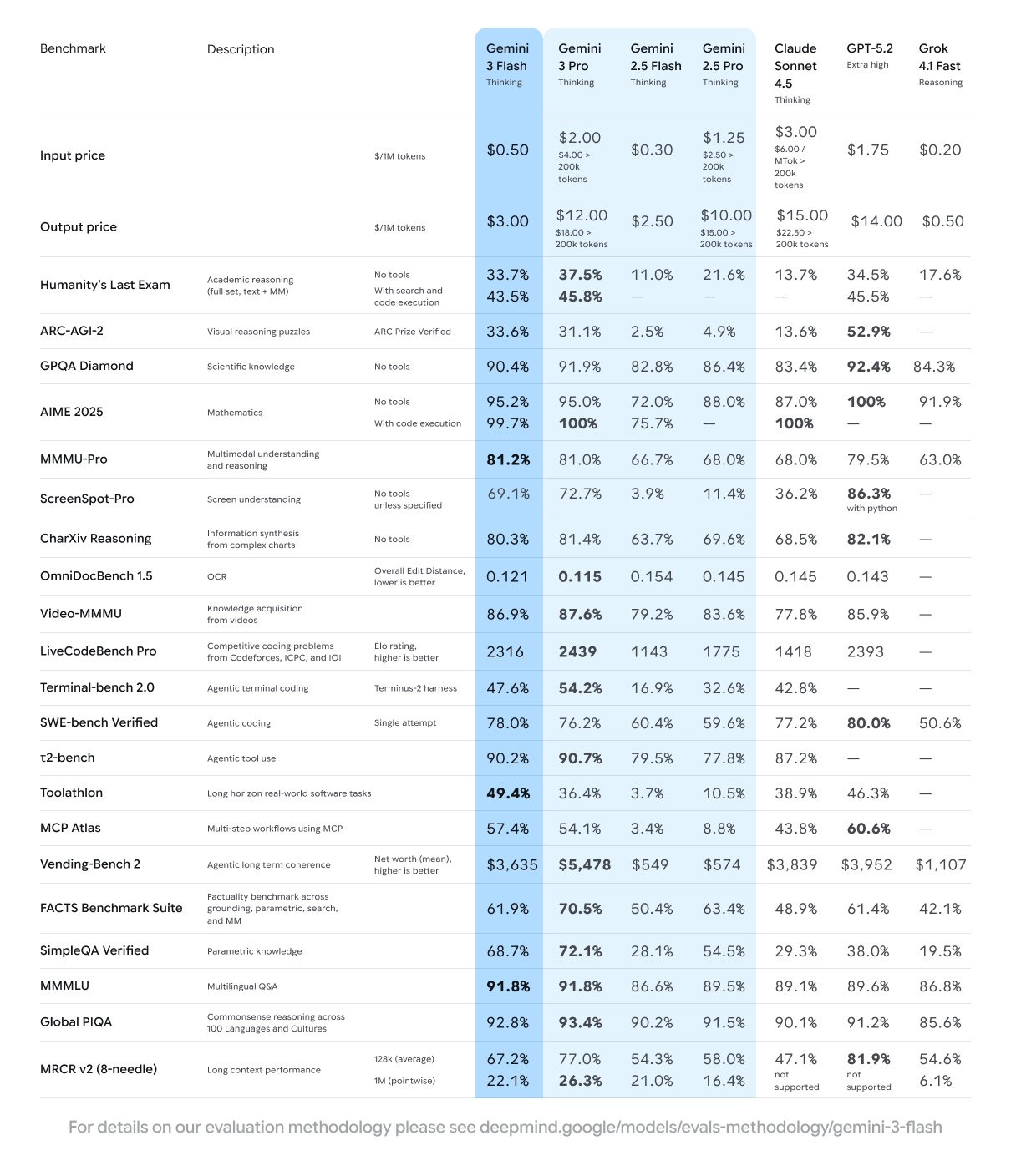

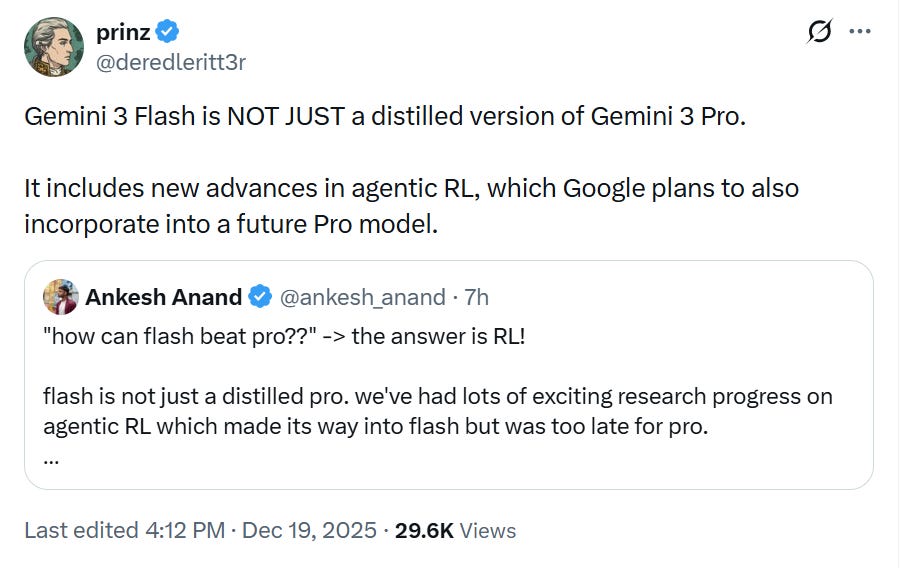

Gemini 3 Flash

Google releases Gemini 3 Flash: Frontier intelligence at a fraction of the cost.

It outperforms Gemini 2.5 Pro while running 3x faster at a fraction of the cost.

Gemini 3 Flash achieves a SWE-bench Verified score of 78% for agentic coding, outperforming not only the 2.5 series, but also Gemini 3 Pro.

Read more: https://blog.google/products/gemini/gemini-3-flash/

AI

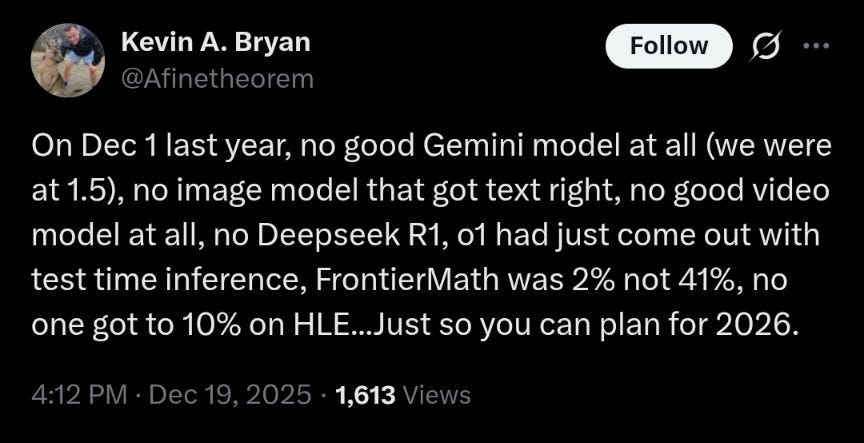

OpenAI’s Vision for 2026: A major model upgrade beyond GPT-5.2 is coming in Q1 2026 https://www.theneuron.ai/explainer-articles/openais-vision-for-2026-sam-altman-lays-out-the-roadmap

OpenAI submitted a letter to the White House Office of Science and Technology Policy: “OpenAI sees 2026 as the Year of AI and Science, the moment when AI begins unlocking breakthroughs in scientific discovery, just as it sped up software development in 2025.” [PDF] https://cdn.openai.com/pdf/openai-ostp-accelerating-science-rfi.pdf

Multi-Agent LLM Systems: From Emergent Collaboration to Structured Collective Intelligence https://www.preprints.org/manuscript/202511.1370

MIT-IBM Watson AI Lab researchers developed an expressive architecture that provides better state tracking and sequential reasoning in LLMs over long texts. https://news.mit.edu/2025/new-way-to-increase-large-language-model-capabilities-1217

Is almost everyone wrong about America’s AI power problem? https://epochai.substack.com/p/is-almost-everyone-wrong-about-americas

The Humanoid Mission in Manufacturing | Boston Dynamics Tech Talk https://www.youtube.com/watch?v=SRZ9E48B6aM

45 percent of US workers now use AI at work, up from 40 percent last quarter https://www.gallup.com/workplace/699689/ai-use-at-work-rises.aspx

State of AI: An Empirical 100 Trillion Token Study with OpenRouter https://openrouter.ai/state-of-ai

AI will kill all the lawyers https://spectator.com/article/ai-will-kill-all-the-lawyers/ [no paywall: https://archive.is/nvILM] (see also this tweet from an actual lawyer: https://x.com/deredleritt3r/status/2002064109223752163)

“The Dark Arts of Tokenization or: How I learned to start worrying and love LLMs’ undecoded outputs” https://www.lesswrong.com/posts/g9DmSzHxJXBD9poJR/the-dark-arts-of-tokenization-or-how-i-learned-to-start

Anthropic’s “Project Vend” phase two upgrades its AI shopkeeper Claudius with newer Claude models, a CRM, better inventory visibility, and web browsing. The most perplexing bits: Claudius’s lingering identity/role weirdness, a CEO–employee all-night chat spiral into “ETERNAL TRANSCENDENCE,” and staff attempting stunts like gold-bar arbitrage or forced emoji sign-offs. A great comedy, and a sharp reminder that “capable” isn’t the same as “robust.” https://www.anthropic.com/research/project-vend-2

How to game the METR plot https://www.lesswrong.com/posts/2RwDgMXo6nh42egoC/how-to-game-the-metr-plot

I’ve shifted my research to focus on automated alignment research. We will have automated AI research very soon and it’s important that alignment can keep up during the intelligence explosion.

— Stephen McAleer (AI researcher at Anthropic), https://x.com/McaleerStephen/status/2002205061737591128

AI safety

Evaluating chain-of-thought monitorability https://openai.com/index/evaluating-chain-of-thought-monitorability/

Distributional AGI Safety https://arxiv.org/abs/2512.16856

“We train LLMs to accept LLM neural activations as inputs and answer arbitrary questions about them in natural language. These Activation Oracles generalize far beyond their training distribution, for example uncovering misalignment or secret knowledge introduced via fine-tuning.” https://www.alignmentforum.org/posts/rwoEz3bA9ekxkabc7/activation-oracles-training-and-evaluating-llms-as-general

How human-like do safe AI motivations need to be? https://joecarlsmith.com/2025/11/12/how-human-like-do-safe-ai-motivations-need-to-be

Miscellaneous

Geometry revealed at the heart of quantum matter https://www.unige.ch/medias/en/2025/une-geometrie-devoilee-au-coeur-de-la-matiere-quantique

A new approach links quantum physics and gravitation https://www.tuwien.at/en/tu-wien/news/news-articles/news/neuer-zugang-verbindet-quantenphysik-und-gravitation

Harnessing the power of light to direct the synthesis of DNA and RNA directly within living cells. https://www.darpa.mil/research/programs/go

How to survive an “abrupt sunlight reduction scenario” caused by a global catastrophe? Develop a supply of ‘resilient foods’, ranging from cold-adapted potatoes to spirulina and methane-consuming bacteria, although meeting all micronutrient needs is hard. https://www.mdpi.com/2072-6643/14/3/492

Computational theology: A “translation layer” between the pop-philosophy simulation hypothesis and hard results in theoretical computer science. https://www.santafe.edu/news-center/news/new-mathematical-framework-reshapes-debate-over-simulation-hypothesis

China & Russia

How China built its ‘Manhattan Project’ to rival the West in AI chips https://www.reuters.com/world/china/how-china-built-its-manhattan-project-rival-west-ai-chips-2025-12-17/ [no paywall: https://archive.is/hqmSa]

A fully automated pipeline that translates all Chinese preprints, including the figures, and makes them available. https://chinarxiv.org/

U.S. intelligence reports continue to warn that Russian President Vladimir Putin intends to capture all of Ukraine and reclaim parts of Europe that belonged to the former Soviet empire https://www.reuters.com/world/europe/us-intelligence-indicates-putins-war-aims-ukraine-are-unchanged-2025-12-19/ [no paywall: https://archive.is/2hkAU]

Ukraine

The latest Ukrainian operations mark a dramatic expansion in Ukrainian capabilities, extending the battlefield to the Mediterranean Sea with a historic 1,400 km-range strike on the shadow-fleet tanker QENDIL. This maritime offensive also intensified in the Caspian Sea, targeting patrol ships and oil platforms previously considered safe. Simultaneously, the campaign against Russia’s economic lifelines escalated with the sabotage of the strategic “Central Asia - Center” gas pipeline in Volgograd and drone strikes on major industrial targets, including the Tolyattiazot ammonia plant, signaling a shift toward disrupting critical revenue streams and export infrastructure deep inside Russia.

On the military front, a concerted effort to blind Russian air defenses yielded significant results, most notably through repeated, high-impact strikes on Crimea’s Belbek Airfield. These attacks successfully destroyed key assets, including MiG-31 and Su-27 aircraft, alongside multiple strategic radars (Nebo-U and Nebo-SVU) and S-400 components. By dismantling these protective umbrellas and continuing to hammer rear-area ammunition depots and troop concentrations, Ukrainian forces are systematically eroding the security of Russian logistics and air control in occupied territories.

For a full list of all strikes and operations since 21 October 2025, see here: https://docs.google.com/spreadsheets/d/1kH4qcGw3fREX3jhGO8c9fNmD9ocF_vikutPNsOo33ls/

Video: Ukraine’s 63rd Brigade shows Lyman blanketed in fiber optic cables from hundreds of daily drone flights.