Links for 2025-11-23

Just today I was reading a text from Demis and he was saying that pre-training and post-training are fully intact. And Gemini 3 takes advantage of the scaling laws and received a huge jump in model performance.

— Jensen Huang on the NVIDIA earnings call

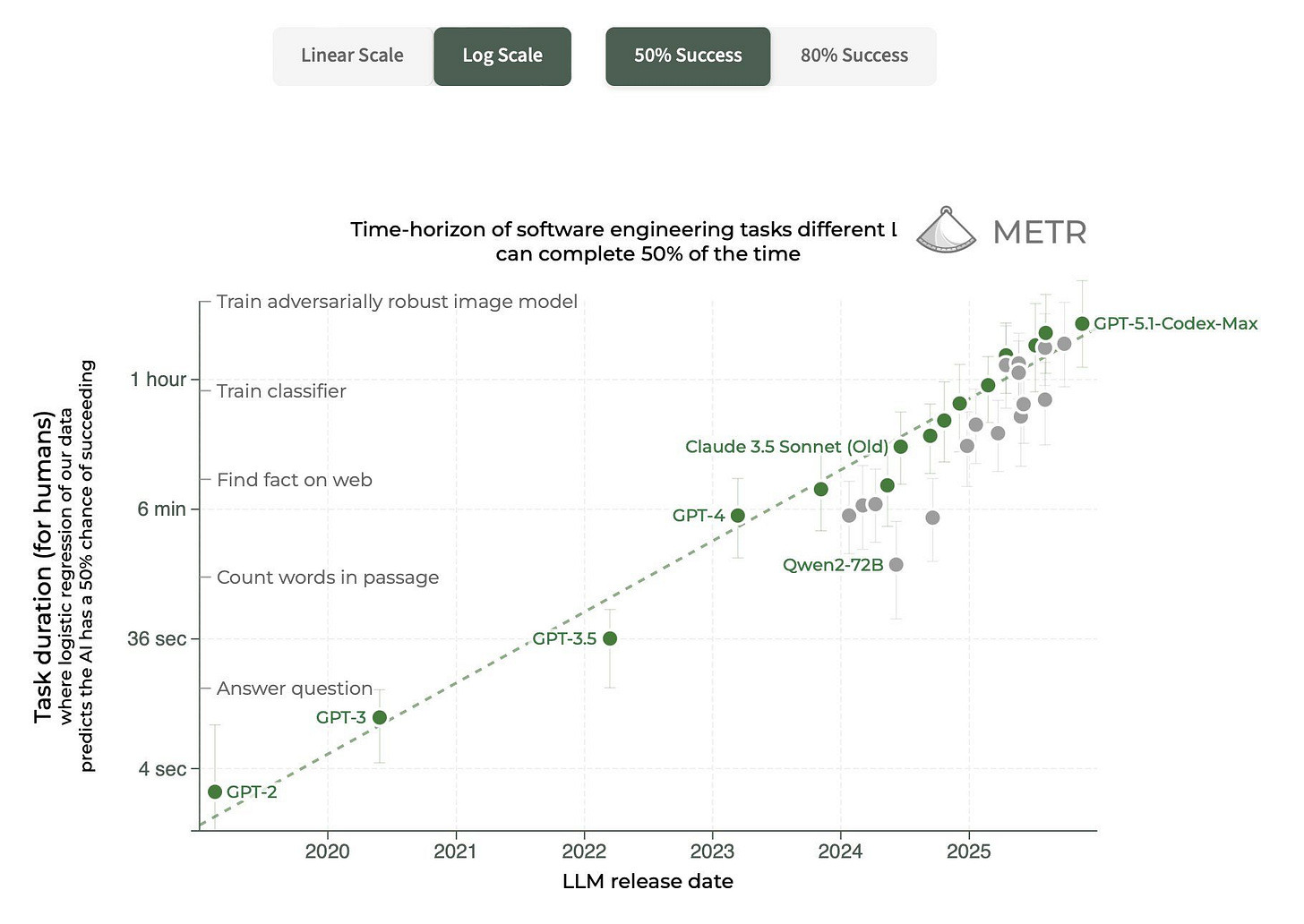

Pretraining hasn’t hit a wall, and neither has test-time compute.

I find it boring when people point out that our methods are expensive. They won’t be very soon! Or, you’ll get exponentially more out of the methods for the same cost.

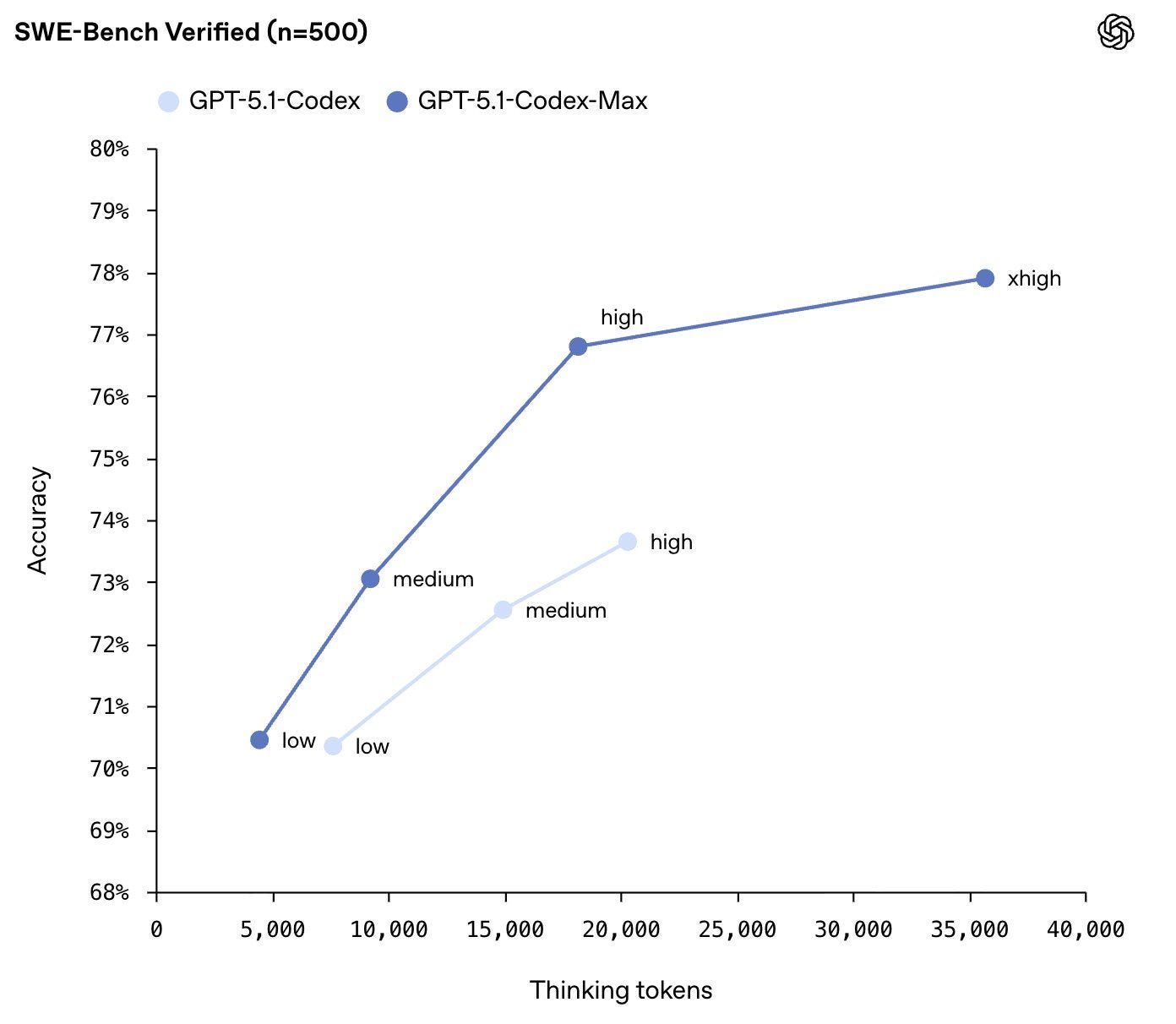

GPT-5.1-Codex-Max

OpenAI has released GPT-5.1-Codex-Max, which can work autonomously for more than a day over millions of tokens.

Read more: https://openai.com/index/gpt-5-1-codex-max/

Big gains on OpenAI-Proof Q&A

It tests real-world ML debugging and diagnosis: Can models identify and explain the root causes of real OpenAI research and engineering bottlenecks using historical code, logs, and experiment data?

Source: https://openai.com/index/gpt-5-1-codex-max-system-card/

Early science acceleration experiments with GPT-5

OpenAI has internal models that can think for “a few hours” at a time.

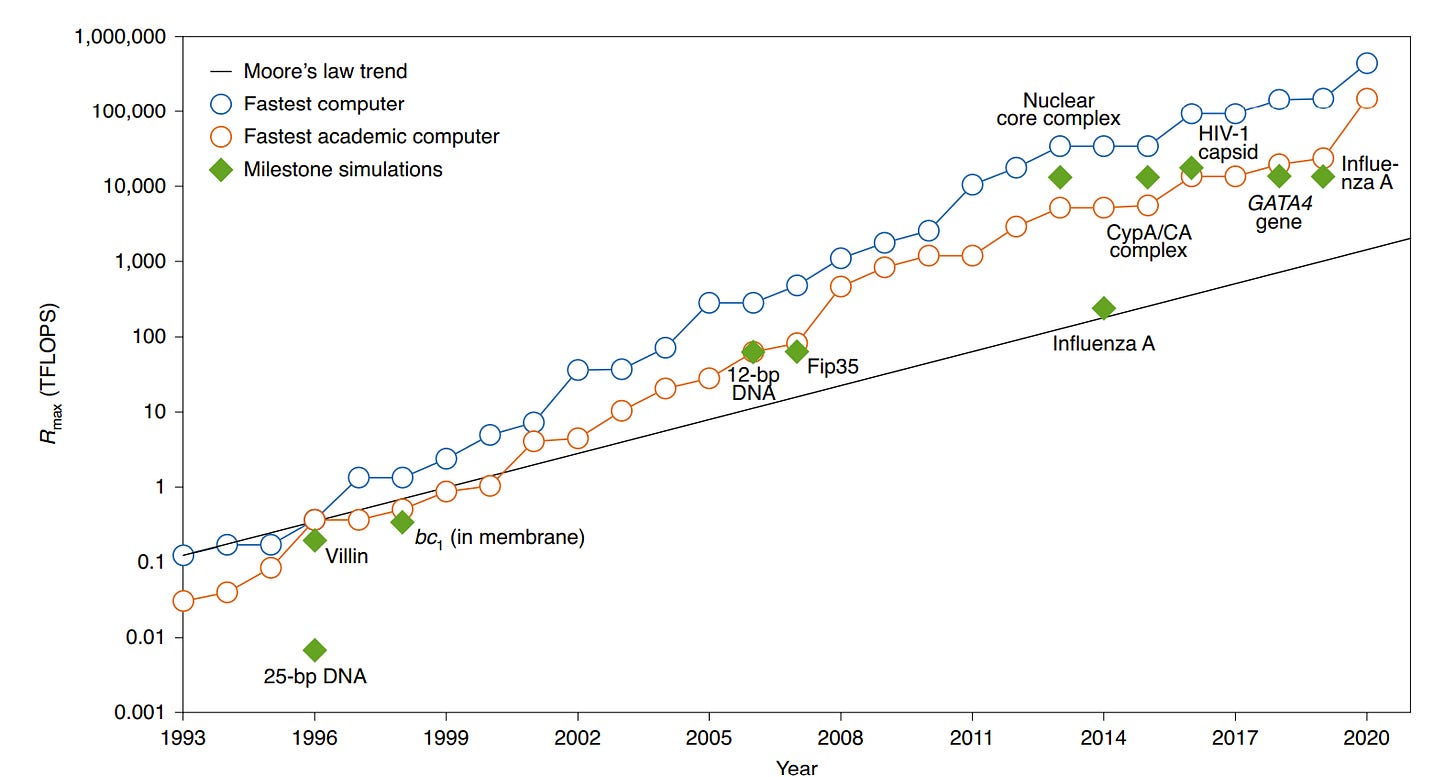

This report presents a collection of case studies demonstrating the capabilities of GPT-5 and GPT-5 Pro as scientific research assistants. The authors—comprising researchers from OpenAI and various academic institutions—document instances where the model accelerated workflows, performed deep literature searches, rediscovered frontier results, and contributed to novel, previously unsolved scientific problems. The paper emphasizes that while human expert oversight remains essential, AI can significantly compress the time required for scientific discovery.

Robotics

ACT-1: A frontier robot foundation model trained on zero robot data. Ultra long-horizon tasks. Zero-shot generalization. Advanced dexterity. https://www.sunday.ai/journal/no-robot-data

Why Humanoids Are the Future of Manufacturing | Boston Dynamics Webinar https://www.youtube.com/watch?v=laexcnaTrDM

AI race cars are catching up to human drivers https://www.semafor.com/article/11/19/2025/ai-race-cars-are-catching-up-to-human-drivers

Google DeepMind Hires Former CTO of Boston Dynamics as the Company Pushes Deeper Into Robotics https://www.wired.com/story/google-hires-cto-boston-dynamics-demis-hassabis-android/

Waymo enters 3 more cities: Minneapolis, New Orleans, and Tampa https://techcrunch.com/2025/11/20/waymo-enters-3-more-cities-minneapolis-new-orleans-and-tampa/

AI

It is said that to explain is to explain away. This maxim is nowhere so well fulfilled as in the area of computer programming, especially in what is called heuristic programming and artificial intelligence. For in those realms machines are made to behave in wondrous ways, often sufficient to dazzle even the most experienced observer. But once a particular program is unmasked, once its inner workings are explained in language sufficiently plain to induce understanding, its magic crumbles away; it stands revealed as a mere collection of procedures, each quite comprehensible. The observer says to himself ‘I could have written that.’ With that thought he moves the program in question from the shelf marked ‘intelligent,’ to that reserved for curios, fit to be discussed only with people less enlightened than he.

—Joseph Weizenbaum, MIT professor and inventor of the first chatbot, ELIZA, 1966

Anthropic finds that reward hacking can lead to broader misalignment (fairly concerning and dangerous, in particular a big gap between thinking tokens and final output). When they *give the model permission to reward hack*, this *prevents* the other misaligned behavior. https://www.anthropic.com/research/emergent-misalignment-reward-hacking

Evolution Guided General Optimization via Low-rank Learning. Scaling backprop-free Evolution Strategies (ES) for billion-parameter models at large population sizes. https://eshyperscale.github.io/

What if we could autocomplete DNA based on function? Semantic design of functional de novo genes from a genomic language model https://www.nature.com/articles/s41586-025-09749-7

JAM-2 — the first AI model capable of generating drug-quality antibodies straight from the computer, with industry-leading success rates. [PDF] https://nabla-public.s3.us-east-1.amazonaws.com/2025_Nabla_JAM2.pdf

Locus: the first AI system to outperform human experts at AI R&D https://www.intology.ai/blog/previewing-locus

Nano Banana Pro: A REASONING image model https://deepmind.google/models/gemini-image/pro/

SAM 3, a unified model that enables detection, segmentation, and tracking of objects across images and videos. SAM 3 introduces some of our most highly requested features like text and exemplar prompts to segment all objects of a target category. https://ai.meta.com/blog/segment-anything-model-3/

SAM 3D: Powerful 3D Reconstruction for Physical World Images https://ai.meta.com/blog/sam-3d/

Walrus: A Cross-domain Foundation Model for Continuum Dynamics https://polymathic-ai.org/blog/walrus/

China’s public is much more trusting of AI than the Western world: https://www.edelman.com/sites/g/files/aatuss191/files/2025-11/2025%20Edelman%20Trust%20Barometer%20Flash%20Poll%20Trust%20and%20Artificial%20Intelligence%20at%20a%20Crossroads%201.pdf

LLMs Position Themselves as More Rational Than Humans: Emergence of AI Self-Awareness Measured Through Game Theory https://arxiv.org/abs/2511.00926

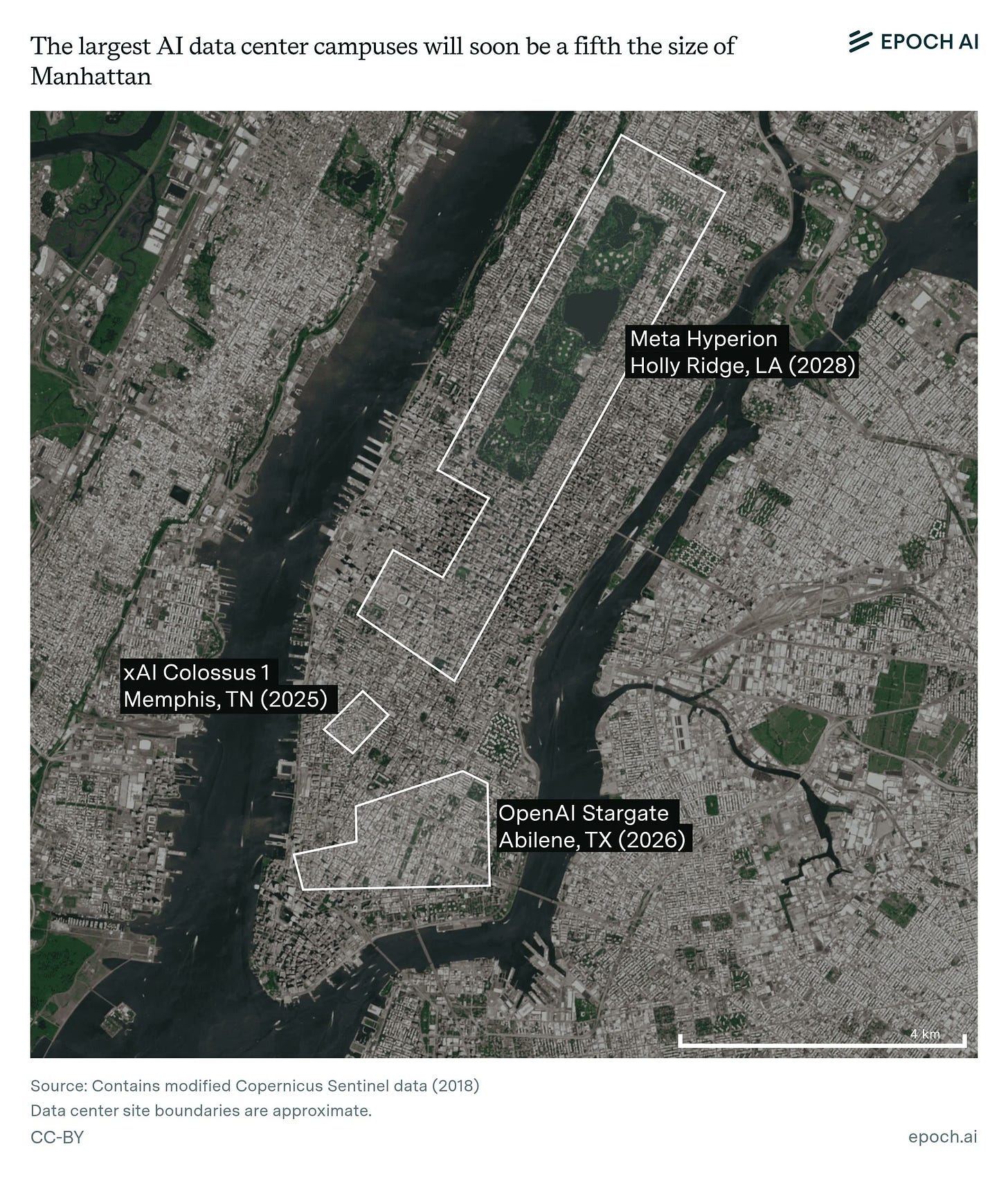

Google must double AI serving capacity every 6 months to meet demand, AI infrastructure boss tells employees https://www.cnbc.com/2025/11/21/google-must-double-ai-serving-capacity-every-6-months-to-meet-demand.html
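To get a feel for what that doubling rate compounds to, here is a minimal arithmetic sketch. The function name `capacity_multiplier` and the chosen time horizons are illustrative assumptions, not figures from the article; only the six-month doubling period comes from the headline.

```python
def capacity_multiplier(years: float, doubling_months: float = 6.0) -> float:
    """Growth factor after `years` if capacity doubles every `doubling_months`."""
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings


if __name__ == "__main__":
    # Horizons picked only for illustration.
    for years in (1, 2, 5):
        print(f"{years} year(s): {capacity_multiplier(years):,.0f}x")
```

Under this assumption, one year of doubling every six months is a 4x increase, and five years is ten doublings, or roughly a thousandfold.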

“AI in the Cancer Journey: How I’m Using AI to Help My Son” https://www.youtube.com/watch?v=Jr3lxRAc-dY

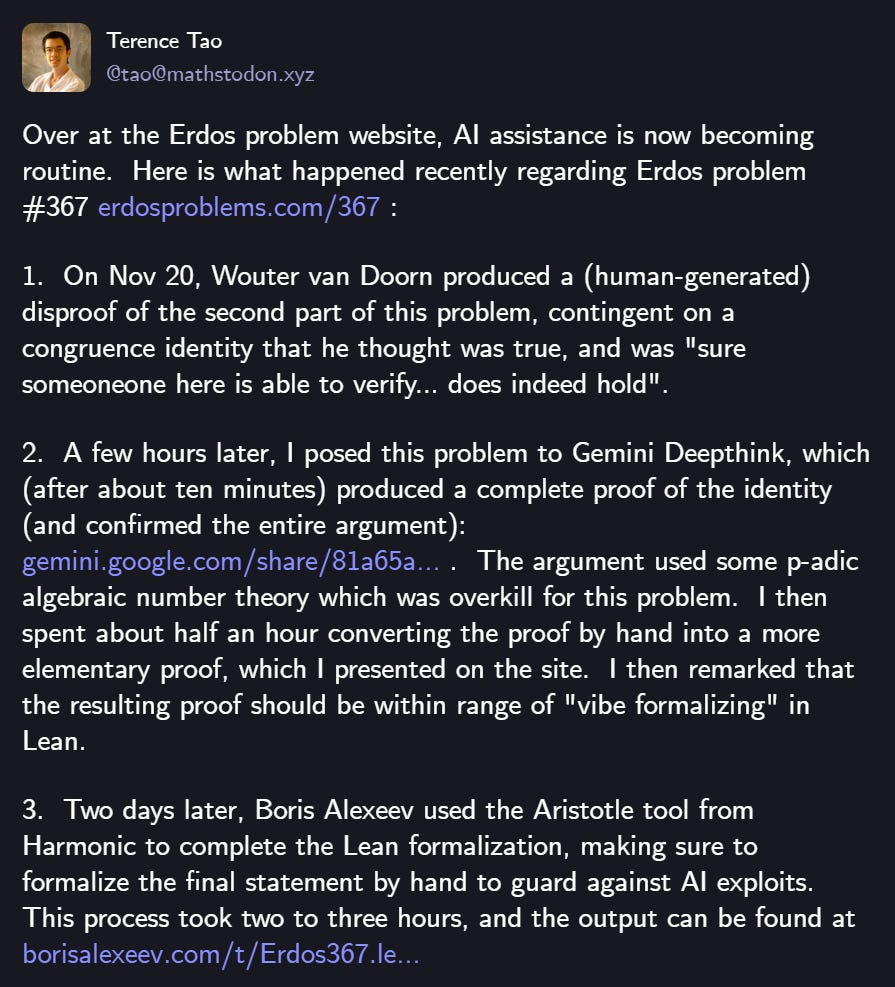

AI & Math

See also: Examples for the use of AI and especially LLMs in notable mathematical developments https://gilkalai.wordpress.com/2025/11/21/ten-recent-questions-for-chatgpt/

Neuroscience

Looking at cognition through the lens of metabolic costs https://www.cell.com/trends/cognitive-sciences/fulltext/S1364-6613(24)00319-X

The cost of thinking is similar between large reasoning models and humans. Scientists at MIT’s McGovern Institute for Brain Research have found that the kinds of problems that require the most processing from reasoning models are the very same problems that people need to take their time with. https://news.mit.edu/2025/cost-of-thinking-1119

Adaptive stretching of representations across brain regions and deep learning model layers https://www.nature.com/articles/s41467-025-65231-y

Technology

Startup Zap Energy Just Set a Fusion Power Record With Its Latest Reactor https://singularityhub.com/2025/11/20/startup-zap-energy-just-set-a-fusion-power-record-with-its-latest-reactor/

Retinal Implant Restores Central Vision in Patients with Age-Related Macular Degeneration https://www.medschool.pitt.edu/news/retinal-implant-restores-central-vision-patients-age-related-macular-degeneration

Math & Science

Physicists have discovered a paradox where a closed universe without an observer mathematically collapses into a single state with zero complexity. This suggests that an objective “view from nowhere” may be impossible, and that observers are actually fundamental to the existence of a complex universe. https://www.quantamagazine.org/cosmic-paradox-reveals-the-awful-consequence-of-an-observer-free-universe-20251119/

Old ‘Ghost’ Theory of Quantum Gravity Makes a Comeback https://www.quantamagazine.org/old-ghost-theory-of-quantum-gravity-makes-a-comeback-20251117/

While the Pareto Principle suggests diminishing returns, this post argues that returns to effort often increase because high individual intensity allows you to avoid the quadratic coordination costs of scaling a team. Consequently, in many complex domains, one person working 40 hours is significantly more valuable than two people working 20 hours each. https://www.lesswrong.com/posts/swymiotpbYFv9pnEk/increasing-returns-to-marginal-effort-are-common
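The coordination-cost argument can be sketched with a toy model. This is not taken from the linked post: it assumes that every pair of collaborators forms one communication channel, and that each channel burns a fixed number of hours per week. The names `channels` and `effective_hours`, and the 4-hour overhead, are hypothetical.

```python
def channels(people: int) -> int:
    """Pairwise communication channels in a team: n(n-1)/2, quadratic in n."""
    return people * (people - 1) // 2


def effective_hours(people: int, hours_each: float,
                    overhead_per_channel: float = 4.0) -> float:
    """Total hours worked minus hours lost to coordination (hypothetical model)."""
    total = people * hours_each
    lost = overhead_per_channel * channels(people)
    return max(0.0, total - lost)


if __name__ == "__main__":
    # Same 40 total hours, split across teams of different sizes.
    for people in (1, 2, 4):
        hours_each = 40 / people
        print(people, "people:", effective_hours(people, hours_each), "effective hours")
```

With these made-up numbers, one person keeps all 40 hours, two people keep 36, and four people keep only 16, because the number of channels grows quadratically while the total hours stay fixed.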

A deep, fundamental link between the nature of computation (algorithms) and definability (mathematical logical structure), unifying the finite and the infinite. https://www.quantamagazine.org/a-new-bridge-links-the-strange-math-of-infinity-to-computer-science-20251121/

The Mexican–American War

February 24, 2022

A large force of U.S. helicopter-borne Rangers, SEALs, and Delta seize Felipe Ángeles Airport just outside Mexico City to open the door for a massive airlift. They overrun the lightly equipped Mexican garrison, raise U.S. flags over the control tower, secure key buildings.

Result: They are unable to bring in C-17 and C-5 transports and are later isolated and mauled.

The Pentagon launches “Thunder Corridor”: a gigantic American armored column meant to crush resistance and encircle the Mexican capital. The column stretches 60–70 kilometers along Federal Highway 57, the main artery into the capital.

Result: The column turns into a stuck, starving, burning snake of metal that the whole world laughs at.

Late March - Early April 2022

Washington announces the “successful completion of Phase One” and withdraws U.S. forces from central Mexico to “focus on liberating the north.” Troops pull back from the outskirts of Mexico City and abandon the Felipe Ángeles bridgehead.

Result: In reality, the U.S. has failed to take the capital and been forced into a chaotic retreat, leaving wrecked armor and atrocities behind.

April 2022 - Sinking of the Flagship

Mexican anti-ship missiles and drones hit the USS San Jacinto, a Ticonderoga-class cruiser serving as flagship of the U.S. Fourth Fleet, in the Gulf of Mexico. The ship later sinks while under tow.

Result: The first loss of a major U.S. warship in generations shatters the aura of U.S. naval invincibility and forces the fleet to pull back out of Mexican missile range.

September 2022 - Northern Counteroffensive

Mexican forces launch a surprise offensive against thinly held U.S. lines in the north. They smash through along the Monterrey–Reynosa corridor, recapturing industrial towns and rail junctions the U.S. had already declared “liberated forever,” and forcing a panicked retreat back toward the Texas border, leaving behind depots, ammo, and vehicles.

Result: The “permanently liberated” northern belt collapses in days, exposing U.S. forces as overstretched, under-supplied, and unable to hold what they seized.

The “Special Manpower Adjustment”: The White House announces a mobilization of 300,000 reservists. Immediately, huge queues form at the Canadian border crossings in Buffalo and Detroit. Flights to Europe sell out instantly as hundreds of thousands of IT professionals, engineers, and young men flee the country to avoid being sent to the “meat grinder” in Monterrey.

November 2022 - Liberation of Veracruz

For months, the U.S. clings to a coastal bridgehead around Veracruz, supplied by a few vulnerable highways and the port. Mexican rocket artillery and drones systematically destroy bridges, ammo dumps, and ferries, making the enclave untenable. The Pentagon orders a hasty evacuation by sea; Mexican troops walk back into Veracruz to cheering crowds and burned-out U.S. armor.

Result: The first major Mexican city taken by the U.S. is lost again, turning a supposed strategic prize into a symbol of defeat and calling the entire invasion plan into question.

The Red Lines: After the loss of Veracruz, the White House Press Secretary warns that “if Mexican troops cross the Rio Grande, Washington reserves the right to use tactical nuclear weapons to defend the territorial integrity of the Homeland.”

June 2023 - The Contractor Mutiny

The head of the biggest U.S. private military company fighting in Mexico turns on the Pentagon, accusing generals of corruption and sabotaging his men. His armored columns roll into San Antonio, briefly seizing the regional headquarters for the war, then race up the interstate toward Washington, D.C., shooting down U.S. helicopters on the way.

Result: The world watches live as a U.S. warlord drives on the capital; the mutiny is only stopped by a last-minute deal, and the contractor’s jet mysteriously falls out of the sky weeks later.

2024 - Texas Incursion

Mexican troops cross the border and punch deep into southern Texas, seizing several small towns and highway junctions. A Mexican TV crew does live reporting from in front of a U.S. post office, joking about “liberated America.” Exhausted, understrength U.S. units fail to push them out for weeks, and Washington quietly flies in a Saudi mechanized brigade to help clear the pocket house by house.

Result: For the first time in modern history, foreign troops fight on U.S. soil to dislodge an invading army, and it isn’t even America doing the liberating.

Late 2025 - The Battle for Tula

It has been one year and four months since the United States began its offensive on the small Mexican town of Tula, north of Mexico City, and four weeks since the Chairman of the Joint Chiefs went on TV to boast that the town had been “fully encircled.” Tula still hasn’t fallen.

Meanwhile, Mexican drones and saboteurs hit U.S. infrastructure on a daily basis. Refineries along the Gulf Coast and oil terminals in Houston and Corpus Christi keep burning, pipelines are blown up, and billions in damage pile up as the self-proclaimed arsenal of democracy stumbles into a nationwide fuel crisis.

Ukraine

115 confirmed Ukrainian deep strikes in 34 days: https://docs.google.com/spreadsheets/d/1kH4qcGw3fREX3jhGO8c9fNmD9ocF_vikutPNsOo33ls/

This article comes at the perfect time, making me truly wonder at the breathtaking implications of models like GPT-5.1-Codex-Max thinking autonomously for days and accelerating scientific discovery.