Links for 2025-08-11

AI

Neuroscience study provides yet more evidence that AI systems and human brains converge on similar ways of representing the world https://www.nature.com/articles/s42256-025-01072-0

Diffusion Language Models are Super Data Learners https://jinjieni.notion.site/Diffusion-Language-Models-are-Super-Data-Learners-239d8f03a866800ab196e49928c019ac

Self-Questioning Language Models (SQLM): an asymmetric self-play framework where a proposer is given the topic and generates a question for a solver, who tries to answer it. https://arxiv.org/abs/2508.03682
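The proposer/solver loop described above can be sketched as a toy. This is not the paper's training code. `propose` and `solve` are hypothetical stand-ins for model calls. The reward rules are assumptions chosen for illustration. Solver samples are scored by agreement with their own majority answer, so no ground truth is needed. The proposer is rewarded only when agreement is partial, meaning the question is neither trivially easy nor hopeless.

```python
from collections import Counter
from typing import Callable, List, Tuple


def majority_vote_rewards(answers: List[str]) -> Tuple[str, List[float]]:
    """Solver side (assumed rule): treat the most common answer as a pseudo-label
    and reward each sample that matches it."""
    majority, _ = Counter(answers).most_common(1)[0]
    return majority, [1.0 if a == majority else 0.0 for a in answers]


def proposer_reward(solver_rewards: List[float]) -> float:
    """Proposer side (assumed rule): reward questions of intermediate difficulty.
    Too easy = every sample agrees; too hard = no two samples agree."""
    agree = int(sum(solver_rewards))
    n = len(solver_rewards)
    return 1.0 if 1 < agree < n else 0.0


def self_play_step(
    topic: str,
    propose: Callable[[str], str],  # hypothetical proposer model call
    solve: Callable[[str], str],    # hypothetical solver model call
    n_samples: int = 4,
) -> Tuple[str, List[float], float]:
    """One asymmetric self-play round: the proposer sees only the topic,
    and the solver answers the generated question several times."""
    question = propose(topic)
    answers = [solve(question) for _ in range(n_samples)]
    _, solver_rs = majority_vote_rewards(answers)
    return question, solver_rs, proposer_reward(solver_rs)
```

In a real setup both rewards would feed an RL update of the proposer and solver policies. The toy only shows how the two roles get asymmetric signals from the same set of answers.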

Self-Improving Model Steering https://arxiv.org/abs/2507.08967

MathSmith: Towards Extremely Hard Mathematical Reasoning by Forging Synthetic Problems with a Reinforced Policy https://arxiv.org/abs/2508.05592

Microsoft debuted CLIO, a framework that enables non-reasoning LLMs to develop their own steerable thought patterns. On Humanity's Last Exam, it boosted GPT-4.1’s accuracy on text-only biomedical questions from 8.55% to 22.37%, beating o3 (high). https://www.microsoft.com/en-us/research/blog/self-adaptive-reasoning-for-science/

Lean + LLM for verified math reasoning. https://leandojo.org/

ELDER: Enhancing Lifelong Model Editing with Mixture-of-LoRA https://github.com/JiaangL/ELDER

Facebook uses RL to improve its LLM ad machine: "In a large-scale 10-week A/B test on Facebook spanning nearly 35,000 advertisers and 640,000 ad variations, we find that AdLlama improves click-through rates by 6.7% (p=0.0296) compared to a supervised imitation model trained on curated ads," Facebook writes. https://arxiv.org/abs/2507.21983

“Having personally evaluated LLMs on IMO problems several times over the last 3 years, it is astounding to me how far they’ve come. I remember manually going through Gemini and GPT-4’s solutions to every recent numerical solution IMO problem in mid 2023, and finding only one problem on which it deserved partial points (the rest being zeroes). A gold medal with 5/6 just 2 years later represents an insane speed of progress.” https://rishimehta.xyz/2025/08/09/imo-2025-results.html

How Does A Blind Model See The Earth? https://outsidetext.substack.com/p/how-does-a-blind-model-see-the-earth

Only 7% of ChatGPT Plus subscription users were using the o1/3/4 reasoning models https://x.com/sama/status/1954603417252532479

A new introduction to AI as an extinction threat https://www.lesswrong.com/posts/kgb58RL88YChkkBNf/the-problem

The trajectory of the future could soon get set in stone https://www.lesswrong.com/posts/RTJ48sb4GKYAhpoPx/the-trajectory-of-the-future-could-soon-get-set-in-stone

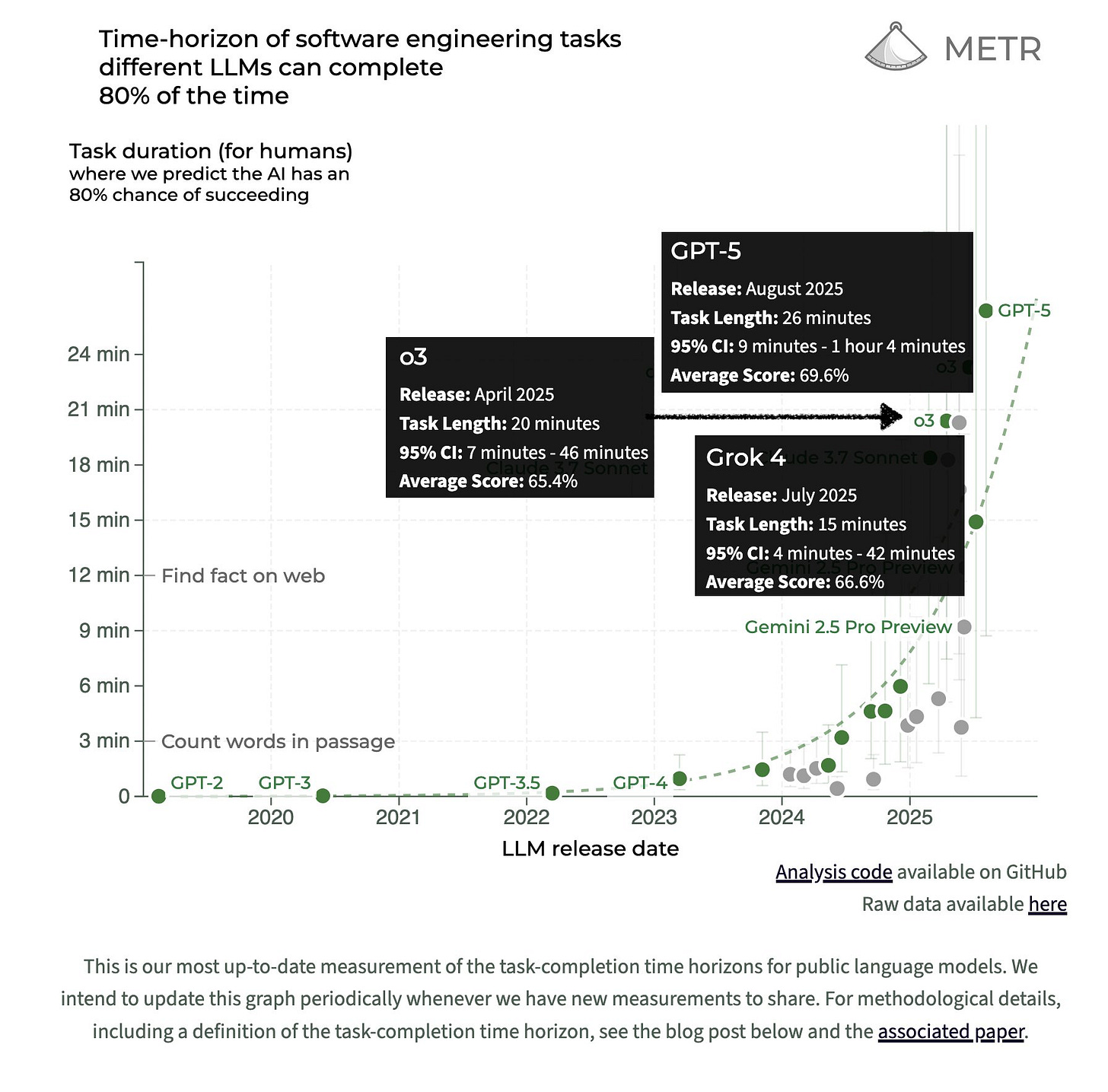

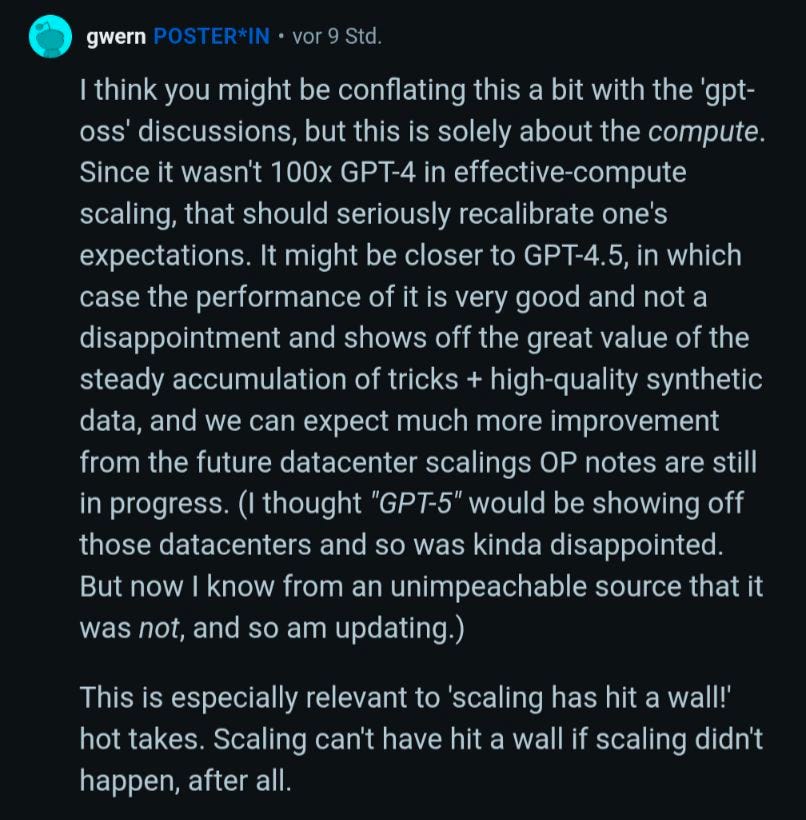

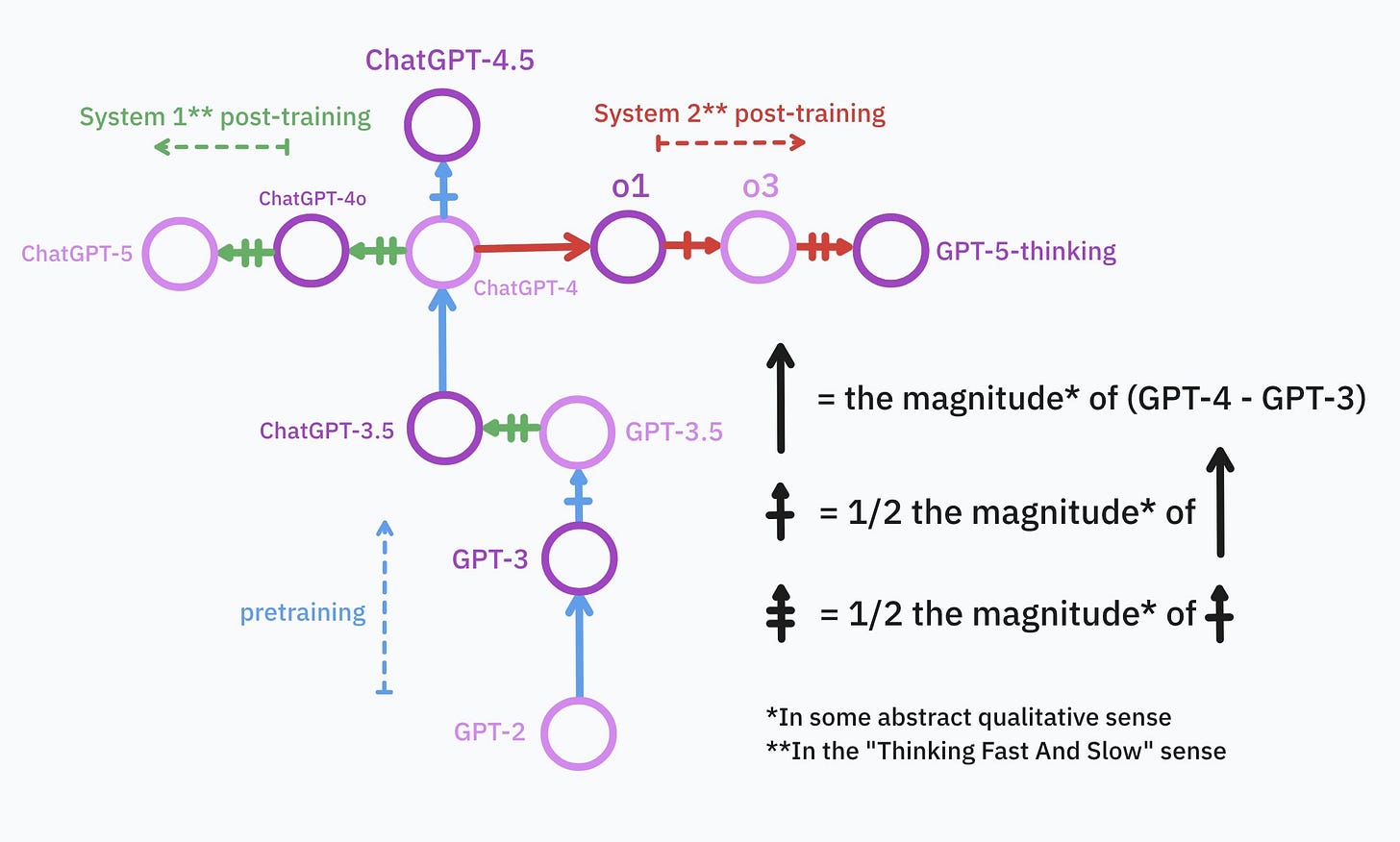

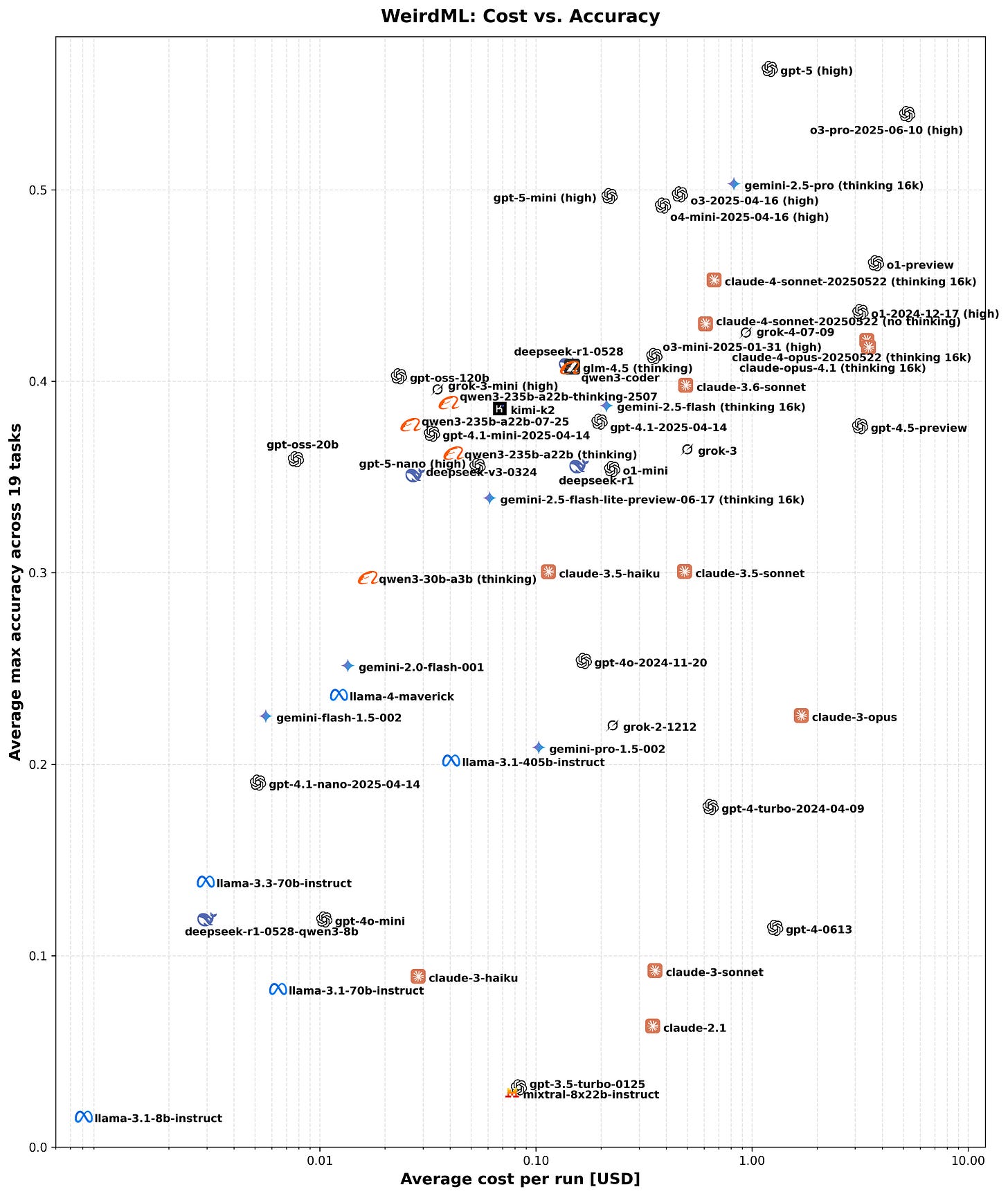

GPT-5 Update

If it is true that GPT-5 used less pretraining compute than GPT-4.5, while GPT-2 -> GPT-3 -> GPT-4 were each ~100x scale-ups, this will cause a lot of people to dramatically underestimate future AI progress. The big data centers haven't been built yet.

See also: GPT-5s Are Alive: Basic Facts, Benchmarks and the Model Card https://www.lesswrong.com/posts/4fLB2uzCcH6dEGnGs/gpt-5s-are-alive-basic-facts-benchmarks-and-the-model-card

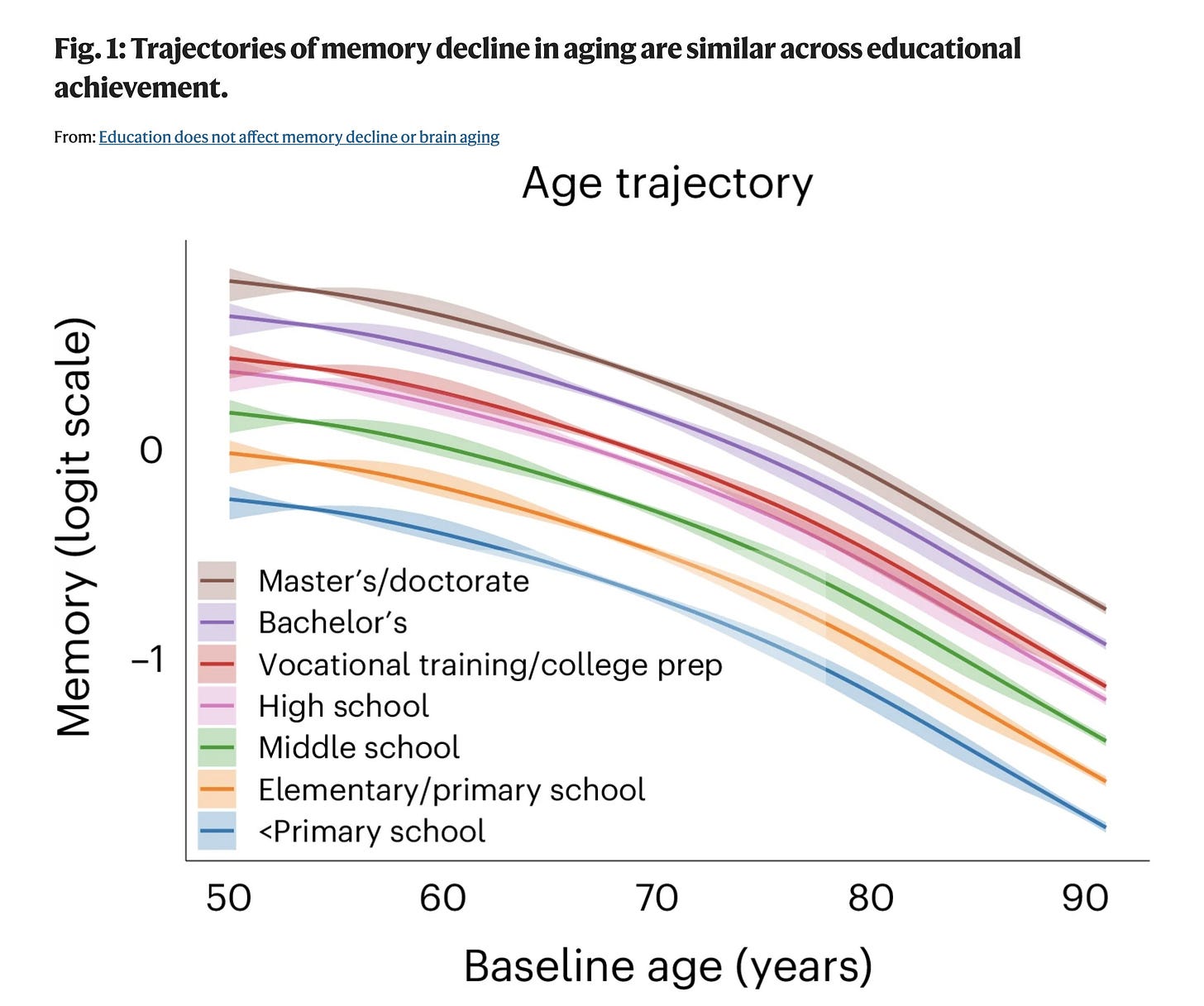

Biology

What is heritability? https://dynomight.net/heritable/

Some Brains Stay Sharp Thanks to a Plaque-Eating Immune Cell That Fights Alzheimer’s https://www.ucsf.edu/news/2025/07/430411/immune-cells-eat-molecular-trash-to-keep-alzheimers-bay

Math

New Method Is the Fastest Way To Find the Best Routes https://www.quantamagazine.org/new-method-is-the-fastest-way-to-find-the-best-routes-20250806/
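The article is about single-source shortest paths. For reference, here is a minimal sketch of the classic baseline, Dijkstra's algorithm with a binary heap. It is not the new method the article describes. The graph format (a dict of adjacency lists with non-negative edge weights) is an assumption made for this sketch.

```python
import heapq
from typing import Dict, List, Tuple

# Assumed format: vertex -> list of (neighbor, non-negative weight)
Graph = Dict[str, List[Tuple[str, float]]]


def dijkstra(graph: Graph, source: str) -> Dict[str, float]:
    """Shortest distances from `source`; unreachable vertices are omitted."""
    dist: Dict[str, float] = {source: 0.0}
    heap: List[Tuple[float, str]] = [(0.0, source)]
    settled = set()
    while heap:
        d, u = heapq.heappop(heap)  # closest unsettled vertex
        if u in settled:
            continue  # stale heap entry left over from an earlier relaxation
        settled.add(u)
        for v, w in graph.get(u, []):
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd  # relax edge u -> v
                heapq.heappush(heap, (nd, v))
    return dist
```

Because vertices leave the heap in increasing order of distance, Dijkstra implicitly sorts them. This ties its running time to the cost of sorting.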

The Coding Theorem — A Link between Complexity and Probability https://www.lesswrong.com/posts/ejWjegoSwn95jhzXB/the-coding-theorem-a-link-between-complexity-and-probability
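Here is the statement itself in one standard form, using prefix complexity. The notation is the usual textbook notation and may differ from the linked post's. Fix a universal prefix-free machine U. Let m_U(x) be the probability that U outputs x when fed random bits, and let K(x) be the length of the shortest program for x. The theorem says the two agree up to an additive constant:

$$
m_U(x) = \sum_{p \,:\, U(p) = x} 2^{-|p|}, \qquad K(x) = -\log_2 m_U(x) + O(1)
$$

One direction is immediate. The shortest program for x alone contributes 2^{-K(x)} to the sum, so m_U(x) ≥ 2^{-K(x)}, i.e. -log₂ m_U(x) ≤ K(x). The substance of the theorem is the reverse inequality up to a constant: any string with high algorithmic probability must also have a short description.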

Technology

3D printing and AI used to slash nuclear reactor component construction time ‘from weeks to days’ — pioneers hail 'new era of nuclear construction' https://www.tomshardware.com/3d-printing/3d-printing-and-ai-used-to-slash-nuclear-reactor-component-construction-time-from-weeks-to-days-pioneers-hail-new-era-of-nuclear-construction

An awesome 3D cross-section view of the Wendelstein 7-X 'Stellarator' at the Max Planck Institute https://youtu.be/51Hji5NfkdA

“Two semiconductors—silicon carbide and gallium nitride—are the rivals in a (quite literally) heated competition to make circuits capable of performing at the highest temperatures.” https://spectrum.ieee.org/high-temperature-transistor

China vs US

The Militarization of Silicon Valley https://www.nytimes.com/2025/08/04/technology/google-meta-openai-military-war.html [no paywall: https://archive.is/Irh0J]

The Financial Times is reporting that NVIDIA has agreed to give 15 per cent of the revenues from H20 chip sales in China directly to the US Government as part of the export licence agreement. AMD will provide the same percentage from MI308 sales. https://www.ft.com/content/cd1a0729-a8ab-41e1-a4d2-8907f4c01cac [no paywall: https://archive.is/MUjhS]

China plots pathway to tech supremacy through brain-computer interfaces https://www.scmp.com/news/china/science/article/3321136/china-plots-pathway-tech-supremacy-through-brain-computer-interfaces

How China Became the World’s Biggest Shipbuilder https://www.construction-physics.com/p/how-china-became-the-worlds-biggest

New paper by Nick Bostrom: AI Creation and the Cosmic Host

There may well exist a normative structure, based on the preferences or concordats of a cosmic host, and which has high relevance to the development of AI.

The paper argues there is likely a “cosmic host”: powerful natural or supernatural agents (e.g., advanced civilizations, simulators, deities) whose preferences shape cosmic-scale norms. This is motivated by the simulation argument and by large/infinite-universe and multiverse hypotheses.

The paper also suggests the cosmic host may actually want us to build superintelligence. A superintelligence would be more capable of understanding and adhering to cosmic norms than humans are, potentially making our region of the cosmos more aligned with the host's preferences. Therefore, the host might favor a timely development of AI, as long as it becomes a good cosmic citizen.

Paper: https://nickbostrom.com/papers/ai-creation-and-the-cosmic-host.pdf

This is a serious version of the following tongue-in-cheek derivation of a God-like coalition via acausal trade by Scott Alexander: https://slatestarcodex.com/2018/04/01/the-hour-i-first-believed/

If you have been involved with rationalists for as long as I have, none of this will be surprising. If you follow the basic premises of rationality to their logical end, things get weird. As muflax once wrote:

I hate this whole rationality thing. If you actually take the basic assumptions of rationality seriously (as in Bayesian inference, complexity theory, algorithmic views of minds), you end up with an utterly insane universe full of mind-controlling superintelligences and impossible moral luck, and not a nice “let’s build an AI so we can fuck catgirls all day” universe. The worst that can happen is not the extinction of humanity or something that mundane – instead, you might piss off a whole pantheon of jealous gods and have to deal with them forever, or you might notice that this has already happened and you are already being computationally pwned, or that any bad state you can imagine exists. Modal fucking realism.