Links for 2023-12-05

“Quadratic attention has been indispensable for information-dense modalities such as language... until now. Announcing Mamba: a new SSM arch. that has linear-time scaling, ultra long context, and most importantly--outperforms Transformers everywhere we've tried.” https://github.com/state-spaces/mamba

“Consumers are increasingly using language models to effectively challenge unfair practices, particularly in the financial sector. These tools can empower individuals, providing them with the necessary knowledge and skills to address grievances that they might otherwise struggle to articulate. The study here finds that LLM usage is positively correlated with the likelihood of obtaining desirable outcomes (i.e., offer of relief from financial firms), partly due to the linguistic features improved by LLMs.” https://arxiv.org/abs/2311.16466

Synthetic data is the accelerator of the next phase of AI — what it is and what it means. https://www.interconnects.ai/p/llm-synthetic-data

“Who is leading in AI? Our new article compares leading AI companies on a range of research metrics. Main findings: Google, OpenAI and Meta lead across several metrics; Chinese industry labs lag their U.S. counterparts; and new labs can catch up quickly.” https://epochai.org/blog/who-is-leading-in-ai-an-analysis-of-industry-ai-research

Large Language Models in Robotics: “This collection contains 8 papers which cover an extensive range of topics including robotic applications such as chemistry, robotic control, task planning, anomaly detection and many more. LLMs play a significant role in each one of these articles, crucially contributing to and enabling new approaches that were not possible before.” https://link.springer.com/collections/bejhchcbag

Animate Anyone: Consistent and Controllable Image-to-Video Synthesis for Character Animation https://humanaigc.github.io/animate-anyone/

Visual Anagrams: Generating Multi-View Optical Illusions with Diffusion Models https://dangeng.github.io/visual_anagrams/

Localizing Lying in Llama: Understanding Instructed Dishonesty on True-False Questions Through Prompting, Probing, and Patching https://arxiv.org/abs/2311.15131

Generative AI is fueling the recovery of European SaaS https://techcrunch.com/2023/11/28/generative-ai-is-fueling-the-recovery-of-european-saas/

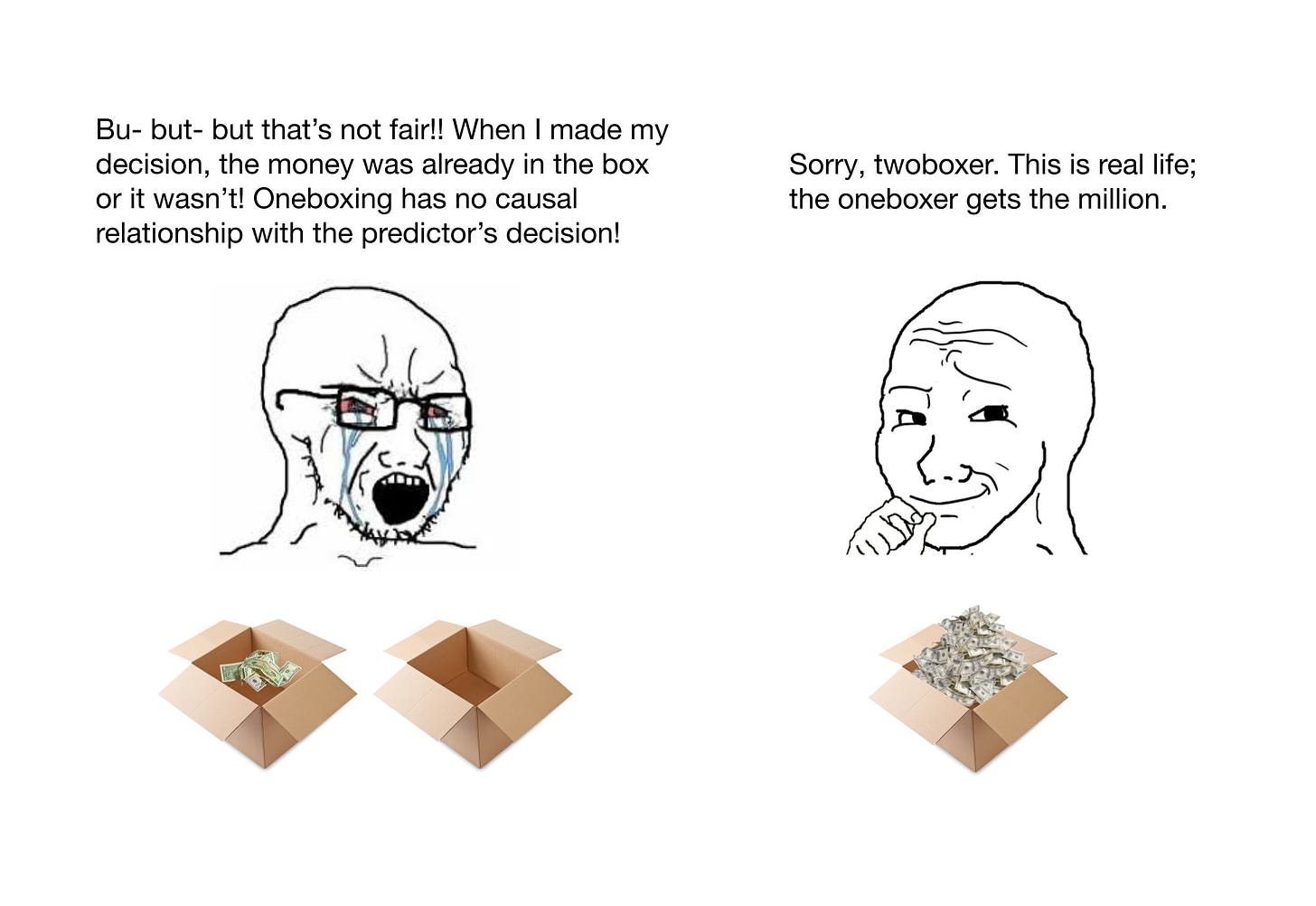

Consider the following version of Newcomb's problem:

The boxes are cups you have to lift to reach the money. There is $1000 under cup A, but the cup is so heavy that only very strong people can lift it. Cup B, however, is so light that even a child could lift it. If Omega predicts that you can lift cup A, then you will find nothing under cup B. Otherwise, Omega will put a million dollars under cup B.

You can lift both cups. The choice is up to you.

Now, you might reason that, since you cannot change the past, the correct way of looking at this is to treat the past as fixed. So why not just lift both cups and get an extra $1000? Well, because either you are too weak to lift cup A or there is nothing under cup B. Remember, the past is fixed.
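To make the case analysis concrete, here is a minimal Python sketch. It assumes Omega is a perfect predictor (its prediction always matches whether you can actually lift cup A) and that you lift every cup you are able to lift. The function names `payoff` and `consistent_worlds` are made up for this illustration.

```python
from itertools import product

CUP_A = 1_000        # money under the heavy cup
CUP_B = 1_000_000    # money Omega may place under the light cup


def payoff(can_lift_a: bool, predicted_can_lift_a: bool) -> int:
    """Money collected by someone who lifts every cup they are able to lift."""
    # Omega leaves cup B empty if it predicted that you can lift cup A.
    under_b = 0 if predicted_can_lift_a else CUP_B
    # You only reach the money under cup A if you are strong enough.
    from_a = CUP_A if can_lift_a else 0
    return from_a + under_b


def consistent_worlds():
    """Worlds in which the prediction matches the fact (assumption: perfect predictor)."""
    return [
        (can, predicted, payoff(can, predicted))
        for can, predicted in product([True, False], repeat=2)
        if can == predicted
    ]


for can, predicted, money in consistent_worlds():
    print(f"can lift A: {can!s:5}  predicted: {predicted!s:5}  payoff: ${money:,}")
```

Under these assumptions only two worlds remain: the strong person walks away with $1000 and the weak person with a million. The world in which you lift cup A and still find a million under cup B is exactly the one that a correct prediction rules out.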

Your ability to lift cup A is not in any relevant way different from your ability to refrain from taking both boxes in the original formulation of the problem. It's harder to predict, yes, but that's just a technical problem.

People are being misled by the postulation of a choice. But there is no choice and therefore no paradox.

Of course, you can stop going to the gym and become weaker. In the same sense, you can self-modify to take only one box. But that's just an indirect way of not being able to lift cup A or being able to refrain from taking both boxes.

Political links:

“The current shift to a pessimistic narrative around Ukraine following the failure of the counteroffensive reminds me of so many previous seemingly then-infallibly true narratives around the war. A quick thread:” https://twitter.com/NeilPHauer/status/1731699897911345436

“Signs of Western hesitation over support for Ukraine encourage Russian propagandists to speculate on which country might be the next victim.” https://cepa.org/article/give-the-kremlin-an-inch-and-it-will-take-half-of-europe/

“Polish security chief: NATO Eastern Flank states have 3 years to prepare for Russia attack.” https://news.err.ee/1609183456/polish-security-chief-nato-eastern-flank-states-have-3-years-to-prepare-for-russia-attack

“During Ukraine's preparations for the counteroffensive, the US advocated concentrating forces on the advance in the south, but Ukraine insisted on attacks at three different points. The Commander-in-Chief Zaluzhnyi believed that the huge length of the front would be a problem for Russia, The Washington Post writes. According to the publication, US and Ukrainian officials 'sometimes sharply disagreed' regarding the strategy, tactics and timing of the counteroffensive. The US wanted the offensive to begin in mid-April to prevent the Russians from continuing to build up their positions while Ukrainians hesitated, saying they were not ready without additional weapons.” https://www.washingtonpost.com/world/2023/12/04/ukraine-counteroffensive-stalled-russia-war-defenses/ [https://archive.is/Dsak9]

“Finland will start production of artillery ammunition for Ukraine,” Finnish Defense Minister Antti Häkkänen said. “The plans are ready to increase production significantly. The purpose is to support Ukraine even stronger than we already do,” he said. https://www.iltalehti.fi/politiikka/a/13303c4d-9d8f-45e7-8878-0f85754f87d6