Links for 2023-06-12

Mercedes Becomes the First Automaker to Sell Level 3 Self-Driving Vehicles in California — Mercedes (and not the driver) will be legally responsible for any accident that happens. https://www.engadget.com/mercedes-becomes-the-first-automaker-to-sell-level-3-self-driving-vehicles-in-california-103504319.html

Scaling laws for language encoding models in fMRI: “Mirroring scaling results from other contexts, we found that brain prediction performance scales log-linearly with model size...These results suggest that increasing scale in both models and data will yield incredibly effective models of language processing in the brain...” https://www.lesswrong.com/posts/iXbPe9EAxScuimsGh/linkpost-scaling-laws-for-language-encoding-models-in-fmri

Large Language Models Converge on Brain-Like Word Representations https://www.lesswrong.com/posts/2QexGHrqSxcuwyGmf/linkpost-large-language-models-converge-on-brain-like-word

Getting LLMs To Tell The Truth: Improves LLaMA from 33% to 65% on TruthfulQA https://arxiv.org/abs/2306.03341

What are Transformer Models and How do they Work? https://www.youtube.com/watch?v=tsbRdJbJi9U

Summary of Transformers and their evolution https://docs.google.com/presentation/d/1ZXFIhYczos679r70Yu8vV9uO6B1J0ztzeDxbnBxD1S0/mobilepresent?slide=id.g31364026ad_3_2

A new AI stack is emerging, using LLMs as endpoints and vector stores for local data. To answer a query, relevant data is found in the vector store and used to build a prompt for the LLM. https://medium.com/@brian_90925/llms-and-the-emerging-ml-tech-stack-6fa66ee4561a
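
A minimal sketch of that retrieve-then-prompt flow, under loud assumptions: the `embed` function here is a toy word-count stand-in for a real embedding model, `ToyVectorStore` and `build_prompt` are hypothetical names invented for illustration, and the final call to an actual LLM endpoint is left out entirely. It is not taken from the linked article.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding (assumption): lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyVectorStore:
    """Hypothetical in-memory store: keeps each text next to its vector."""

    def __init__(self):
        self.items = []

    def add(self, text):
        self.items.append((text, embed(text)))

    def search(self, query, k=2):
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(query, store, k=2):
    """Put the retrieved local data in front of the question for the LLM."""
    context = "\n".join(f"- {doc}" for doc in store.search(query, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )
```

In use, local documents are added to the store once; each query then calls `build_prompt`, and the resulting string is what would be sent to the LLM.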

Nanoscale robotic ‘hand’ made of DNA could be used to detect viruses [NewScientist] https://archive.is/wip/1SuTE

The abilities of retail store managers explain most of the store-to-store variability in productivity. https://www.nber.org/papers/w31192

Rausch et al. report that the latest generation of college students favours emotional well-being, and is less interested in academic rigor.

But female and male students differ in what they favour. Males tend to value academic freedom, while females value emotional well-being. https://researchers.one/articles/23.03.00001v1

Giving old mice both glycine and N-acetylcysteine (NAC) reverses age-associated cognitive decline. https://www.mdpi.com/2076-3921/12/5/1042

White people tend to avoid Black people on the street in New York City. https://www.nature.com/articles/s41562-023-01589-7

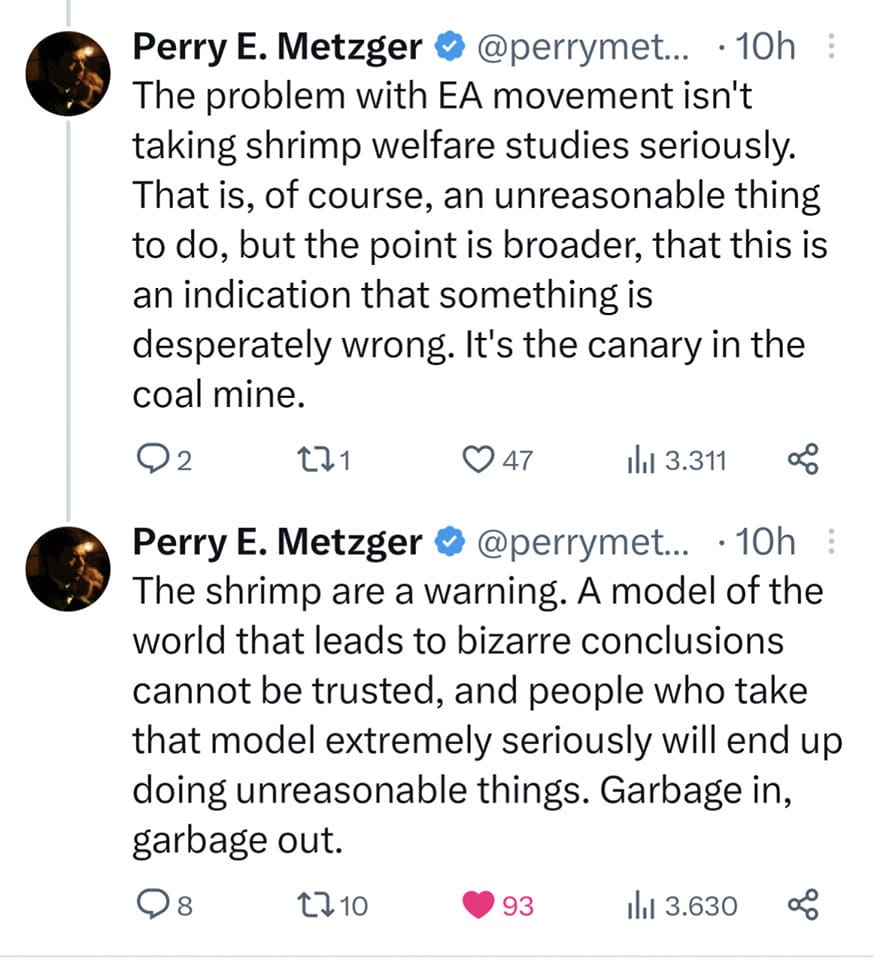

As user:muflax once wrote:

I hate this whole rationality thing. If you actually take the basic assumptions of rationality seriously (as in Bayesian inference, complexity theory, algorithmic views of minds), you end up with an utterly insane universe full of mind-controlling superintelligences and impossible moral luck, and not a nice “let’s build an AI so we can fuck catgirls all day” universe. The worst that can happen is not the extinction of humanity or something that mundane – instead, you might piss off a whole pantheon of jealous gods and have to deal with them forever, or you might notice that this has already happened and you are already being computationally pwned, or that any bad state you can imagine exists. Modal fucking realism.

The problem is that no one can point to a better model of rationality that isn't inconsistent and doesn't lead to absurdity.