Links for 2023-05-05

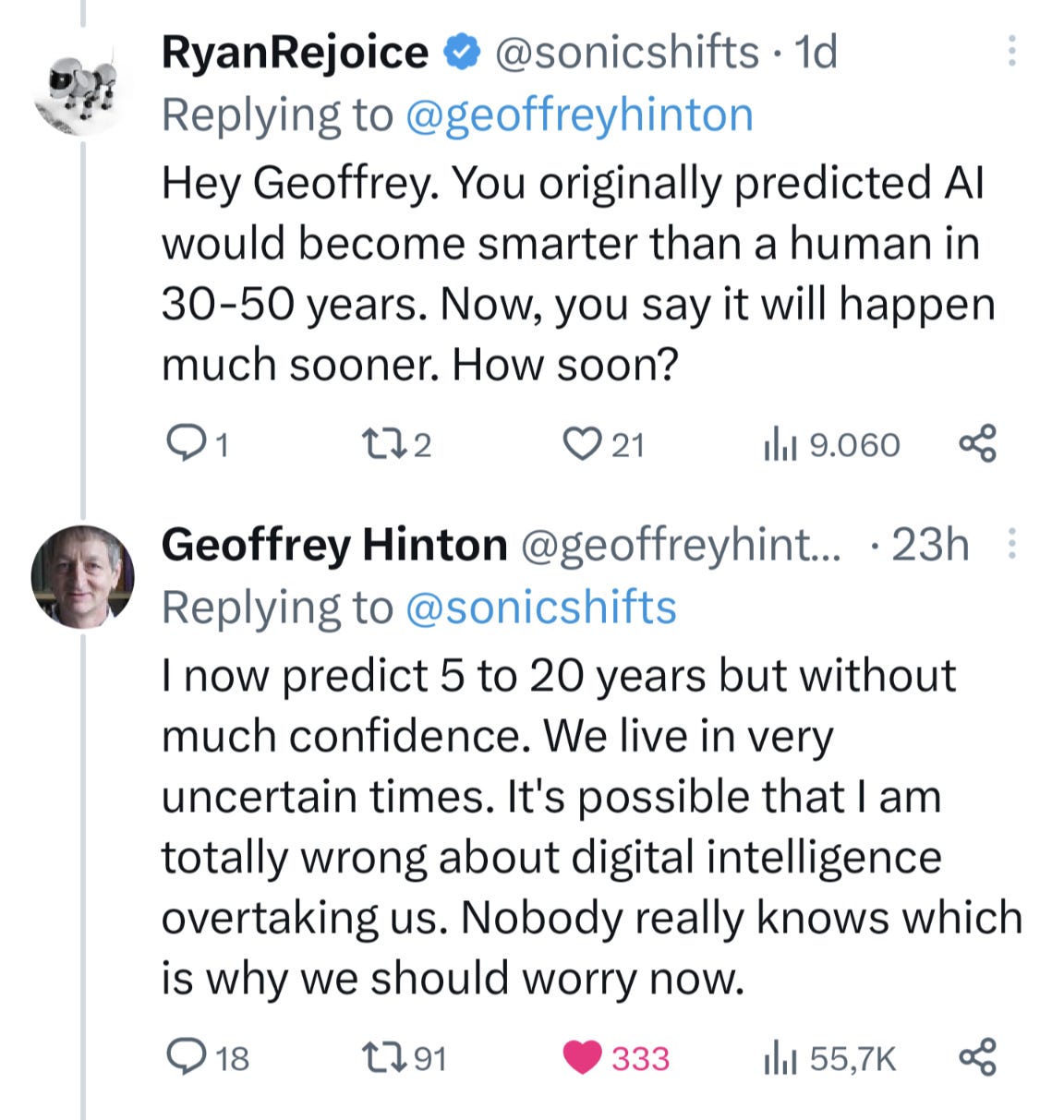

After Demis Hassabis, Geoffrey Hinton also predicts short timelines for human/superhuman AI: https://twitter.com/geoffreyhinton/status/1653687894534504451

A few weeks ago, I would have agreed with them. But existential worries about AI are going mainstream so quickly that self-censorship or government crackdowns now look plausible, and either would make short timelines less likely.

Distilling Step-by-Step! Outperforming Larger Language Models with Less Training Data and Smaller Model Sizes — Their 770M T5 outperforms the 540B PaLM model using only 80% of available data on a benchmark task. https://arxiv.org/abs/2305.02301

"When will I be able to train ~GPT-4 on my home gaming laptop? ...if things continue... probably within the next 6 years, if you're willing to leave it running a few months." https://twitter.com/ohlennart/status/1654111973007585285

“Introducing LLaVA Lightning: Train a lite, multimodal GPT-4 with just $40 in 3 hours!” https://twitter.com/imhaotian/status/1653575387798986756

Leaked Internal Google Document Claims Open Source AI Will Outcompete Google and OpenAI https://www.semianalysis.com/p/google-we-have-no-moat-and-neither

“Self-supervised multimodal ML is promising the next AI breakthrough. CEBRAai: for self-supervised hypothesis- and discovery-driven science.” https://twitter.com/TrackingActions/status/1653867482804019201

A new programming language. It's Python, but with none of Python's problems: “Chris Lattner of LLVM and Swift fame just announced a new programming language for ML that is high-performance and backwards compatible with Python (works with Python libraries). Could be a game changer.” — Amjad Masad https://www.fast.ai/posts/2023-05-03-mojo-launch.html (see also: https://docs.modular.com/mojo/why-mojo.html)

“Samsung is the most important tech company you’ve never thought about. The family dynasty behind Samsung is planning to spend $228 billion on semiconductor development and manufacturing to dethrone TSMC.” https://twitter.com/SamoBurja/status/1651675061802381312

AMD Says AI Is The Number One Priority Right Now https://www.nextplatform.com/2023/05/03/amd-says-ai-is-the-number-one-priority-right-now/

Unlimiformer: Long-Range Transformers with Unlimited Length Input https://arxiv.org/abs/2305.01625

Is Your Code Generated by ChatGPT Really Correct? Rigorous Evaluation of Large Language Models for Code Generation https://arxiv.org/abs/2305.01210

From Words to Code: Harnessing Data for Program Synthesis from Natural Language https://arxiv.org/abs/2305.01598

Accelerating Neural Self-Improvement via Bootstrapping https://arxiv.org/abs/2305.01547

Power-seeking can be probable and predictive for trained agents https://arxiv.org/abs/2304.06528

“Fixing a small bug in my recent study dramatically changes the data, and the new data provides significant evidence that an LLM that gives incorrect answers to previous questions is more likely to produce incorrect answer to future questions.” https://www.lesswrong.com/posts/mfn32QHwKb55afHq4/i-was-wrong-simulator-theory-is-real

DIY Neurotech: Making BCI Accessible. New open-source hardware thrusts brain-signal processing into a maker’s world. https://spectrum.ieee.org/neurotechnology-diy

The link between police staffing and crime rates is extremely clear: "Using data from nearly 7,000 U.S. municipalities, I find that a 10% increase in police employment rates reduces violent crime rates by 13% and property crime rates by 7%." https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2845099

What is a minimal viable set of axioms to believe in? “Induction works” and the Peano axioms? Possibly your own sanity?

(Note: I don’t fact-check everything I post. You have to do that yourself.)