Links for 2023-04-14

UniPi: Learning universal policies via text-guided video generation: https://ai.googleblog.com/2023/04/unipi-learning-universal-policies-via.html

Building models that solve a diverse set of tasks has become a dominant paradigm in the domains of vision and language...A natural next step is to use such tools to construct agents that can complete different decision-making tasks across many environments.

UniPi leverages text for expressing task descriptions and video (i.e., image sequences) as a universal interface for conveying action and observation behavior in different environments. Given an input image frame paired with text describing a current goal (i.e., the next high-level step), UniPi uses a novel video generator (trajectory planner) to generate video with snippets of what an agent’s trajectory should look like to achieve that goal. The generated video is fed into an inverse dynamics model that extracts underlying low-level control actions, which are then executed in simulation or by a real robot agent. We demonstrate that UniPi enables the use of language and video as a universal control interface for generalizing to novel goals and tasks across diverse environments.

UniPi is only one step towards what generative models can bring to decision making. Other examples include using generative foundation models to provide photorealistic or linguistic simulators of the world in which artificial agents can be trained indefinitely.

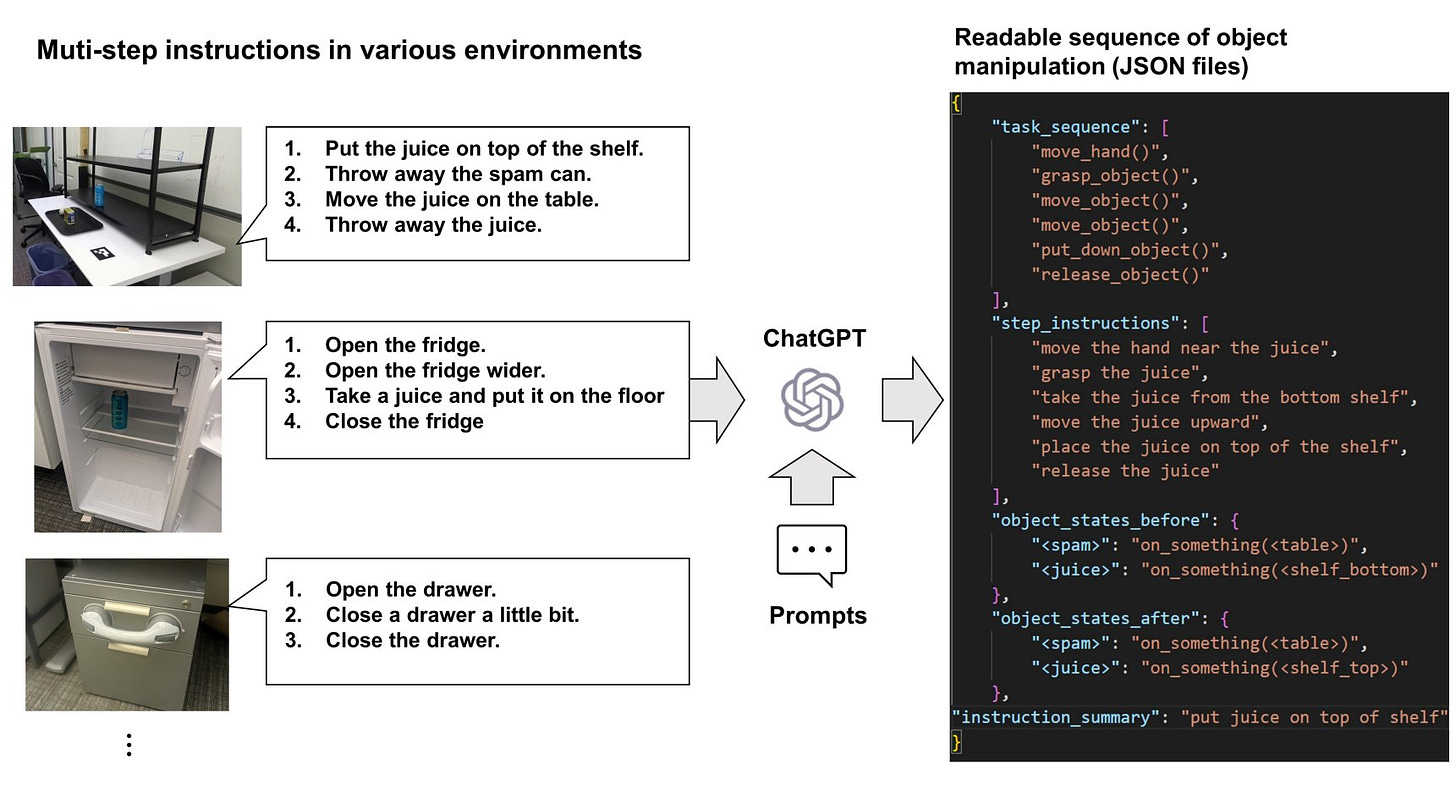

ChatGPT Can Convert Natural Language Instructions Into Executable Robot Actions: https://arxiv.org/abs/2304.03893

Teaching Large Language Models to Self-Debug: “...without any feedback on the code correctness or error messages, the model is able to identify its mistakes by explaining the generated code in natural language. Self-Debugging achieves the state-of-the-art performance on several code generation benchmarks...” https://arxiv.org/abs/2304.05128

Emergent autonomous scientific research capabilities of large language models: “…we present an Intelligent Agent system that combines multiple large language models for autonomous design, planning, and execution of scientific experiments. We showcase the Agent's scientific research capabilities with three distinct examples, with the most complex being the successful performance of catalyzed cross-coupling reactions.” https://arxiv.org/abs/2304.05332

An introduction to zero-knowledge machine learning (ZKML): Zero-knowledge cryptography will allow us to make statements like: “a given piece of content C came out of model M applied to some input X.” https://worldcoin.org/blog/engineering/intro-to-zkml

Keep the Conversation Going: Fixing 162 out of 337 bugs for $0.42 each using ChatGPT https://arxiv.org/abs/2304.00385

"A demo of #BabyAGI. We used it to generate a company's carbon footprint report." https://twitter.com/eucheetan/status/1646353261254037506 (Code: https://python.langchain.com/en/latest/use_cases/agents/baby_agi.html)

On AutoGPT https://www.lesswrong.com/posts/566kBoPi76t8KAkoD/on-autogpt

“AutoGPTs are improving at a blazingly fast speed and could soon transform the face of business.” https://twitter.com/NathanLands/status/1646101184384573446

Scott Aaronson: GPT-4 gets a B on my quantum computing final exam! https://scottaaronson.blog/?p=7209

Open-source AI: LAION proposes to openly replicate GPT-4 – a public call https://www.heise.de/news/Open-source-AI-LAION-proposes-to-openly-replicate-GPT-4-a-public-call-8785603.html

AUDIT: Audio Editing by Following Instructions with Latent Diffusion Models https://audit-demo.github.io/

We must slow down the race to God-like AI [Financial Times] https://archive.is/5XSAZ

1st step towards vision restoration using very-high-resolution remote cerebral activation based on ultrasonic waves & sonogenetics! https://www.nature.com/articles/s41565-023-01359-6

Mind-Controlled Robots: New Graphene Sensors Are Turning Science Fiction Into Reality https://scitechdaily.com/mind-controlled-robots-new-graphene-sensors-are-turning-science-fiction-into-reality/

Meet the Ddog: The World’s First Brain-Controlled Spot Robot Assistant: “Ddog project features Spot robot from Boston Dynamics and a Brain-Computer Interface (BCI) system, powered by AttentivU, a pair of wireless glasses that can measure a person’s Electroencephalography (EEG – brain activity) and Electrooculography (EOG – eye movements) signals.” https://www.youtube.com/watch?v=uBcdnOlYJw8

“All four of the solar system’s gas giants are either known or suspected to have watery moons of their own. There is even some evidence that the same may be true for Pluto, a dwarf planet that orbits in the frigid darkness beyond the orbit of Neptune. Assuming that gas giants in other star systems also have moons (and there is no reason to assume they do not), that drastically raises the number of places in the galaxy in which life could have arisen.” [The Economist] https://archive.is/qAcFb

Ready for launch: the mission to find alien life on Jupiter’s icy moons https://www.theguardian.com/science/2023/apr/09/ready-for-launch-the-mission-to-find-alien-life-on-jupiters-icy-moons

Survey: “How many unarmed Black men were killed by police in 2021?” 40% of “very liberal” respondents: 1,000+; 16% of “very conservative” respondents: 1,000+; actual number: 11 https://www.skeptic.com/research-center/reports/Research-Report-PADS-003.pdf

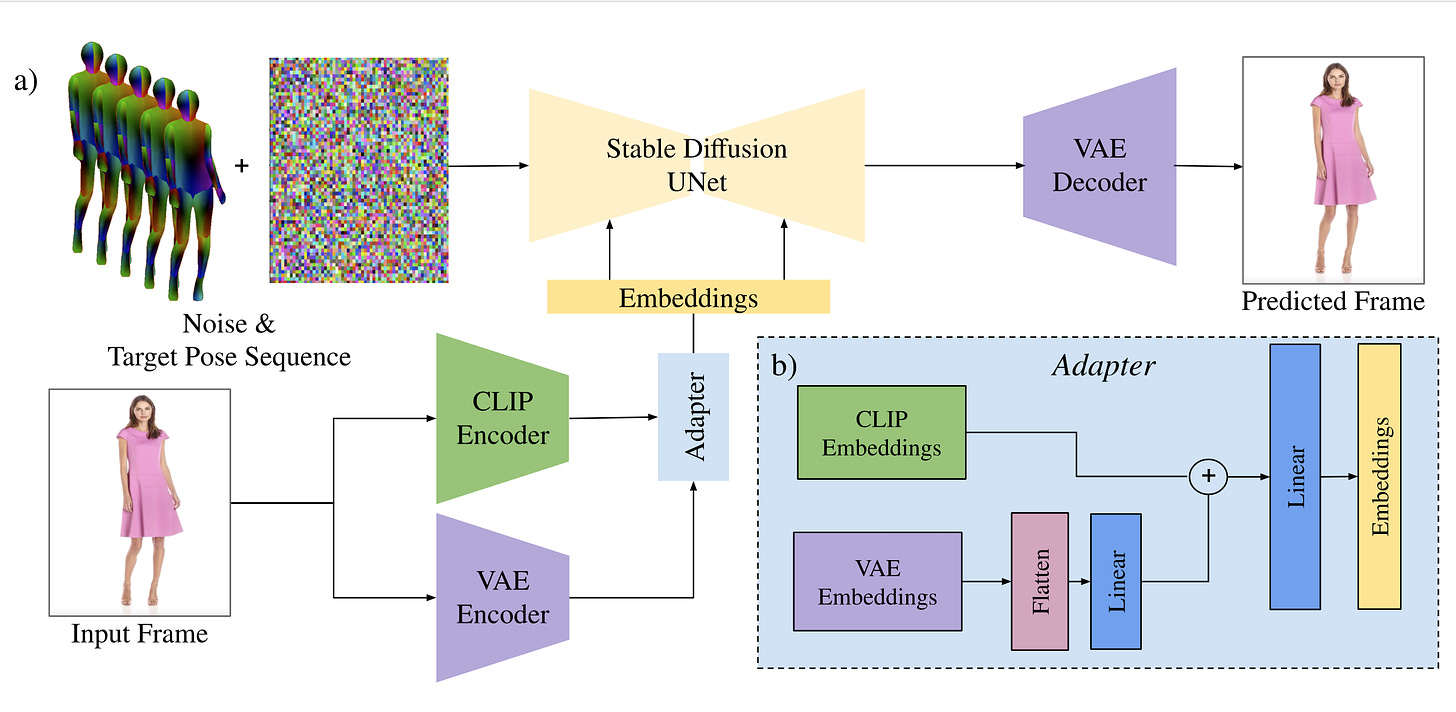

DreamPose: Fashion Image-to-Video Synthesis via Stable Diffusion https://grail.cs.washington.edu/projects/dreampose/

DreamPose is a diffusion-based image-to-video synthesis model. Given an input image of a person and a pose sequence, DreamPose synthesizes a photorealistic video of the input person following the pose sequence.