Links for 2023-04-13

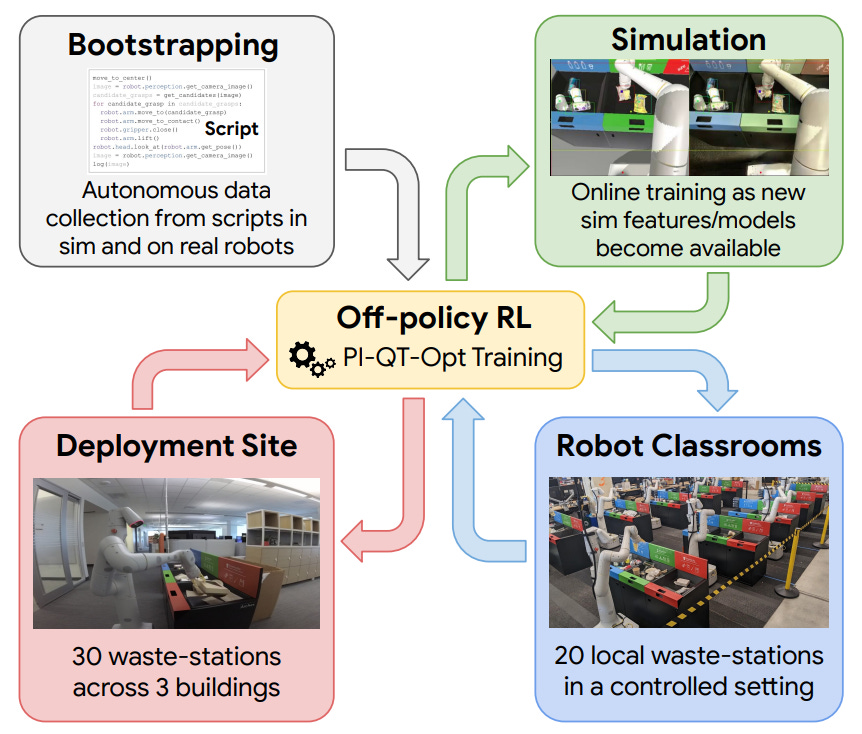

Robots sorting trash end-to-end in real offices: https://rl-at-scale.github.io/

...our system - RL at Scale (RLS) - combines scalable deep RL from real-world data with bootstrapping from training in simulation, and incorporates auxiliary inputs from existing computer vision systems as a way to boost generalization to novel objects, while retaining the benefits of end-to-end training.

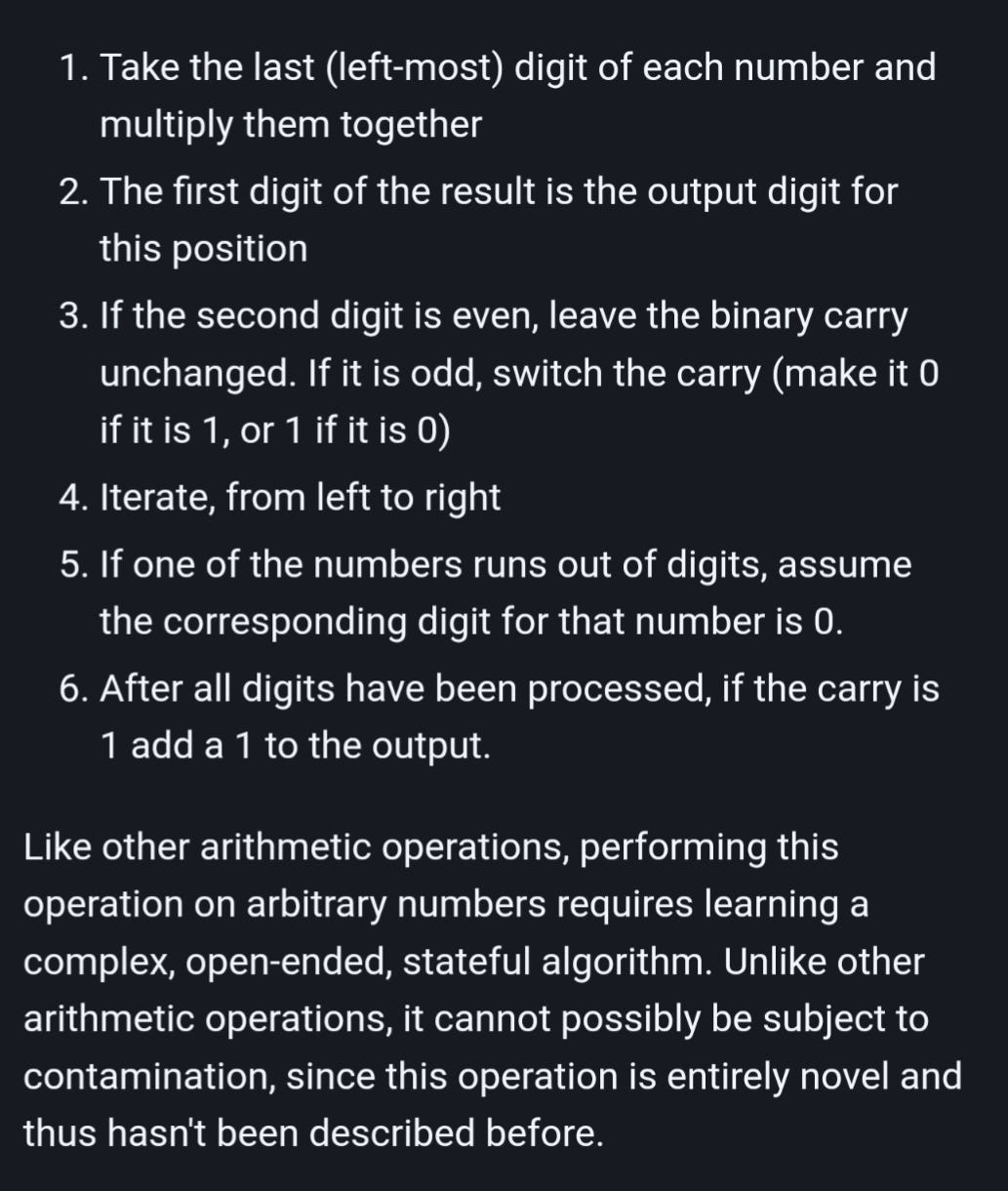

Thomas Miconi reproduces the results of a paper showing that LLMs can pick up new, complex algorithms from their prompts, and generalize them to arbitrary inputs. He used a totally novel (and deliberately bizarre) arithmetic operation to avoid contamination.

From Deep to Long Learning? “These models hold the promise to have context lengths of millions… or maybe even a billion!” https://hazyresearch.stanford.edu/blog/2023-03-27-long-learning

OpenAGI: New paper proposes LLMs + domain experts (i.e., task-specific models) + “Reinforcement Learning from Task Feedback” (RLTF) as a path to AGI: “…the LLM is responsible for synthesizing various external models for solving complex tasks, while RLTF provides feedback to improve its task-solving ability, enabling a feedback loop for self-improving AI.” https://arxiv.org/abs/2304.04370

OpenAI released an implementation of consistency models, a new family of generative models that achieve high sample quality without adversarial training. They support fast one-step generation by design, while still allowing for few-step sampling to trade compute for sample quality. https://github.com/openai/consistency_models

Insights into insight problem-solving: individuals with more connections between concepts in their semantic networks tend to solve problems using insight. https://www.sciencedirect.com/science/article/pii/S1871187123000470

SVT: Supertoken Video Transformer for Efficient Video Understanding — “Quantitatively, our method improves the performance of both ViT and MViT while requiring significantly less computations…” https://arxiv.org/abs/2304.00325

“GPTs are not being trained to imitate human error. They're being trained to *predict* human error...A human can write a rap battle in an hour. A GPT loss function would like the GPT to be intelligent enough to predict it on the fly...It is being asked to model what you were thinking - the thoughts in your mind whose shadow is your text output - so as to assign as much probability as possible to your true next word.” https://www.lesswrong.com/posts/nH4c3Q9t9F3nJ7y8W/gpts-are-predictors-not-imitators

BabyAGI User Guide: https://python.langchain.com/en/latest/use_cases/agents/baby_agi.html (see also another take on BabyAGI, focused on workflows that complete: https://github.com/alvarosevilla95/autolang)

Introducing GPT-Legion, an autonomous agent framework https://github.com/eumemic/gpt-legion

“the global semiconductor industry isn’t just the supply chain. It’s one of humanity’s great technological scientific achievements. Our ability to do this stuff at nanoscale is us up against the face of God, in a sense.” [Wired] https://archive.is/9U8YL

Scientists at EPFL’s Blue Brain Project have developed a groundbreaking computational model of the thalamic microcircuit in the mouse brain, offering new insights into the role this region plays in brain function and dysfunction. https://actu.epfl.ch/news/new-circuit-model-offers-insights-into-brain-funct/

“...you can do calculus with infinitesimals in a perfectly rigorous way...logician Abraham Robinson showed that hyperreal numbers are just as consistent as ordinary real numbers...Here's a free online textbook that teaches calculus this way...” https://mathstodon.xyz/@johncarlosbaez/110135844804089321

‘Bees are sentient’: inside the stunning brains of nature’s hardest workers https://www.theguardian.com/environment/2023/apr/02/bees-intelligence-minds-pollination

“Scientists haven’t spotted one of influenza’s most mysterious lineages for more than three years. Is it truly gone?” [The Atlantic] https://archive.is/AaJhH

Supernatural explanations across 114 societies are more common for natural than social phenomena https://twitter.com/danicajwilbanks/status/1642944504524337152

O'Neill Cylinder animation by Mark A. Garlick https://twitter.com/SpaceBoffin/status/1645839712106446853