Links for 2023-04-06

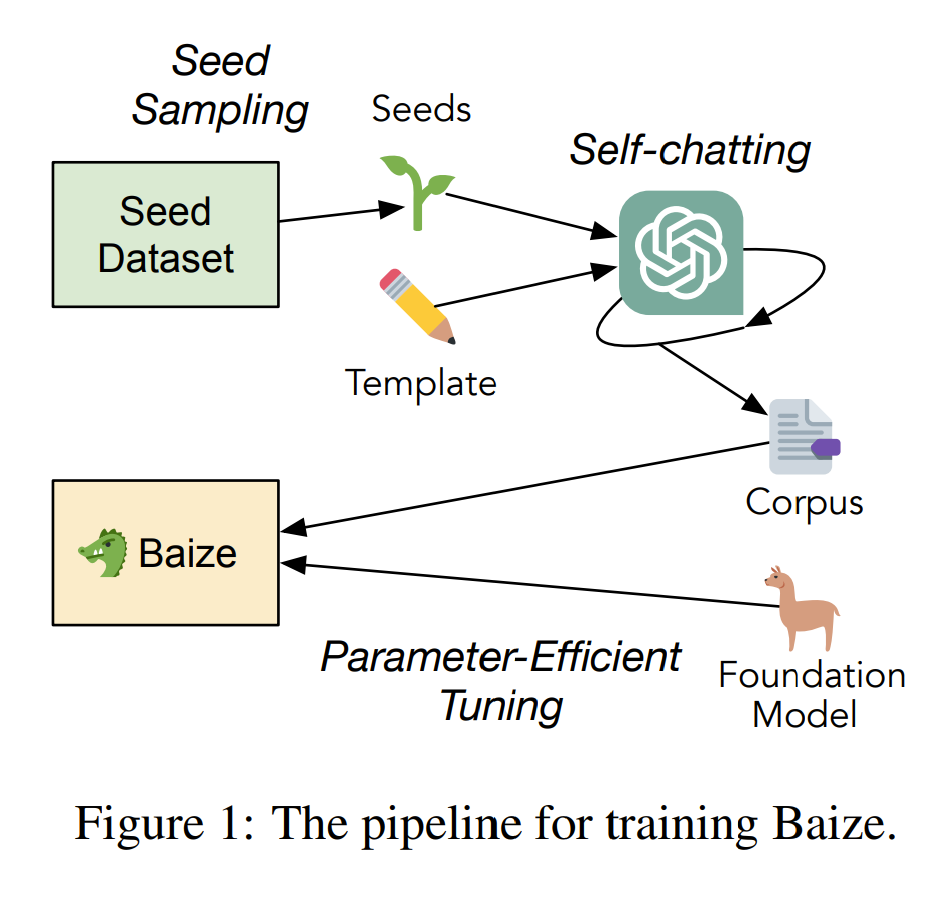

Baize: An Open-Source Chat Model with Parameter-Efficient Tuning on Self-Chat Data https://arxiv.org/abs/2304.01196

Proposes a pipeline that can automatically generate a high-quality multi-turn chat corpus by leveraging ChatGPT to engage in a conversation with itself.

The beginning of an AGI arms race? “On March 4, the opening ceremony of the 14th National Committee of the Chinese People’s Political Consultative Conference (CPPCC) was held. Zhu Songchun, a member of the CPPCC and director of the Beijing Institute for General Artificial Intelligence, suggested in a proposal that China should elevate the development of AGI to the level of the ‘Two Bombs, One Satellite’ of the contemporary era and seize the global strategic high ground of technology and industrial development.” https://www.chinatalk.media/p/ai-proposals-at-two-sessions-agi

TPU v4: An Optically Reconfigurable Supercomputer for Machine Learning with Hardware Support for Embeddings. Much cheaper, lower power, and faster than Infiniband, OCSes (optical circuit switches) and underlying optical components are <5% of system cost and <3% of system power. TPU v4 outperforms TPU v3 by 2.1x, and the TPU v4 pod is 4x larger at 4096 chips and thus ~10x faster overall. https://arxiv.org/abs/2304.01433

“REFINER, a framework for finetuning LMs to explicitly generate intermediate reasoning steps while interacting with a critic model that provides automated feedback on the reasoning. Specifically, the critic provides structured feedback that the reasoning LM uses to iteratively improve its intermediate arguments.” https://arxiv.org/abs/2304.01904

“…we are releasing Cerebras-GPT, a family of 7 GPT models from 111M to 13B parameters trained using the Chinchilla formula. These are the highest accuracy models for a compute budget and are available today open-source!” https://twitter.com/CerebrasSystems/status/1640725880711569408

“We present Cluster-Branch-Train-Merge (c-BTM), a new way to scale sparse expert LLMs on any dataset — completely asynchronously.” https://twitter.com/ssgrn/status/1640322362100051968

unarXive 2022: All arXiv Publications Pre-Processed for NLP, Including Structured Full-Text and Citation Network https://arxiv.org/abs/2303.14957

Robotic hand can identify objects with just one grasp https://news.mit.edu/2023/robotic-hand-can-identify-objects-just-one-grasp-0403

To Make Self-Driving Cars Safer, Expose Them to Terrible Drivers https://singularityhub.com/2023/03/31/to-make-self-driving-cars-safer-expose-them-to-terrible-drivers/

“I’m scared of AGI. It’s confusing how people can be so dismissive of the risks. I’m an investor in two AGI companies and friends with dozens of researchers working at DeepMind, OpenAI, Anthropic, and Google Brain. Almost all of them are worried.” https://twitter.com/arram/status/1642614341622181889

IQ estimates of public intellectuals and personas https://kirkegaard.substack.com/p/iq-estimates-of-public-intellectuals

Calling the Lab-Leak Theory 'Disinformation' Created Disinformation [The New York Times] https://archive.is/6YLW8

Do Politicians Ignore NIH Ties with Wuhan Lab to Protect Biodefense "Contract Racket"? https://disinformationchronicle.substack.com/p/do-politicians-ignore-nih-ties-with

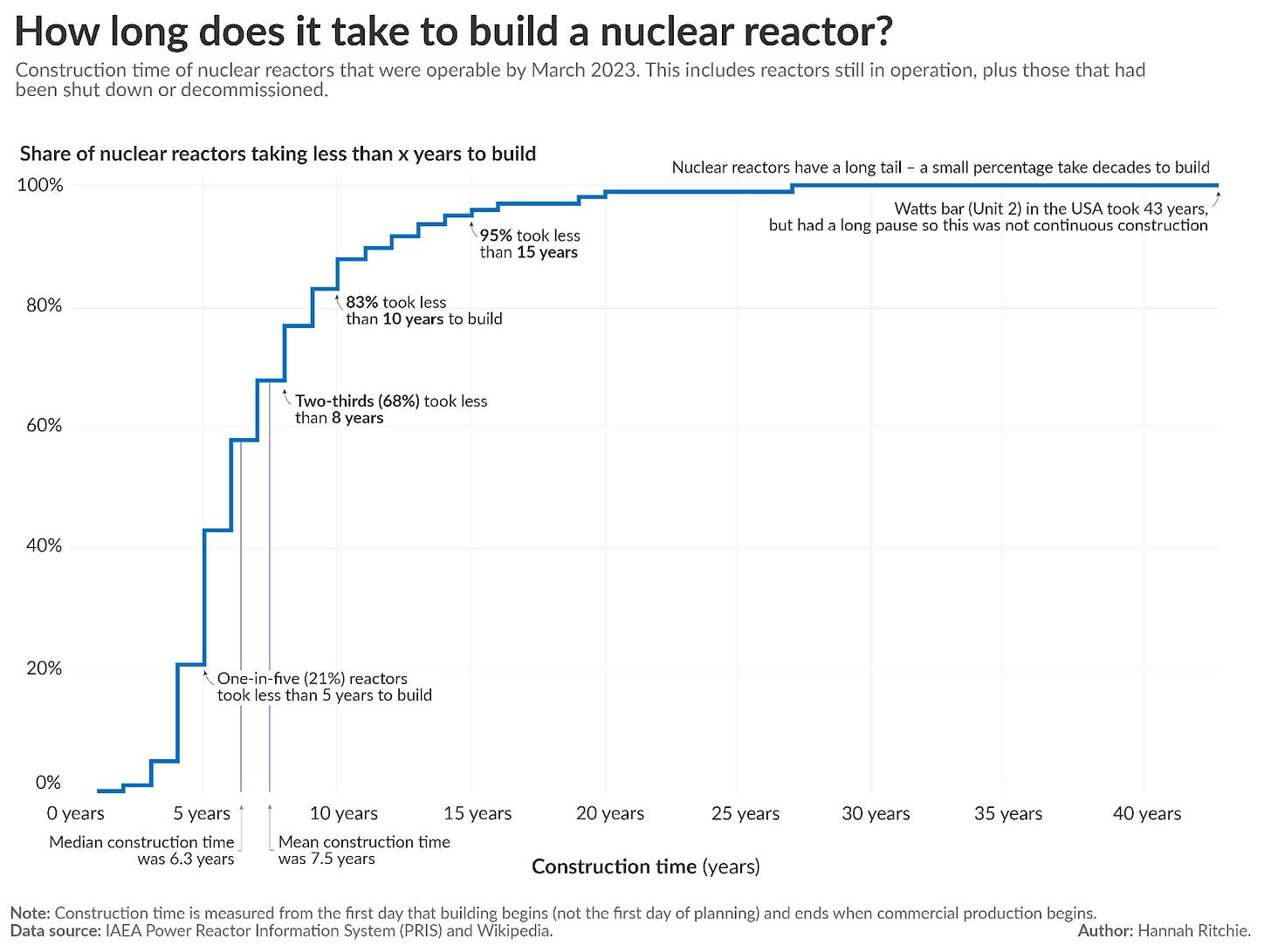

It takes around 6 to 8 years to build a nuclear reactor. That’s the average construction time globally. Reactors can be built very quickly: some have been built in just 3 to 5 years.

Read more: https://hannahritchie.substack.com/p/nuclear-construction-time

Derek Parfit in his book Reasons and Persons:

Compare three outcomes:

1. Peace.

2. A nuclear war that kills 99% of the world’s existing population.

3. A nuclear war that kills 100%.

(2) would be worse than (1), and (3) would be worse than (2).

Which is the greater of these two differences?

Most people believe that the greater difference is between (1) and (2). I believe that the difference between (2) and (3) is very much greater.

Extinction would be much worse because it prevents the existence of all future generations.