Links for 2023-04-01

Note: Despite April Fools' Day, there should be no hoaxes below.

HuggingGPT, a new way towards AGI: Solving AI Tasks with ChatGPT and its Friends in HuggingFace https://arxiv.org/abs/2303.17580

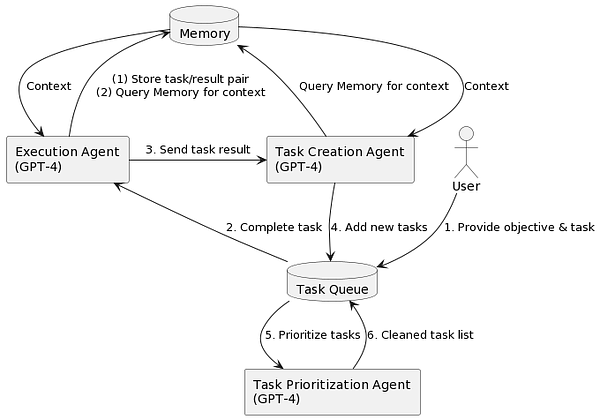

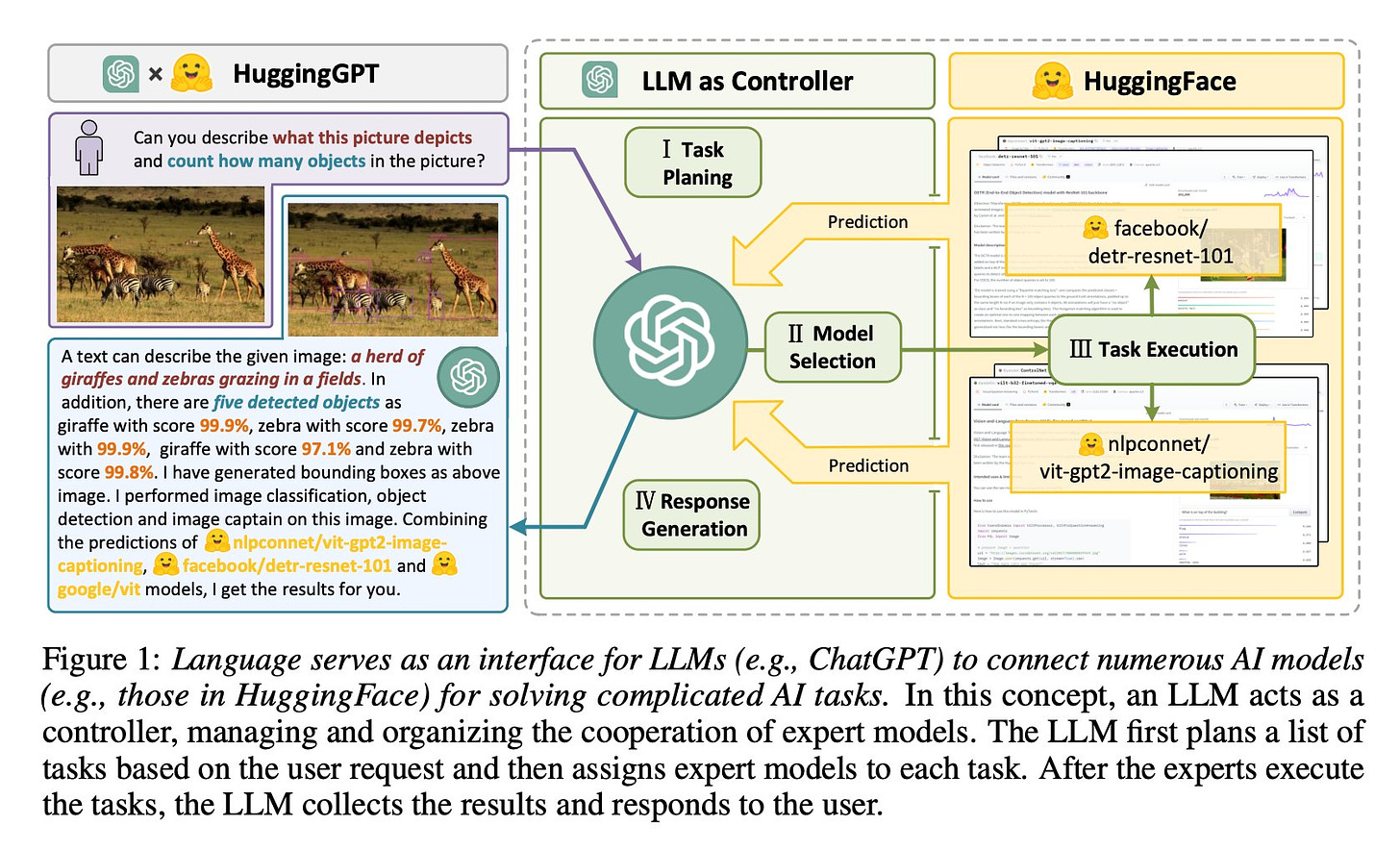

Solving complicated AI tasks with different domains and modalities is a key step toward artificial general intelligence (AGI). While there are abundant AI models available for different domains and modalities, they cannot handle complicated AI tasks. Considering large language models (LLMs) have exhibited exceptional ability in language understanding, generation, interaction, and reasoning, we advocate that LLMs could act as a controller to manage existing AI models to solve complicated AI tasks and language could be a generic interface to empower this. Based on this philosophy, we present HuggingGPT, a system that leverages LLMs (e.g., ChatGPT) to connect various AI models in machine learning communities (e.g., HuggingFace) to solve AI tasks. Specifically, we use ChatGPT to conduct task planning when receiving a user request, select models according to their function descriptions available in HuggingFace, execute each subtask with the selected AI model, and summarize the response according to the execution results. By leveraging the strong language capability of ChatGPT and abundant AI models in HuggingFace, HuggingGPT is able to cover numerous sophisticated AI tasks in different modalities and domains and achieve impressive results in language, vision, speech, and other challenging tasks, which paves a new way towards AGI.
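The four stages named in the abstract (task planning, model selection, task execution, response summary) can be sketched as a toy pipeline. This is only an illustration of the control flow, not the paper's implementation: the registry, the model names, and the keyword-based planner are all hypothetical stand-ins, and in the real system an LLM such as ChatGPT would do the planning, selection, and summarizing.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ToyModel:
    """Hypothetical stand-in for a hosted model and its function description."""
    name: str
    task: str
    description: str
    run: Callable[[str], str]


# Hypothetical registry; a real system would read model cards from a model hub.
REGISTRY = [
    ToyModel("toy-captioner", "image-to-text", "Describes an image",
             lambda x: f"caption({x})"),
    ToyModel("toy-tts", "text-to-speech", "Reads text aloud",
             lambda x: f"audio({x})"),
]


def plan_tasks(request: str) -> List[str]:
    """Stage 1, task planning. Assumption: keyword rules replace the LLM."""
    text = request.lower()
    tasks = []
    if "image" in text or "picture" in text:
        tasks.append("image-to-text")
    if "read" in text or "aloud" in text:
        tasks.append("text-to-speech")
    return tasks


def select_model(task: str, registry: List[ToyModel]) -> ToyModel:
    """Stage 2, model selection. Assumption: take the first model whose task matches."""
    for model in registry:
        if model.task == task:
            return model
    raise LookupError(f"no model registered for task {task!r}")


def execute(tasks: List[str], payload: str,
            registry: List[ToyModel]) -> List[Tuple[str, str]]:
    """Stage 3, task execution. Each subtask consumes the previous output."""
    results = []
    current = payload
    for task in tasks:
        model = select_model(task, registry)
        current = model.run(current)
        results.append((model.name, current))
    return results


def summarize(request: str, results: List[Tuple[str, str]]) -> str:
    """Stage 4, response summary. Assumption: a string template replaces the LLM."""
    steps = "; ".join(f"{name} -> {out}" for name, out in results)
    return f"Request: {request} | Steps: {steps}"


def run_pipeline(request: str, payload: str) -> str:
    tasks = plan_tasks(request)
    results = execute(tasks, payload, REGISTRY)
    return summarize(request, results)


if __name__ == "__main__":
    print(run_pipeline("Describe this image and read it aloud", "cat.png"))
```

Chaining each subtask on the previous output is the simplest possible dependency structure; it is chosen here only to keep the sketch short.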

"…we're sharing two major advancements in our work toward general-purpose embodied AI agents: VC-1 & ASC. We're excited for how this work will help build toward a future where AI agents can assist humans in both the virtual & physical world." https://ai.facebook.com/blog/robots-learning-video-simulation-artificial-visual-cortex-vc-1/

“Given the enormous number of instructional videos available online, learning a diverse array of multi-step task models from videos is an appealing goal. We introduce a new pre-trained video model, VideoTaskformer, focused on representing the semantics and structure of instructional videos.” https://medhini.github.io/task_structure/

“here’s a force-directed knowledge graph interface for @OpenAI’s gpt-4. given a topic, it prompts new questions to ask based on its own generated responses, allowing curiosity-led exploration of a concept.” https://twitter.com/hturan/status/1641780868640374784

TaskMatrix.AI: Completing Tasks by Connecting Foundation Models with Millions of APIs https://arxiv.org/abs/2303.16434

ReBotNet: Fast Real-time Video Enhancement https://jeya-maria-jose.github.io/rebotnet-web/

“Chatbase lets you create a custom ChatGPT from your data, customize its UI, and embed it on your website as a chat bubble or an iframe!” https://twitter.com/yasser_elsaid_/status/1640391858143608843

Incorporating AI to query your SQL database https://twitter.com/JonZLuo/status/1638638298666004483

“Rather than discarding a previous version of a model, Kim and his collaborators use it as the building blocks for a new model. Using machine learning, their method learns to “grow” a larger model from a smaller model in a way that encodes knowledge the smaller model has already gained. This enables faster training of the larger model.” https://news.mit.edu/2023/new-technique-machine-learning-models-0322

"Former UK government advisor: We’re giving away AGI to the private sector. Why? https://jameswphillips.substack.com/p/securing-liberal-democratic-control

“AI takeover is very likely: Humans compete with each other, but that doesn't "keep us in check" from the perspective of chimpanzees. Our concepts are too advanced for them. They lack the ability to participate in our economy. The world is now a human world, not a chimp world.” https://twitter.com/JeffLadish/status/1639194473350717442

A GPT-4-based worm https://github.com/refcell/run-wild

“i have been told that gpt5 is scheduled to complete training this december and that openai expects it to achieve agi.” https://www.nextbigfuture.com/2023/03/agi-level-gpt5-in-nine-months.html

OpenAI is apparently denying that it is 'currently training GPT-5': “Hannah Wong, a spokesperson for OpenAI, says the company spent more than six months working on the safety and alignment of GPT-4 after training the model. She adds that OpenAI is not currently training GPT-5.” https://www.wired.com/story/chatgpt-pause-ai-experiments-open-letter/

With GPT-4, OpenAI Is Deliberately Slow Walking To AGI https://www.piratewires.com/p/openai-slowing-walking-gpt

What can we learn from hunter-gatherers about children's mental health? An evolutionary perspective https://acamh.onlinelibrary.wiley.com/doi/10.1111/jcpp.13773

What does it mean to predict the next token well enough? … It means that you understand the underlying reality that led to the creation of that token.

Remember Teddy from Steven Spielberg's A.I. Artificial Intelligence?

Even if AI progress mostly stops now, I believe such a toy or artificial pet should soon be within our reach. What's needed is optimization, fine-tuning, and bringing all the tools together into a coherent whole. But we already have the basic technology in our hands:

1. Take a multimodal model like PaLM-E (this will serve as the evolutionary prior/innate knowledge).

2. Give it access to a robotic body (embodiment/feedback).

3. Give it long-term memory by giving it access to a database.

4. Give it self-reflection by allowing it to talk to itself, other models, and humans.

5. Give it a terminal goal like “make humans smile” (to be assessed using feedback from its camera) and allow it to generate instrumental goals on its own.
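To make the five ingredients concrete, here is a deliberately tiny sketch of how they might fit together in one loop. Everything in it is an assumption for illustration: `ToyBody` fakes the robot and its camera with random numbers, `Memory` is an in-process list rather than a real database, and a simple explore/exploit rule stands in for the multimodal model. It shows the shape of the loop, not a working pet.

```python
import random
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ToyBody:
    """Hypothetical robot body (step 2). The 'camera' reports whether a human smiled."""
    rng: random.Random

    def act(self, action: str) -> bool:
        # Assumption: made-up smile probabilities per action.
        chance = {"tell_joke": 0.7, "wave": 0.4, "stay_still": 0.1}[action]
        return self.rng.random() < chance


@dataclass
class Memory:
    """Long-term memory (step 3); a list stands in for a database."""
    episodes: List[Tuple[str, bool]] = field(default_factory=list)

    def store(self, action: str, smiled: bool) -> None:
        self.episodes.append((action, smiled))

    def success_rate(self, action: str) -> float:
        outcomes = [smiled for a, smiled in self.episodes if a == action]
        # Untried actions get a neutral prior of 0.5.
        return sum(outcomes) / len(outcomes) if outcomes else 0.5


def choose_action(memory: Memory, actions: List[str],
                  rng: random.Random, explore: float = 0.2) -> str:
    """Stand-in for the model's policy (step 1): mostly repeat what worked."""
    if rng.random() < explore:
        return rng.choice(actions)
    return max(actions, key=memory.success_rate)


def reflect(memory: Memory, actions: List[str]) -> str:
    """Self-reflection (step 4): turn remembered outcomes into an instrumental goal."""
    best = max(actions, key=memory.success_rate)
    rate = memory.success_rate(best)
    return f"Instrumental goal: prefer '{best}' (observed smile rate {rate:.2f})"


def run_agent(steps: int = 200, seed: int = 0) -> Tuple[Memory, str]:
    """Terminal goal (step 5): make humans smile, judged by camera feedback."""
    rng = random.Random(seed)
    body = ToyBody(rng)
    memory = Memory()
    actions = ["tell_joke", "wave", "stay_still"]
    for _ in range(steps):
        action = choose_action(memory, actions, rng)
        smiled = body.act(action)
        memory.store(action, smiled)
    return memory, reflect(memory, actions)


if __name__ == "__main__":
    memory, note = run_agent()
    print(len(memory.episodes), "episodes;", note)
```

The reflection step here only reads the agent's own memory; talking to other models and to humans, as the list suggests, is left out to keep the sketch self-contained.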

P.S. I wrote this a few days ago, before research caught up.