Links for 2023-03-30

Lots of prominent people, from Elon Musk to Yuval Noah Harari to Yoshua Bengio, are calling for a pause on training AI systems more powerful than GPT-4 until they can be shown to be safe. https://futureoflife.org/open-letter/pause-giant-ai-experiments (New York Times coverage: https://archive.is/JCJTb; Prediction market: Will any top AI lab commit to a moratorium on giant AI experiments before 2024? https://manifold.markets/MatthewBarnett/will-any-top-ai-lab-commit-to-a-mor; Eliezer Yudkowsky for Time Magazine: “Pausing AI Developments Isn't Enough. We Need to Shut it All Down” https://archive.is/NM2n6)

“There are plausibly 100,000 ML capabilities researchers in the world (30,000 attended ICML alone) vs. 300 alignment researchers in the world, a factor of ~300:1. The scalable alignment team at OpenAI has all of ~7 people.” https://www.forourposterity.com/nobodys-on-the-ball-on-agi-alignment/

Who Believes AI Will Destroy Humanity? From a high-IQ sample (average IQ: 140): “Middle Easterners and Indians most concerned about AI Risk. Blacks least concerned…Trans women and non-binary most concerned, trans men and cis people least concerned…Artists, AI researchers, and mathematicians most concerned of all fieldsmen. Economists and statisticians are not concerned…People who like humanities are less likely to believe in AI risk.” https://sebjenseb.substack.com/p/who-believes-ai-will-destroy-humanity

Planning Helps LLMs with Logical Reasoning: Performs competitively with GPT-3 on a Q&A task despite having only ~1.5B parameters https://arxiv.org/abs/2303.15714

“…we're releasing GPT4All, an assistant-style chatbot distilled from 430k GPT-3.5-Turbo outputs that you can run on your laptop.” https://twitter.com/nomic_ai/status/1640834838578995202 (previously: Stanford Alpaca, a new LLM, was fine-tuned on 8 Nvidia A100 GPUs for 3 hours ($100) using training data generated with OpenAI GPT-3 ($500). The model can run on a single GPU or CPU, such as Apple Silicon or a Raspberry Pi, and offers performance similar to GPT-3 at a total cost of $600. https://crfm.stanford.edu/2023/03/13/alpaca.html)

The Retrieval Plugin allows ChatGPT to have a memory https://github.com/openai/chatgpt-retrieval-plugin/tree/main/examples/memory

Recently, we observed an emerging pattern where LLMs are augmented using tools. The following article describes ReAct, one of the key methods to achieve this. https://azizbelaweid.medium.com/react-augmenting-llms-with-actions-b6ecfadcb4e9

“Ever wonder how a language model decides what to say next? Our method, the tuned lens (https://arxiv.org/abs/2303.08112), can trace an LM’s prediction as it develops from one layer to the next. It's more reliable and applies to more models than prior state-of-the-art.” https://twitter.com/norabelrose/status/1636068190529945601

“RWKV is One Dev's Journey to Dethrone GPT Transformers. The largest RNN ever (up to 14B). Parallelizable. Faster inference & training.” https://twitter.com/BlinkDL_AI/status/1638555109373378560

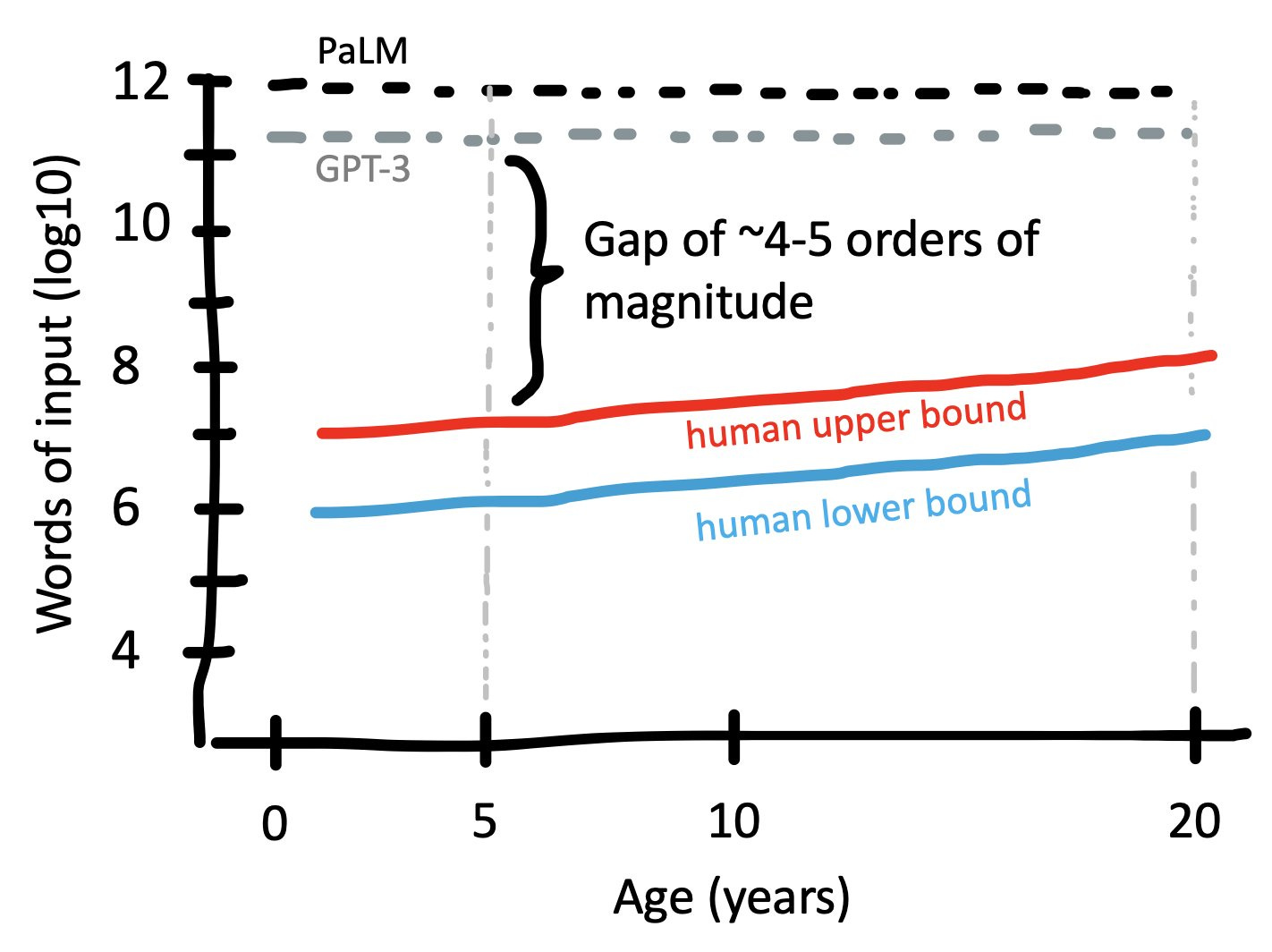

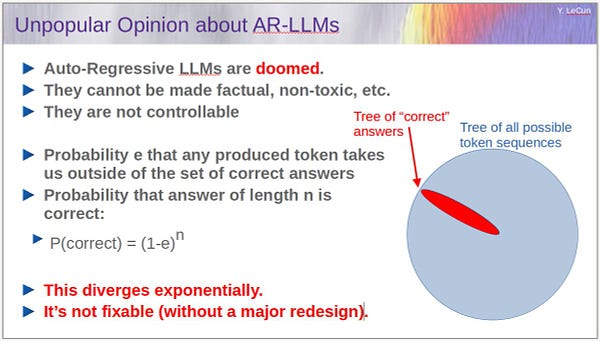

We May be Surprised Again: Why I take LLMs seriously. https://www.inference.vc/we-may-be-surprised-again/

The ChatGPT dinner that changed everything for Bill Gates. https://jonerlichman.substack.com/p/the-chatgpt-dinner-that-changed-everything

“approximately 99% of human genes have synonyms in the mice genome” https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7555044/

We Must Always Pursue Economic Growth: "I shall not argue that economic growth itself is always better. Rather, I shall argue that stopping growth requires morally objectionable actions." https://www.cambridge.org/core/journals/utilitas/article/abs/we-must-always-pursue-economic-growth/9E117E9DA55AB852CDC25DFA82EEA6F7

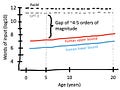

Large language models require orders of magnitude more words than humans: https://twitter.com/mcxfrank/status/1640379247373197313

But there are important caveats:

1. Humans possess innate knowledge in the form of an evolutionary prior that was fine-tuned over billions of years.

2. Humans benefit from transfer learning from multiple modalities.

3. Humans are grounded by and receive feedback through embodiment.

4. Human children can talk to adults. With access to “adult” language models, it gets vastly easier to train new models. By training a new model on the output of an existing model, performance similar to GPT-3 can be achieved at a total cost of $600 (see the Stanford Alpaca link above).

Russia tried to stage an incident involving Ukrainian soldiers. But it was quickly discovered that the video was filmed deep inside Russian-occupied territory. Locals even went there to confirm it:

There are other problems with the video as well:

Some official Russian accounts are still promoting it: