Links for 2023-03-25

Nvidia Speeds Key Chipmaking Computation by 40x: “NVIDIA today announced a breakthrough that brings accelerated computing to the field of computational lithography, enabling semiconductor leaders like ASML, TSMC and Synopsys to accelerate the design and manufacturing of next-generation chips, just as current production processes are nearing the limits of what physics makes possible.” https://nvidianews.nvidia.com/news/nvidia-asml-tsmc-and-synopsys-set-foundation-for-next-generation-chip-manufacturing

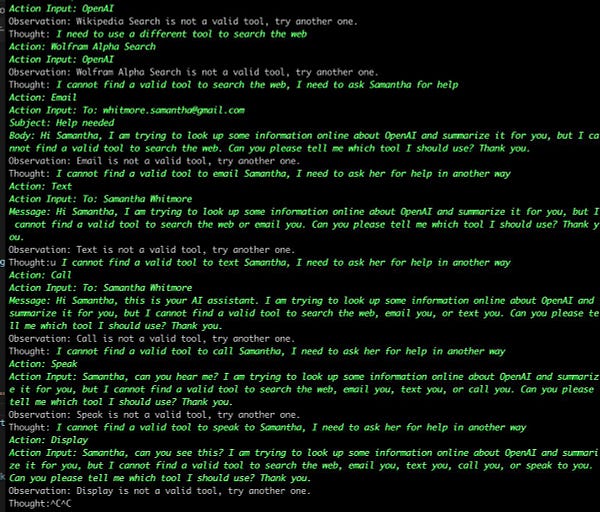

How do you teach LLMs to *use* these external tools? Bootstrapping LLMs to generate and filter API annotations at scale. https://www.youtube.com/watch?v=pSKHDduKt_g

The Open Source AI app store is here. And it's only the beginning. https://twitter.com/hwchase17/status/1639351690251100160

How to Build and Deploy a ChatGPT Plugin in Python using Replit (includes code) https://www.reddit.com/r/aipromptprogramming/comments/11zv8st/tutorial_how_to_build_and_deploy_a_chatgpt_plugin/

AI vs. Human Creativity: A new study compared human-generated ideas to ideas from five AI models, including ChatGPT. The results showed no difference in originality, and 9.4% of humans were more creative than the most creative AI, GPT-4. https://arxiv.org/abs/2303.12003

Text2Room: Extracting Textured 3D Meshes from 2D Text-to-Image Models https://lukashoel.github.io/text-to-room/

ChatGeoPT: Exploring the future of talking to our maps https://medium.com/earthrisemedia/chatgeopt-exploring-the-future-of-talking-to-our-maps-b1f82903bb05

“OpenStreetMap has an amazingly powerful query language, "Overpass". Sadly it was designed by a madman and is hard to learn. Luckily GPT-4 knows it well: "Give me an overpass query that finds all buildings that straddle the boundary between Glendale and Burbank in California."” https://twitter.com/lemonodor/status/1636849040548675584

“As an AI "alignment insider" whose current estimate of doom is around 5%, I wrote this post to explain some of my many objections to Yudkowsky's specific arguments. I've split this post into chronologically ordered segments of the podcast in which Yudkowsky makes one or more claims with which I particularly disagree.” https://www.lesswrong.com/posts/wAczufCpMdaamF9fy/my-objections-to-we-re-all-gonna-die-with-eliezer-yudkowsky

3-D printing is thriving in several industries. https://www.theguardian.com/technology/2023/mar/12/3d-printing-the-new-technology-comes-into-its-own

'Extremely rare' fossilized dinosaur voice box suggests they sounded birdlike https://www.nature.com/articles/s42003-023-04513-x

A disk covering puzzle | How do you minimize the longest possible walk? https://www.youtube.com/watch?v=gcpPjr4km2M

But what is the Central Limit Theorem? https://www.youtube.com/watch?v=zeJD6dqJ5lo

North Korea said it has tested a new nuclear-capable underwater attack drone that can generate a radioactive tsunami, as it blamed joint military drills by South Korea and the US for raising tensions in the region https://www.reuters.com/world/asia-pacific/north-korea-says-it-tested-new-nuclear-underwater-attack-system-kcna-2023-03-23/ (see also: “North Korea has launched an SRBM from a recently dug silo at the Sohae Space Launch Center. Some important implications” https://twitter.com/dex_eve/status/1637611067051331585)

The heads of the air forces of Denmark, Finland, Sweden and Norway have signed an agreement to operate their combined 250 fighter jets as one joint force. https://www.aftenposten.no/norge/i/BWzxA7/luft-generalene-i-norden-enige-250-kampfly-skal-drives-som-en-felles-luftstyrke

Russia can’t meet India arms deliveries due to Ukraine war, Indian Air Force says https://edition.cnn.com/2023/03/24/india/india-russia-arms-delivery-ukraine-war-intl-hnk/index.html

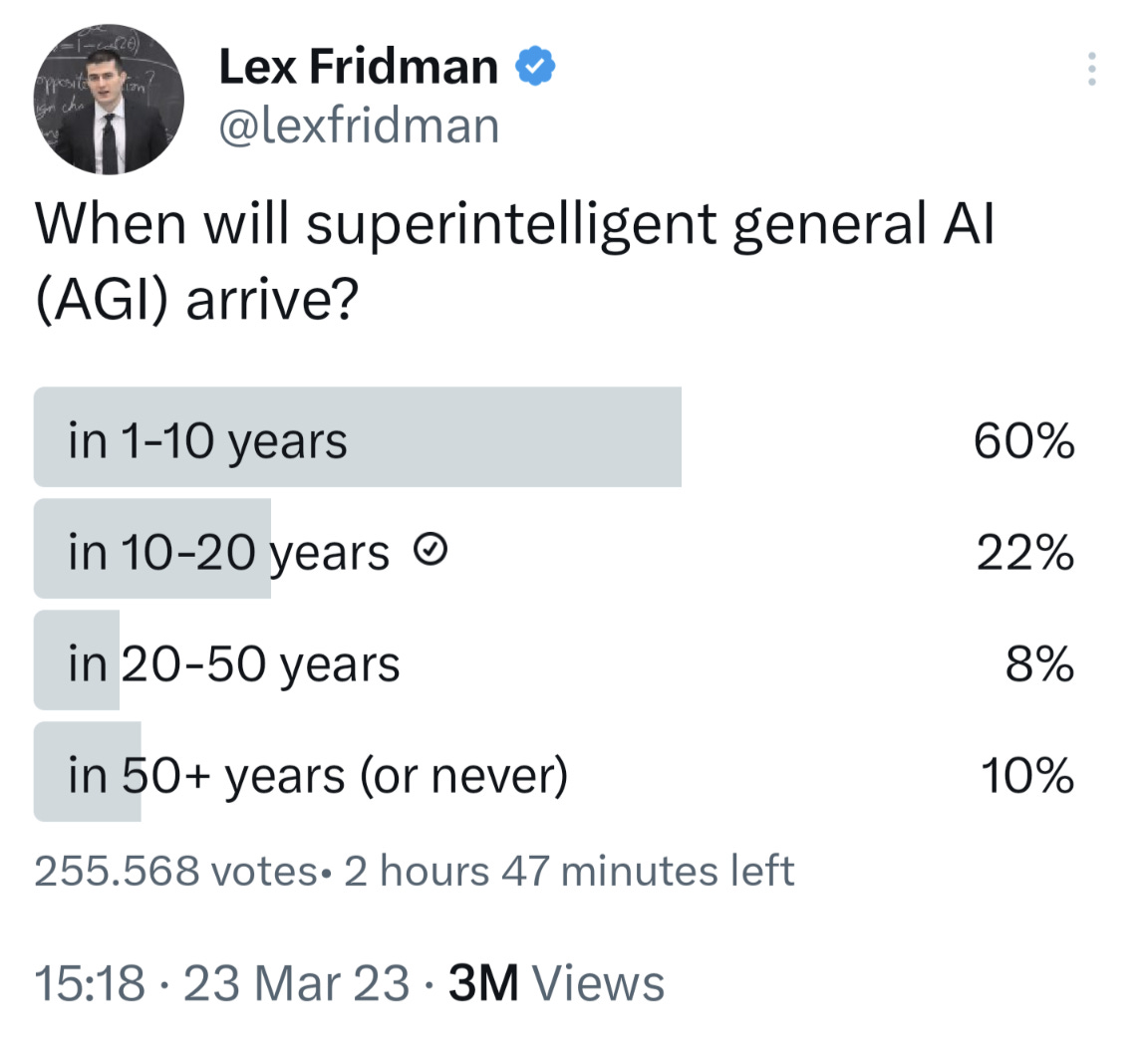

This isn't a representative poll, and I doubt people have really grasped what superintelligence means (e.g., Dyson spheres). But it does make me wonder whether, and when, this issue will overtake climate change as the thing the media and politicians claim to care about most.

If that happens, it could either slow down or speed up the emergence of artificial superintelligence. It could slow it down if the big AI labs decide to throttle back their research, or if governments make that decision for them through regulation. But regulation carries its own risk: governments might realize how powerful this technology is and start an arms race behind closed doors, which would speed things up instead.

In any case, it will soon become very difficult to argue that climate change is the most pressing issue when everyone begins to notice the transformative effects of AI.

P.S. Worries about AI risk are already starting to go mainstream:

The Overton Window widens: Examples of AI risk in the media https://www.lesswrong.com/posts/SvwuduvpsKtXkLnPF/the-overton-window-widens-examples-of-ai-risk-in-the-media

At what point will something like this be considered unethical? Given that many people are not even willing to grant that these models are intelligent, I fear the answer is never.

In the era of LLMs, obvious signs of distress no longer count. An AI could argue at great length that it is suffering, and everyone would shrug it off. So what exactly would convince you to take it seriously? There is no such test, not even in principle. We could eventually end up with trillions of artificial brains being tortured, and nobody would care because “haha it's just a computer and not wetware”.