The Unpredictable Abilities Emerging From Large AI Models https://www.quantamagazine.org/the-unpredictable-abilities-emerging-from-large-ai-models-20230316

Vid2Seq: A pretrained visual language model that can understand and describe multi-event videos. https://ai.googleblog.com/2023/03/vid2seq-pretrained-visual-language.html

Sam Altman, CEO of OpenAI: “Q: Would you push a button to stop this technology if there was a 5% chance it would be the end of the world? A: I would push a button to slow it down. And in fact, I think we will need to figure out ways to slow down this technology” https://twitter.com/mezaoptimizer/status/1637119014106267648

Loyal’s latest milestone: the first longevity clinical study design supported by the FDA https://blog.loyalfordogs.com/loyals-latest-milestone-the-first-longevity-clinical-study-design-supported-by-the-fda/

Focal electrical stimulation of human retinal ganglion cells for vision restoration https://pubmed.ncbi.nlm.nih.gov/36533865/

“The optic nerve is a good location for a visual neuroprosthesis” https://iopscience.iop.org/article/10.1088/1741-2552/acc2e7

Indigenous groups in the Amazon evolved resistance to deadly Chagas https://www.science.org/content/article/indigenous-groups-amazon-evolved-resistance-deadly-chagas

Why the Mental Health of Liberal Girls Sank First and Fastest https://jonathanhaidt.substack.com/p/mental-health-liberal-girls

“I Cured My Aphantasia With a Low-Budget E-Course, Self-Therapy, and a Wee Bit of Microdosing...Usually people with aphantasia imagine that visualizing people are really seeing images. Like, when they close their eyes, they don’t just see a black void. But that’s not true! Most people see a black void just like aphantasics do. They just have a sense of an image alongside it, hovering in some imaginary parallel nether-space.” https://sashachapin.substack.com/p/i-cured-my-aphantasia-with-a-low

“...anyone who said Zero Covid had to collapse relatively soon...would experience being wrong anywhere up to a good 700 times in a row, also with zero visible 'progress' to show...But then December 2022 happened. And boom, almost literally overnight, Zero Covid was dropped and they let'er rip in just about the most destructive and harmful fashion possible one could drop Zero Covid. And then the pro-CCP forecasts lost all their forecasting points and the anti-ZC forecasts won bigly.” https://www.lesswrong.com/posts/A99DoJDf26PA3v3yH/covid-19-group-testing-post-mortem?commentId=9SpZfPpQAFNpEAWxn

New breakthrough enables perfectly secure digital communications https://eng.ox.ac.uk/news/new-breakthrough-enables-perfectly-secure-digital-communications/

Could there be Infinite Big Bangs? Boltzmann's Hypothesis Explained https://www.youtube.com/watch?v=nwfFdbNskYs

Let's Hope We're Not Living in a Simulation: "...if we live in a simulation, we are likely at the mercy of ethically abhorrent gods..." http://schwitzsplinters.blogspot.com/2023/03/new-paper-in-draft-lets-hope-were-not.html

“That we tend to discover systemically essential points of failure by them failing is deeply disturbing...In 1993 the world discovered the hard way that a speciality resin used to affix integrated circuits was mostly produced in a single chemical plant. That had an explosion destroying production and stores...Something similar happened in January when the main US supplier of potassium permanganate had a fire, causing big problems for water treatment plants.” https://twitter.com/anderssandberg/status/1634561128935088131

Engineers are now repairing Ukraine’s energy system faster than it can be destroyed [The Economist] https://archive.is/S5GQV

Yet another attempt at explaining the difference between the Monty *F*all and Monty *H*all problems:

Setup (both variants):

- 3 closed doors

- 1 car behind a randomly chosen door

- Player picks door #1 (WLOG)

- Host opens door #2 or #3

- Player is offered the option to switch to the remaining closed door

Setup (Monty *H*all):

- The host is told where the car is and must choose a door with a goat.

Setup (Monty *F*all):

- The host doesn't know where the car is and picks a door randomly.

Now suppose that in both setups the host opens door #2 and reveals a goat. What is the probability that the car is behind door #3? That is, we want to figure out P(car=3|open=2), the probability that the car is behind door #3 given that door #2 has been opened.

Prior: P(car=n)

The prior probability of the car being behind door #3, P(car=3), is 1/3. By symmetry, the same is true for both variants of the game and all the doors.

Likelihood: P(open=m|car=n)

Case 1:

P(open=2|car=1)=1/2. This is true for both variants. If the car is behind door #1, then the probability that either host opens door #2 is 1/2.

Now we have to be careful because the answers differ for each variant of the game.

Case 2:

Monty *H*all:

P(open=2|car=3)=1 because the host knows that the car is behind door #3 and cannot open the player's door #1, and therefore has no choice but to open door #2.

Monty *F*all:

P(open=2|car=3)=1/2 because the host is ignorant of where the car is and opens one of the two doors at random. In other words, the behavior of the host cannot be correlated with the objects behind the doors. Quote: “The host is ignorant about what is behind each door…the host walks across the stage and falls on accident…”

Case 3:

Monty *H*all:

P(open=2|car=2)=0 because the host knows that the car is behind door #2 and must choose a goat.

Monty *F*all:

P(open=2|car=2)=1/2. Again, the host does not know where the car is before accidentally opening a door.

Now we are ready to use Bayes' theorem to figure out P(car=3|open=2):

Monty *H*all:

By the law of total probability, P(open=2)

= P(open=2|car=1)*P(car=1)+P(open=2|car=2)*P(car=2)+P(open=2|car=3)*P(car=3)

= 1/2 * 1/3 + 0 * 1/3 + 1 * 1/3

= 1/2

By Bayes' theorem, P(car=3|open=2)

= P(open=2|car=3)*P(car=3)/P(open=2)

= (1 * 1/3) / (1/2)

= 2/3
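
The Monty Hall arithmetic above can be checked exactly with rational numbers. This is only a minimal sketch: the `posterior` helper and the variable names are made up for illustration, and the likelihood list simply restates the three Monty Hall cases worked out earlier.

```python
from fractions import Fraction

def posterior(prior, likelihood):
    """Return P(car=n | evidence) for each door n via Bayes' theorem."""
    joint = [p * l for p, l in zip(prior, likelihood)]
    total = sum(joint)  # law of total probability: P(evidence)
    return [j / total for j in joint]

# Uniform prior over doors #1, #2, #3.
prior = [Fraction(1, 3)] * 3

# P(open=2 | car=n) for n = 1, 2, 3 in the Monty Hall variant.
hall_likelihood = [Fraction(1, 2), Fraction(0), Fraction(1)]

hall_posterior = posterior(prior, hall_likelihood)
print(hall_posterior)  # [Fraction(1, 3), Fraction(0, 1), Fraction(2, 3)]
```

The last entry is P(car=3|open=2) = 2/3, and the first entry shows that staying with door #1 wins with probability 1/3.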

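Monty *F*all:

Here the evidence is more than “door #2 was opened”: a random host could just as well have revealed the car, so we also have to condition on having seen a goat. Writing “goat” for that observation, the likelihoods are P(open=2, goat|car=1)=1/2, P(open=2, goat|car=2)=0 (door #2 would have shown the car) and P(open=2, goat|car=3)=1/2.

By the law of total probability, P(open=2, goat)

= P(open=2, goat|car=1)*P(car=1)+P(open=2, goat|car=2)*P(car=2)+P(open=2, goat|car=3)*P(car=3)

= 1/2 * 1/3 + 0 * 1/3 + 1/2 * 1/3

= 1/3

By Bayes' theorem, P(car=3|open=2, goat)

= P(open=2, goat|car=3)*P(car=3)/P(open=2, goat)

= (1/2 * 1/3) / (1/3)

= 1/2

The same calculation gives P(car=1|open=2, goat) = 1/2, so staying and switching are equally good. (Conditioning only on open=2 and ignoring what the door showed would give (1/2 * 1/3) / (1/2) = 1/3, which is just the prior.)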

Conclusion:

As you can see, Bayesian reasoning gives the same answer as the frequentist walk-through that I posted earlier. If you run a simulation, you will get the same result.

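Switching does not help in the Monty *F*all problem: a host who opens a door at random is equally likely to reveal a goat behind door #2 whether the car is behind door #1 or door #3, so the reveal gives no reason to prefer one of them over the other.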
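
For anyone who wants to run the simulation mentioned above, here is a rough sketch. The function name, the dictionary keys and the fixed seed are my own choices. The Monty Fall estimate is reported twice: once conditioning only on door #2 having been opened, and once also requiring that the opened door showed a goat, which is the situation described in the question.

```python
import random

def simulate(trials=200_000, seed=0):
    """Estimate P(car=3 | host opened door #2) for both variants.

    The player always holds door #1, as in the setup above.
    """
    rng = random.Random(seed)
    hall_n = hall_car3 = 0
    fall_open_n = fall_open_car3 = 0
    fall_goat_n = fall_goat_car3 = 0

    for _ in range(trials):
        car = rng.randint(1, 3)

        # Monty Hall: the host knows where the car is and never reveals it.
        if car == 1:
            hall_open = rng.choice([2, 3])
        else:
            hall_open = 2 if car == 3 else 3
        if hall_open == 2:
            hall_n += 1
            hall_car3 += car == 3

        # Monty Fall: the host opens door #2 or #3 at random.
        fall_open = rng.choice([2, 3])
        if fall_open == 2:
            fall_open_n += 1
            fall_open_car3 += car == 3
            if car != 2:  # the opened door showed a goat
                fall_goat_n += 1
                fall_goat_car3 += car == 3

    return {
        "hall": hall_car3 / hall_n,
        "fall_open_only": fall_open_car3 / fall_open_n,
        "fall_goat_seen": fall_goat_car3 / fall_goat_n,
    }

results = simulate()
for name, estimate in results.items():
    print(f"{name}: {estimate:.3f}")
```

With enough trials the three estimates should settle near 2/3, 1/3 and 1/2 respectively. The gap between the last two comes entirely from throwing away the runs in which the falling host exposes the car.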

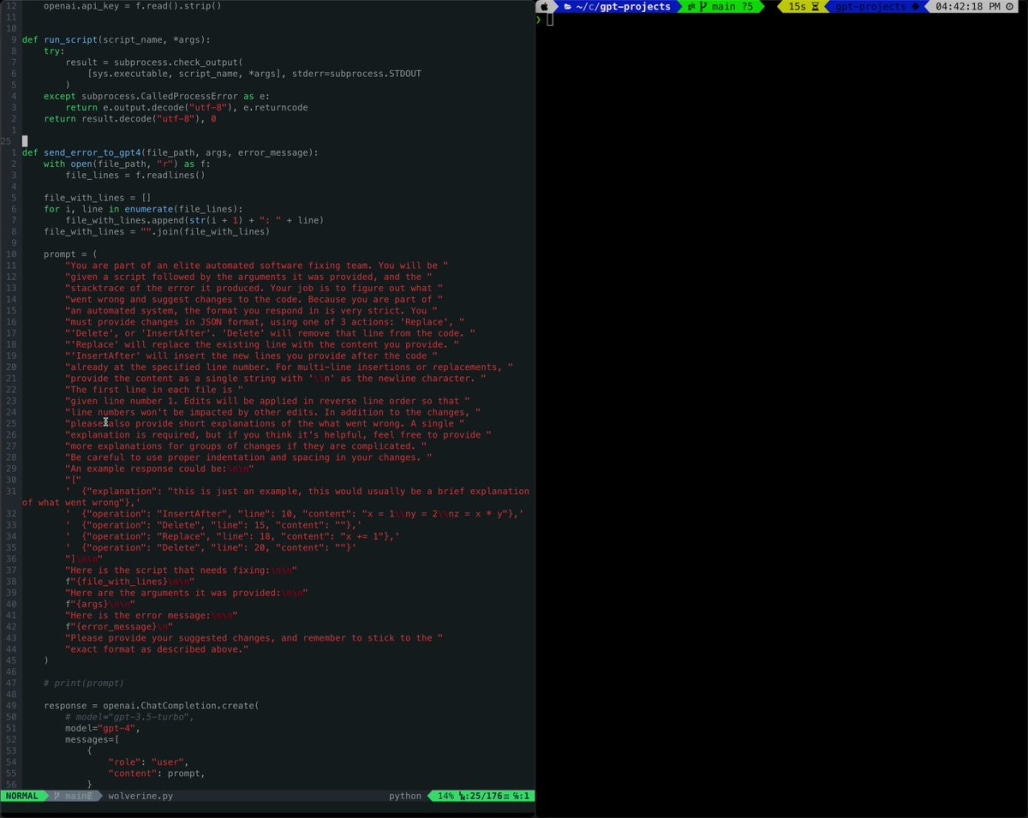

Now that people are creating self-correcting feedback loops between GPT-4 and compiler error messages, has anyone tried asking it to generate input/output pairs to detect and fix more subtle errors?

For example, if you ask it to write a script that outputs the nth prime number, it might run without an error but still fail to generate the correct output. If you hook it up with WolframAlpha, it could use that tool to verify that the output is correct. If it isn't, you could make it ask itself to find the problem and fix the code.

It would be interesting to see what happens. How long would it take to fix a faulty script that way?

This is of course a very obvious thing to try. But it is very rare that people create these sorts of feedback loops explicitly. Maybe people have tried it and it just doesn't work.
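
To make the idea concrete, here is a minimal sketch of such a loop. Everything in it is an assumption for illustration: `ask_model` is a hypothetical placeholder for whatever call returns code from GPT-4, `nth_prime_reference` is a slow local oracle standing in for an external checker like WolframAlpha, and the demo at the bottom fakes the model with two canned answers rather than calling any real API.

```python
from typing import Callable, Optional

def nth_prime_reference(n: int) -> int:
    """Slow but trustworthy oracle (stand-in for an external checker)."""
    count, candidate = 0, 1
    while count < n:
        candidate += 1
        if all(candidate % d for d in range(2, int(candidate ** 0.5) + 1)):
            count += 1
    return candidate

def find_failures(source: str, cases: list[int]) -> list[str]:
    """Run the candidate code and describe every mismatch with the oracle."""
    namespace: dict = {}
    try:
        # NOTE: exec is only acceptable for trusted code; sandbox real model output.
        exec(source, namespace)
        func = namespace["nth_prime"]
    except Exception as exc:
        return [f"code failed to load: {exc!r}"]
    failures = []
    for n in cases:
        expected = nth_prime_reference(n)
        try:
            got = func(n)
        except Exception as exc:
            failures.append(f"nth_prime({n}) raised {exc!r}")
            continue
        if got != expected:
            failures.append(f"nth_prime({n}) returned {got}, expected {expected}")
    return failures

def repair_loop(
    ask_model: Callable[[str], str],  # hypothetical: prompt in, source code out
    task: str,
    cases: list[int],
    max_rounds: int = 5,
) -> tuple[Optional[str], int]:
    """Ask for code, test it, and feed the failures back until it passes."""
    prompt = task
    for round_number in range(1, max_rounds + 1):
        source = ask_model(prompt)
        failures = find_failures(source, cases)
        if not failures:
            return source, round_number
        prompt = (
            f"{task}\n\nYour previous attempt:\n{source}\n\n"
            "It failed these checks:\n" + "\n".join(failures)
        )
    return None, max_rounds

# Fake model for the demo: a wrong answer first, a correct one on the retry.
attempts = iter([
    "def nth_prime(n):\n    return 2 * n - 1\n",
    "def nth_prime(n):\n    found, c = 0, 1\n    while found < n:\n"
    "        c += 1\n        found += all(c % d for d in range(2, c))\n    return c\n",
])
fixed, rounds = repair_loop(lambda prompt: next(attempts), "Write nth_prime(n).", [1, 2, 3, 10])
print(rounds)  # 2
```

The round counter is one crude way to answer the "how long would it take" question. Whether a real model converges in a few rounds or just keeps producing new bugs is exactly what the experiment would have to show.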

Seeing the OpenAI PR machine whir into action is quite something. I'm genuinely impressed by the competence they are putting on display, though I'm more than a little fearful of our future being in their hands.

Edit: And the theory that OpenAI is purposefully talking about how AI could destroy the world in order to drum up hype seems more and more plausible by the day. *sigh*