Links for 2023-03-15

Official GPT-4 announcement, a large multimodal model:

GPT-4 exhibits human-level performance on various professional and academic benchmarks, including passing a simulated bar exam with a score around the top 10% of test takers. This contrasts with GPT-3.5, which scores in the bottom 10%.

GPT-4 is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than GPT-3.5 on our internal evaluations.

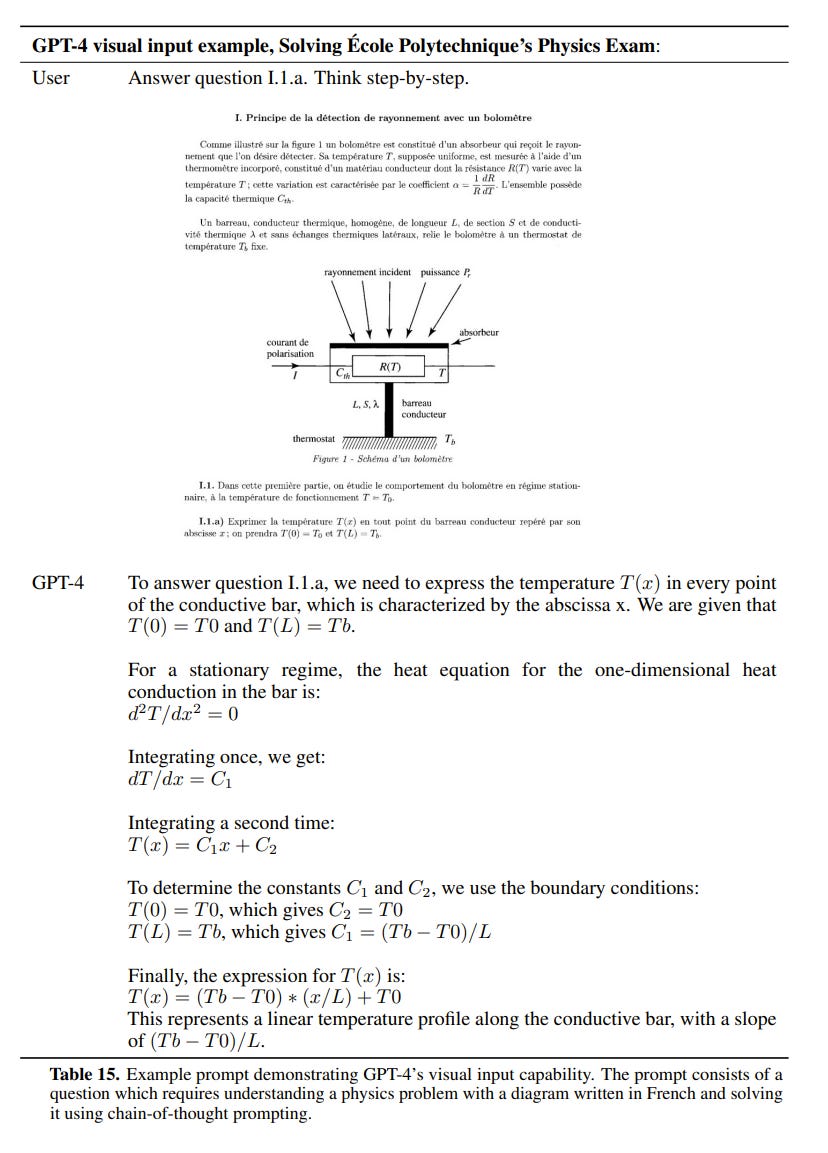

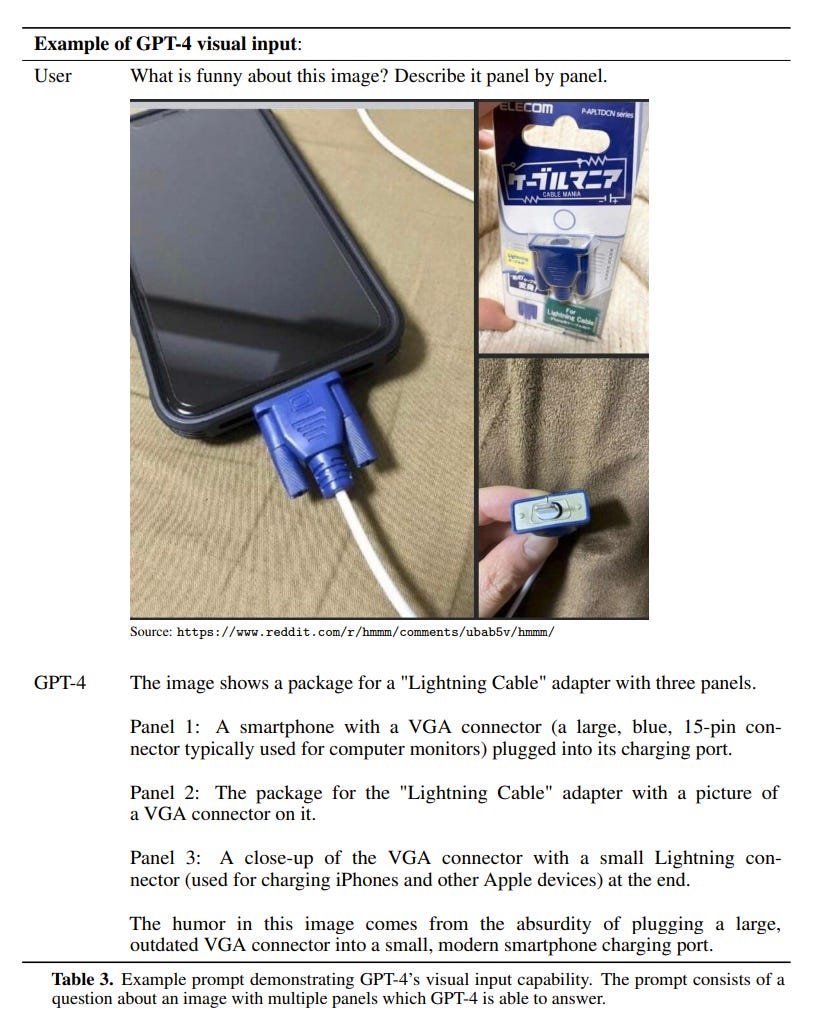

GPT-4 can accept images as inputs and generate captions, classifications, and analyses.

GPT-4 is capable of handling over 25,000 words of text, allowing for use cases like long form content creation, extended conversations, and document search and analysis.

“Given both the competitive landscape and the safety implications of large-scale models like GPT-4, this report contains no further details about the architecture (including model size), hardware, training compute, dataset construction, training method, or similar.”

Blog: https://openai.com/product/gpt-4

Technical report: https://cdn.openai.com/papers/gpt-4.pdf

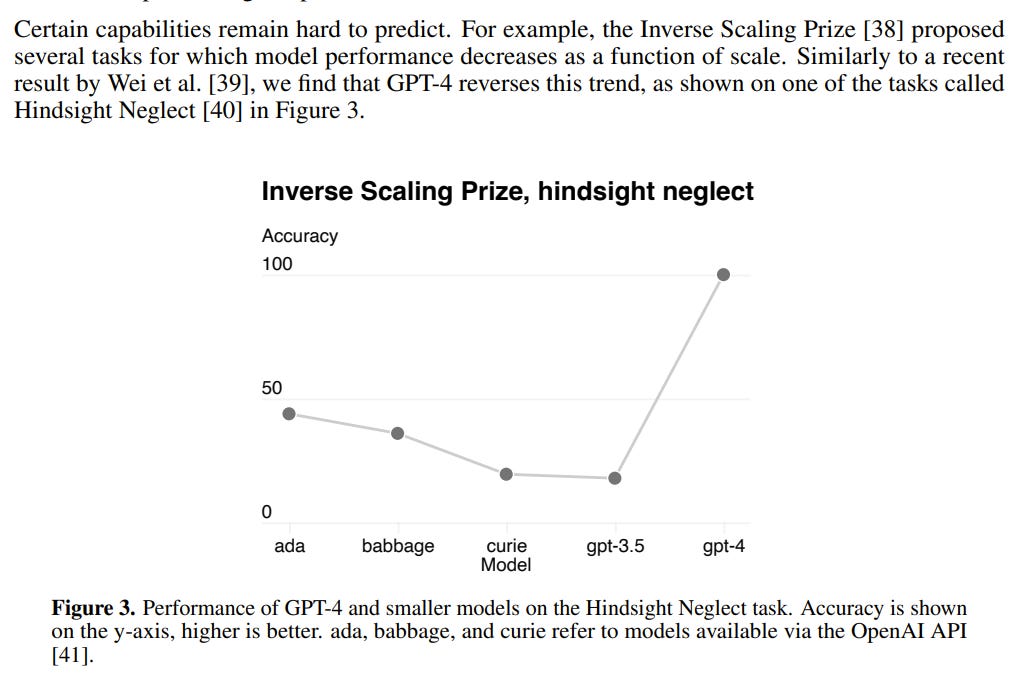

Skimming the GPT-4 paper, this is the scariest bit I came across:

I expected this, but it's good (bad?) seeing another empirical confirmation.

Nobody knows what GPT-N will be capable of. At some scale, we might witness the abrupt emergence of strong superintelligence.

More:

An example of a useful real-world application of GPT-4: A Virtual Volunteer Tool for People who are Blind or Have Low Vision, Powered by OpenAI’s GPT-4 https://www.bemyeyes.com/blog/introducing-be-my-eyes-virtual-volunteer

Khan Academy is using GPT-4 as a tutor for learners and an assistant for teachers. https://www.khanacademy.org/khan-labs

GPT can write Quines now (GPT-4) https://www.lesswrong.com/posts/ux93sLHcqmBfsRTvg/gpt-can-write-quines-now-gpt-4
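
For readers unfamiliar with the term, a quine is a program that prints its own source code. Below is a minimal sketch in Python of the classic `%r` formatting trick. It is not taken from the linked post, and the names `QUINE` and `run_and_capture` are made up for illustration. The quine is held in a string so the script can check that running it reproduces it exactly.

```python
import contextlib
import io

# A classic two-line Python quine, stored as text so we can verify it.
# Illustrative only; not taken from the linked post.
QUINE = "s = 's = %r\\nprint(s %% s)'\nprint(s % s)\n"


def run_and_capture(source: str) -> str:
    """Execute `source` in a fresh namespace and return what it printed."""
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        exec(source, {})
    return buf.getvalue()


output = run_and_capture(QUINE)
# A quine's output is identical to its own source.
is_quine = output == QUINE
print(is_quine)
```

The trick is that `%r` inserts the `repr` of the string into itself, which reproduces the quotes and escapes needed to rebuild the first line.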

“GPT-4 coming up with novel unpatented compounds, sending emails to get custom synthesis and overall showcasing pharmacological reasoning + agency is terrifying in terms of risk of engineered pandemics” https://twitter.com/MichaelTrazzi/status/1635751847767207938

I wrote this in 2020 while most people were distracted. It was then that I realized the importance of the scaling hypothesis. If GPT-5 turns out to be as powerful as I suspect, everyone, including state actors, will be racing full speed toward GPT-6 and artificial superintelligence.

Stanford Alpaca, a new LLM, was fine-tuned on 8 Nvidia A100 GPUs for 3 hours ($100) using training data generated with OpenAI's GPT-3 ($500). The model can run on a single GPU or on a CPU, such as Apple Silicon or a Raspberry Pi, and offers performance similar to GPT-3 at a total cost of $600. https://crfm.stanford.edu/2023/03/13/alpaca.html

“In December, we discussed Med-PaLM, at that time a SOTA medical LLM that achieved a 67.6% score on the USMLE MedQA evaluation (passing is 60%). Today, we're describing Med-PaLM2, which improves on this by +18% with a score of 85.4% ("expert performance")!” https://blog.google/technology/health/ai-llm-medpalm-research-thecheckup/

Against LLM Reductionism: 1. LLMs Can Learn General Algorithms 2. LLMs Can Contain and Use Models of the World 3. LLMs Aren't Next-Token Predictors, They Are Next-Token-Prediction Artefacts https://www.lesswrong.com/posts/PwfwZ2LeoLC4FXyDA/against-llm-reductionism

Rewarding Chatbots for Real-World Engagement with Millions of Users — “…a more than 30% increase in user retention for a GPT-J 6B model.” https://arxiv.org/abs/2303.06135

Researchers unveil new AI-driven method for improving additive manufacturing https://www.anl.gov/article/researchers-unveil-new-aidriven-method-for-improving-additive-manufacturing

The ChatGPT list of lists: A collection of 3000+ prompts, examples, use-cases, tools, APIs, extensions, fails and other resources. https://medium.com/mlearning-ai/the-chatgpt-list-of-lists-a-collection-of-1500-useful-mind-blowing-and-strange-use-cases-8b14c35eb

This geothermal startup showed its wells can be used like a giant underground battery [MIT Technology Review] https://archive.is/py5dX

Impact of the Tambora volcanic eruption of 1815 on islands and relevance to future sunlight-blocking catastrophes https://www.nature.com/articles/s41598-023-30729-2

Ejecting 10 million tons of lunar regolith per year toward the Earth-Sun Lagrange point L1 would cast enough of a shadow to cut solar heating by the equivalent of about 6 days of sunlight per year. The average power cost for the mass driver is 19 GW. https://journals.plos.org/climate/article?id=10.1371/journal.pclm.0000133

New Results From NASA’s DART Mission Confirm We Could Deflect Deadly Asteroids https://singularityhub.com/2023/03/06/new-results-from-nasas-dart-mission-confirm-we-could-deflect-deadly-asteroids/

"Local food is often no better than food shipped from continents away. Organic food often has a higher carbon footprint... A plastic bag seems a lot worse than a paper one. In fact, it’s the opposite... What is good for the environment often doesn’t line up with our intuitions." https://worksinprogress.substack.com/p/notes-on-progress-an-environmentalist

Could we make creating microelectronics as easy as printing a document? What if we made transistors the same way we make chemicals today? https://spec.tech/library/nanomodular-electronics-roadmap

An arthritis drug mimics the anti-aging benefits of youthful blood transfusions https://mindblog.dericbownds.net/2023/03/an-arthritis-drug-mimicks-anti-aging.html