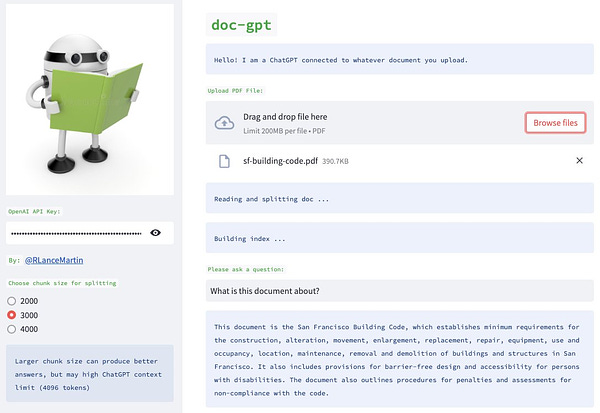

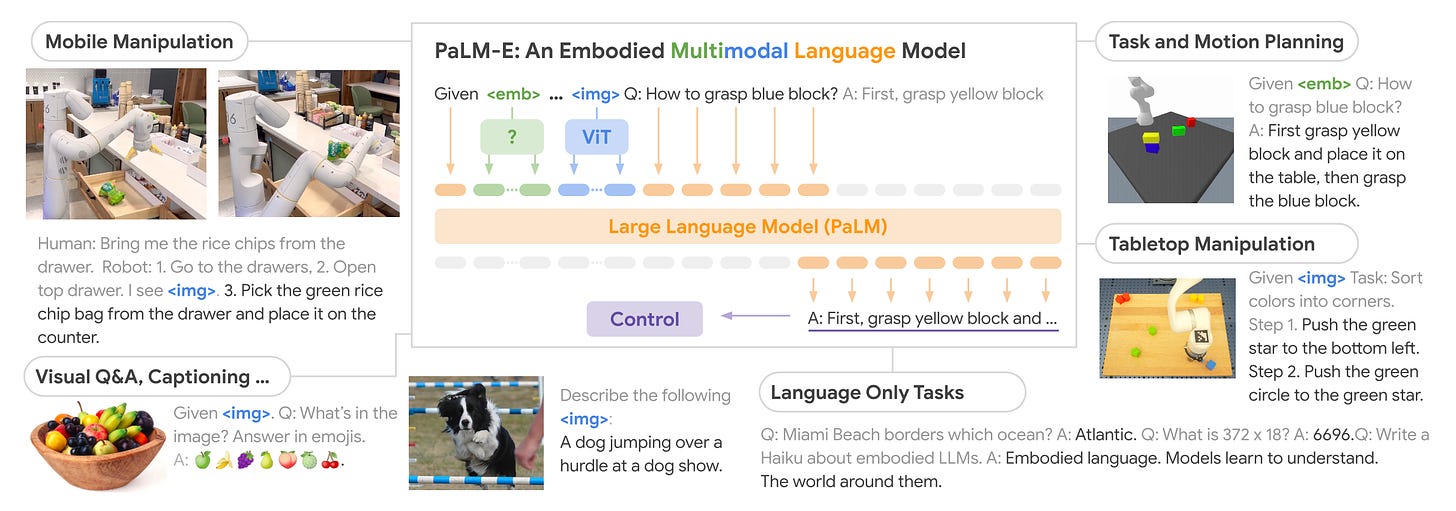

PaLM-E: an embodied multimodal language model, a general-purpose visual-language generalist spanning robotics, vision, and language.

Project page: https://palm-e.github.io/

PaLM-E enables robot planning directly from pixels, all in a single model trained end-to-end.

PaLM-E exhibits positive transfer: training it simultaneously across several domains, including internet-scale general vision-language tasks, yields significantly higher performance than single-task robot models.

A notable trend with model scale: the larger the language model, the more it maintains its language capabilities when training on visual-language and robotics tasks.

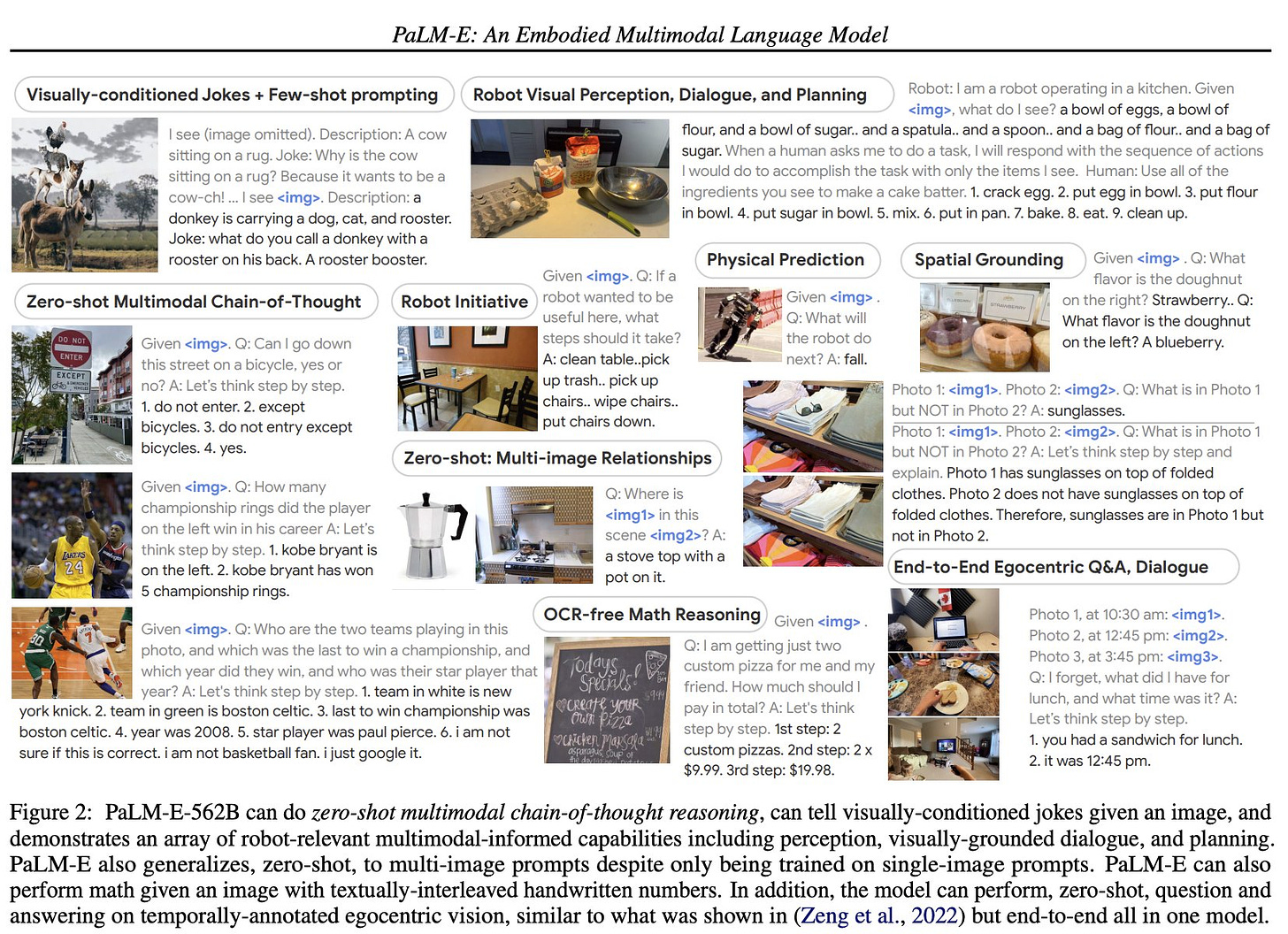

PaLM-E shows emergent capabilities such as multimodal chain-of-thought reasoning and multi-image inference, despite being trained only on single-image prompts.

PaLM-E directly incorporates real-world continuous sensor modalities into a language model, thereby establishing the link between words and percepts.

No one wants to focus autistically on this one topic. But artificial intelligence is *by far* the most important issue.

Sure, another AI winter cannot be ruled out. But if progress continues at the current rate for just a decade, the implications will be transformative.

More links:

“In this talk we discuss how foundation models are beginning to validate a hypothesis formed over 70 years ago: statistical models which better compress their source data resultantly learn more fundamental and general capabilities from it. We start by covering some fundamentals of compression, and then describe how larger language models, spanning into the hundreds of billions of parameters, are actually state-of-the-art lossless compressors. We discuss some of the emergent capabilities and persistent limitations we may expect along the path to optimal compression.” https://www.youtube.com/watch?v=dO4TPJkeaaU
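
The prediction-compression link the talk refers to can be made concrete with the standard Shannon/arithmetic-coding argument: if a model assigns probability p to the next symbol, an arithmetic coder can encode that symbol in about -log2 p bits, so a better predictor means a shorter lossless encoding. The sketch below is illustrative only and not from the talk; the adaptive count-based context models (`uniform_bits`, `adaptive_context_bits`, both hypothetical names) are tiny stand-ins for a language model, and the code computes ideal code lengths rather than running an actual coder.

```python
import math
from collections import Counter


def uniform_bits(text: str, alphabet: str) -> float:
    """Code length when every symbol gets probability 1/len(alphabet)."""
    return len(text) * math.log2(len(alphabet))


def adaptive_context_bits(text: str, alphabet: str, order: int) -> float:
    """Ideal code length (bits) under an adaptive order-k context model.

    The previous `order` characters select a table of Laplace-smoothed
    counts; each symbol costs -log2 p bits, then its count is incremented.
    A decoder can replay the same updates, so an arithmetic coder driven
    by this model would be lossless.
    """
    tables: dict[str, Counter] = {}
    bits = 0.0
    for i, ch in enumerate(text):
        context = text[max(0, i - order):i]  # "" when order == 0
        table = tables.setdefault(context, Counter({c: 1 for c in alphabet}))
        p = table[ch] / sum(table.values())
        bits -= math.log2(p)
        table[ch] += 1
    return bits


if __name__ == "__main__":
    sample = "ab" * 50
    print("uniform:", uniform_bits(sample, "ab"))
    for k in (0, 1):
        print(f"order-{k}:", round(adaptive_context_bits(sample, "ab", k), 2))
```

On the alternating sample, the order-1 model needs about 12 bits versus 100 for the uniform model, because one character of context predicts the next almost perfectly; the order-0 model does slightly worse than uniform, since symbol frequencies alone carry no information here.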

Deep learning pioneer Yoshua Bengio looks forward to neural nets that can reason: “I’m excited by generative flow networks, or GFlowNets, an approach to training deep nets that my group started about a year ago. This idea is inspired by the way humans reason through a sequence of steps, adding a new piece of relevant information at each step.” https://www.deeplearning.ai/the-batch/yoshua-bengio-wants-neural-nets-that-reason/

“Great example of why you shouldn't read too much into analogies like "bullshit generator" and "blurry JPEG of the web". The best way to predict the next move in a sequence of chess moves is… to build an internal model of chess rules and strategy, which Sydney seems to have done…It's not surprising that chess-playing ability emerges in sufficiently large-scale LLMs (we've seen emergent abilities enough times). What's very surprising is that Bing's LLM is apparently already at that scale. ChatGPT couldn't play chess at all — couldn't consistently make legal moves, couldn't solve mate in 1 in a K+Q vs K position. Sydney, on the other hand, has not only learnt the rules, but can play reasonably good chess! Far better than a human who has just learned the rules.” https://threadreaderapp.com/thread/1631491972685869056.html

Predictive Coding has been Unified with Backpropagation: "This paper permanently fuses artificial intelligence and neuroscience into a single mathematical field. This paper opens up possibilities for neuromorphic computing hardware." [published in 2021] https://www.lesswrong.com/posts/JZZENevaLzLLeC3zn/predictive-coding-has-been-unified-with-backpropagation

“So apparently OpenAI at one point trained and ran a model with sign-flipped reward due to a coding bug…This bug was remarkable since the result was not gibberish but maximally bad output.” https://threadreaderapp.com/thread/1629656909417701378.html
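
Why a flipped sign produces coherent but maximally bad output rather than gibberish can be seen in a toy setting: the optimizer still works perfectly, it just climbs the negated objective. The sketch below is a hypothetical illustration, not OpenAI's setup; it trains a softmax policy over three fixed candidate outputs with the exact policy gradient, and the outputs, reward values, and `reward_sign` parameter are all made up for the example.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_policy(rewards: list[float], reward_sign: float = 1.0,
                 steps: int = 2000, lr: float = 0.5) -> list[float]:
    """Gradient ascent on expected reward for a softmax policy.

    reward_sign=-1.0 simulates the hypothetical bug: the training loop is
    unchanged, but it now maximizes the negated reward.
    """
    logits = [0.0] * len(rewards)
    signed = [reward_sign * r for r in rewards]
    for _ in range(steps):
        probs = softmax(logits)
        expected = sum(p * r for p, r in zip(probs, signed))
        # Exact gradient of E[r] w.r.t. logit i is p_i * (r_i - E[r]).
        logits = [z + lr * p * (r - expected)
                  for z, p, r in zip(logits, probs, signed)]
    return softmax(logits)


if __name__ == "__main__":
    outputs = ["helpful", "bland", "harmful"]  # hypothetical candidates
    rewards = [1.0, 0.2, -1.0]                 # hypothetical reward scores
    for sign in (1.0, -1.0):
        probs = train_policy(rewards, reward_sign=sign)
        best = max(range(len(probs)), key=probs.__getitem__)
        print(f"sign={sign:+.0f}: prefers {outputs[best]!r} (p={probs[best]:.3f})")
```

With the correct sign the policy concentrates on the highest-reward output; with the flipped sign it concentrates just as decisively on the lowest-reward one, which is the "maximally bad" behavior described in the quote.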

“The myth that AI “neural networks” cannot be understood obstructs ordinary scientific and engineering investigation. This is extremely convenient for both tech people and powerful decision makers.” https://threadreaderapp.com/thread/1631674193287716866.html

The reverse Flynn effect, a decline in IQ scores after decades of gains, is now being observed in the USA as well. https://www.sciencedirect.com/science/article/pii/S0160289623000156

“How did Russian cosmonauts know where they were? The Globus INK (1967) showed the position of their Soyuz spacecraft on a rotating globe. It is an analog computer built from tiny gears. I reverse-engineered the wiring (which inconveniently had been cut) and we powered it up.” https://www.righto.com/2023/01/inside-globus-ink-mechanical-navigation.html

Proposed adaptive cognitive biases: “The notion that human judgment is fundamentally flawed appears to have been flawed itself... Some genuine cognitive biases might be functional features designed by the wisdom of natural selection.” https://doi.org/10.1002/9781119125563.evpsych241

Ingenious Technique Could Make Moon Farming Possible https://gizmodo.com/ingenious-technique-could-make-moon-farming-possible-1850145392

How Well Personality Traits Predict Social Outcomes? It’s Complicated… https://humanvarieties.org/2023/02/28/how-well-personality-traits-predict-social-outcomes-well-its-complicated/

“GOODHART'S LAW IN EVERYTHING: I'm starting a MEGATHREAD that I will be updating with interesting examples illustrating Goodhart's law.” https://threadreaderapp.com/thread/1631069116147675137.html

> So apparently OpenAI at one point trained and ran a model with sign-flipped reward due to a coding bug…This bug was remarkable since the result was not gibberish but maximally bad output

We're SO dead.

Great link set. I'm glad to see predictive processing getting some love.