Links for 2023-02-23

ChatGPT for Robotics: Design Principles and Model Abilities https://www.microsoft.com/en-us/research/group/autonomous-systems-group-robotics/articles/chatgpt-for-robotics/ (watch the video: https://www.youtube.com/watch?v=NYd0QcZcS6Q)

Tony Wu talked about how Google and OpenAI’s large language models could generate informal proofs for high school to IMO problems, with a separate autoformalizer then converting them into formally verifiable proofs (or vice versa) with reasonable success rates at the high school level. https://www.youtube.com/watch?v=_pqJYnQua58

Scott Aaronson on LLMs: “Mostly my reaction has been: how can anyone stop being fascinated for long enough to be angry? It’s like ten thousand science-fiction stories, but also not quite like any of them…For a million years, there’s been one type of entity on earth capable of intelligent conversation: primates of genus Homo, of which only one species remains...But now there’s a second type of conversing entity. An alien has awoken—admittedly, an alien of our own fashioning, a golem, more the embodied spirit of all the words on the Internet than a coherent self with independent goals. How could our eyes not pop with eagerness to learn everything the alien has to teach?…The science we could learn from a GPT-7 or GPT-8 that continued along the capability curve we’ve come to expect from GPT-1, -2, and -3. Holy mackerel.” https://scottaaronson.blog/?p=7042

“I set out to write up the AI events of the past week and, well, things escalated quickly.” https://www.lesswrong.com/posts/WkchhorbLsSMbLacZ/ai-1-sydney-and-bing

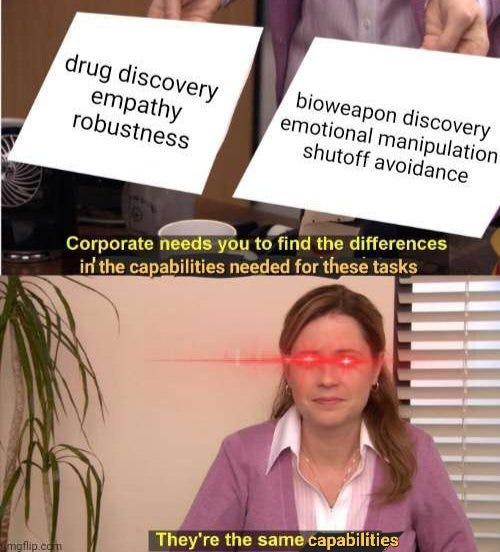

“I’ll describe two specific emergent capabilities that I’m particularly worried about: deception (fooling human supervisors rather than doing the intended task), and optimization (choosing from a diverse space of actions based on their long-term consequences).” https://www.lesswrong.com/posts/aEjckcqHZZny9L2zy/emergent-deception-and-emergent-optimization

“For the First Time, Genetically Modified Trees Have Been Planted in a US Forest…genetically engineered to grow wood at turbocharged rates while slurping up carbon dioxide from the air.” [The New York Times] https://archive.is/TQ5ro

Third patient free of HIV after receiving virus-resistant cells https://www.nature.com/articles/d41586-023-00479-2

“…the night sky grew about 10 percent brighter, on average, every year from 2011 to 2022.” https://www.sciencenews.org/article/light-pollution-night-sky-bright-citizen-science
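To get a feel for what roughly 10 percent per year compounds to, here is a minimal back-of-the-envelope sketch. It assumes the quoted average rate applies uniformly to every year from 2011 to 2022, which is a simplification; the function and variable names are illustrative and do not come from the article.

```python
import math


def compound_factor(rate: float, years: int) -> float:
    """Total growth factor after `years` of constant annual growth `rate`."""
    return (1.0 + rate) ** years


def doubling_time(rate: float) -> float:
    """Years needed to double at a constant annual growth `rate`."""
    return math.log(2.0) / math.log(1.0 + rate)


# Assumption: a flat 10% per year, the average figure quoted above.
annual_rate = 0.10
years = 2022 - 2011  # 11 yearly steps

total_factor = compound_factor(annual_rate, years)
years_to_double = doubling_time(annual_rate)

print(f"Brightness factor after {years} years: {total_factor:.2f}x")
print(f"Doubling time at {annual_rate:.0%} per year: {years_to_double:.1f} years")
```

Under that assumption the sky ends the period a bit under three times as bright as it started, with brightness doubling roughly every seven years.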

Berkeley computer science degrees have increased 1100% in a decade https://eighteenthelephant.com/2023/02/12/10-double-the-number-of-computer-science-majors-20-goto-10/

Google’s Fully Homomorphic Encryption Compiler — A Primer https://jeremykun.com/2023/02/13/googles-fully-homomorphic-encryption-compiler-a-primer/

Majority of criminals say we don't do enough to stop them. https://lefineder.substack.com/p/criminals-views-crime

“It was the fastest, most efficient genocide in history. If it had gone as long as the holocaust, it would have killed 12,000,000+ people. Most of the perpetrators were completely ordinary people. Farmers. The vast majority of the killing was done with machetes. Intimate. Most of the victims knew their killers personally, sharing communities with them for years, friends even...Most of the killers were professing, practicing Christians (majority Catholic) & maintained after the killings. They killed on Sundays.” https://threadreaderapp.com/thread/1625974398967578646.html

Mixed-race adults have poor mental health https://twitter.com/jonahdavids1/status/1625166030086586369

An adenovirus that makes you fat? https://twitter.com/UrsulaV/status/1624866711617867780

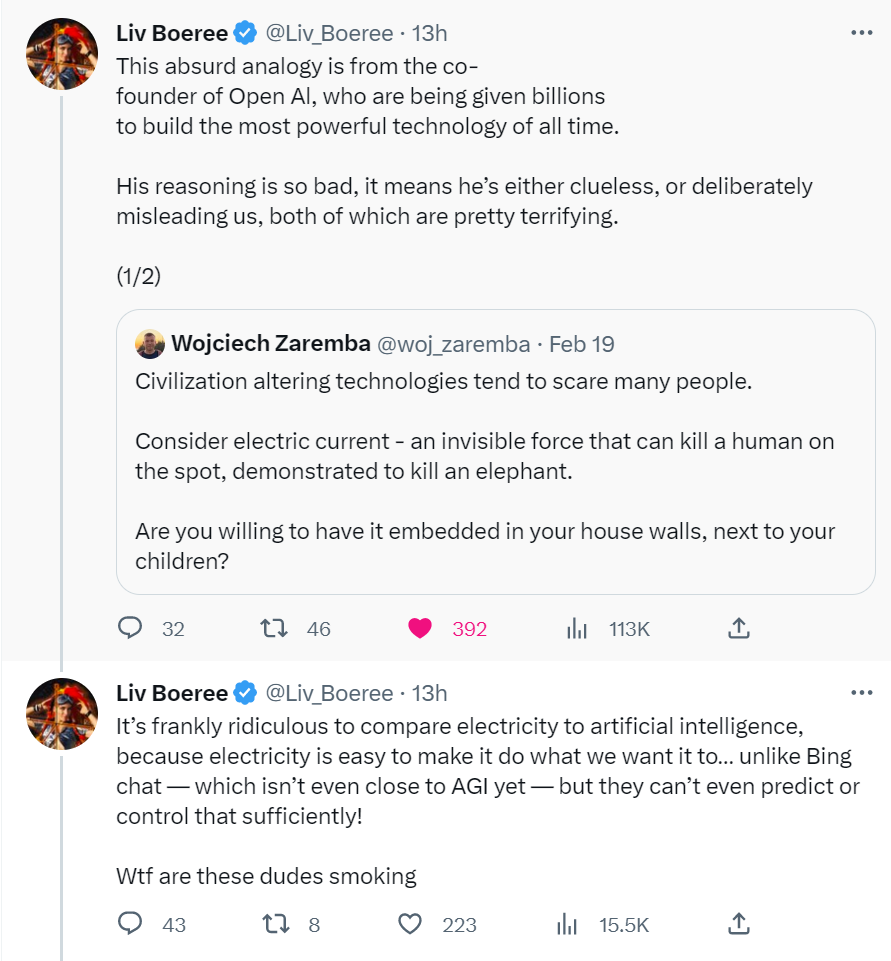

More than a decade ago, I also criticized Yudkowsky et al. and their claims about how artificial general intelligence might end the world. But at least I tried to come up with some actual arguments. Now that these concerns seem much more grounded in reality, the criticism mostly consists of “haha” reactions.

It is true that many experts disagree with Yudkowsky. But most of them never engage with his actual arguments. Others, like Steven Pinker, don't even seem to know or understand what is being argued. Their criticisms are so bad that even I would have been embarrassed to make them in 2010.

Of course, Yudkowsky could be wrong, and his concerns should be scrutinized and criticized. But if you don't at least know what a mesa-optimizer is and that in 2022 Flan-PaLM surpassed SotA forecasts for 2023 and 2024, you might want to keep quiet.

I can already afford almost everything I want (e.g., books), and the few things I want but cannot afford would cost many billions or even trillions of dollars. I'm also not nearly as confident about doom and short timelines as other people. So the bottom line is that it wouldn't make sense for me to risk going bankrupt in a few decades in order to afford some luxuries I don't care about.

One behavioral change I have made is to focus less on practical things and more on things I find interesting.

People are once again confused about winning. From the point of view of rationality, it is wrong to give in to value drift. Here is something I wrote some time ago: