Links for 2023-01-17

Demis Hassabis, DeepMind CEO: “I would advocate not moving fast and breaking things...When it comes to very powerful technologies—and obviously AI is going to be one of the most powerful ever—we need to be careful” https://time.com/6246119/demis-hassabis-deepmind-interview/

Emergent collective intelligence from massive-agent cooperation and competition https://arxiv.org/abs/2301.01609

Emergent Analogical Reasoning in Large Language Models: GPT-3.5 IQ testing using Raven’s Progressive Matrices https://arxiv.org/abs/2212.09196

PromptArray: A Prompting Language for Neural Text Generators https://github.com/jeffbinder/promptarray

“T. rex had baboon-like numbers of brain neurons, which means it had what it takes to build tools, solve problems, and live up to 40 years, enough to build a culture! Paper is just out in J Comp Neurol. Reality was actually MORE terrifying than the movies!” https://twitter.com/suzanahh/status/1611119537109479424

“How Should We Think About Our Different Styles of Thinking? Some people say their thought takes place in images, some in words. But our mental processes are more mysterious than we realize.” [The New Yorker] https://archive.is/3oBZQ

People who think of themselves as less attractive are more willing to wear surgical masks. https://www.frontiersin.org/articles/10.3389/fpsyg.2023.1084941/abstract

Iron deficiencies are very bad and you should treat them https://www.lesswrong.com/posts/6frs5xTkeLc9vZSRN/iron-deficiencies-are-very-bad-and-you-should-treat-them

Fuel from the sky: Cheap hydrocarbons from CO2 direct air capture and sunlight. https://terraformindustries.wordpress.com/2023/01/09/terraform-industries-whitepaper-2-0/

“We need to treat growth as a moral good, and the obstacles to growth as a moral outrage” https://capx.co/the-morality-of-growth/

How Random is Crypto Wealth? A Statistical Analysis. https://www.statsignificant.com/p/how-random-is-crypto-wealth-a-statistical

Ukraine's Bakhmut: Inside the frontline city • FRANCE 24 https://www.youtube.com/watch?v=jO94rW4tHNs

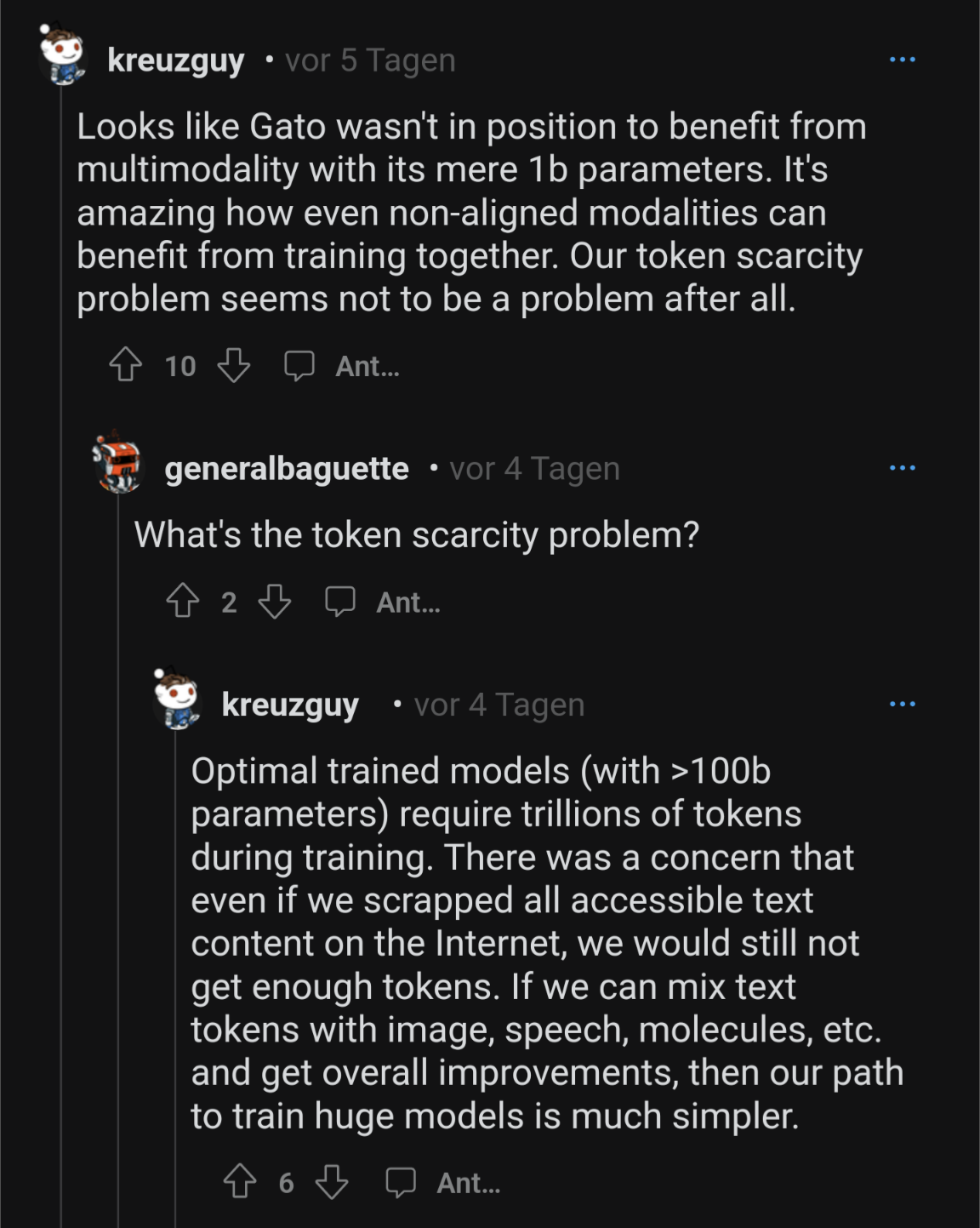

Paper: "Scaling Laws for Generative Mixed-Modal Language Models", Aghajanyan et al 2023 {FB} (why multimodal models have disappointed thus far: model and data sizes too small to reach the scale where the modalities synergize) https://arxiv.org/abs/2301.03728

Reddit thread: https://www.reddit.com/r/mlscaling/comments/109cvmx/scaling_laws_for_generative_mixedmodal_language/

For the Gato reference, see: https://www.deepmind.com/publications/a-generalist-agent