Links for 2022-12-17

A new project to help improve the scientific study of psychology: “when new psychology and behavioral science papers come out in the two most prestigious general science journals (Nature and Science), we will replicate all of them (within budgetary and technology constraints)” https://threadreaderapp.com/thread/1600869499653468162.html

“Thought-provocative new paper from Geoffrey Hinton: what if we could replace backpropagation with something better?” https://threadreaderapp.com/thread/1599755684941557761.html

OpenAI's new embedding model is significantly more capable at language processing and code tasks, outperforming their previous most capable embedding model while being 99.8% cheaper. https://openai.com/blog/new-and-improved-embedding-model/

“With Constitutional AI, we need only a few dozen principles and examples to train less harmful language assistants. With prior techniques, we needed tens of thousands of human feedback labels.” https://threadreaderapp.com/thread/1603791161419698181.html

“Announcing data2vec 2.0, a new general self-supervised algorithm built by Meta AI for speech, vision & text that can train models 16x faster than the most popular existing algorithm for images while achieving the same accuracy.” https://ai.facebook.com/blog/ai-self-supervised-learning-data2vec/

A diffusion model for protein design https://www.bakerlab.org/2022/11/30/diffusion-model-for-protein-design/

“Introducing Bird SQL, a Twitter search interface that is powered by Perplexity’s structured search engine. It uses OpenAI Codex to translate natural language into SQL, giving everyone the ability to navigate large datasets like Twitter.” https://twitter.com/perplexity_ai/status/1603441221753372673

“...it turns out that humans are only working with roughly a few thousand maybe ten thousand different primitive concepts...we know that the space in which humans are kind of trying to describe the world or make sense of the world is a very finite dimensional space...the AIs, when they are trained on human language, they naturally develop this kind of structure so you can imagine we'll have computers that are able to operate in a million-dimensional conceptual space...” https://youtu.be/C9vHv_7rbeU?t=1495

Language models show human-like content effects on reasoning https://arxiv.org/abs/2207.07051

“PaLM's dataset is 78% Eng, only 0.005% Swahili. Can GPT/PaLM be used to do homework problems written in Swahili or Thai? Yes, for some reasoning tasks at least.” https://twitter.com/OwainEvans_UK/status/1603008968045244416

Paper-thin solar cell can turn any surface into a power source https://news.mit.edu/2022/ultrathin-solar-cells-1209

Economics research is mostly untrustworthy because economists cheat so much with the statistics https://kirkegaard.substack.com/p/economics-research-is-mostly-untrustworthy

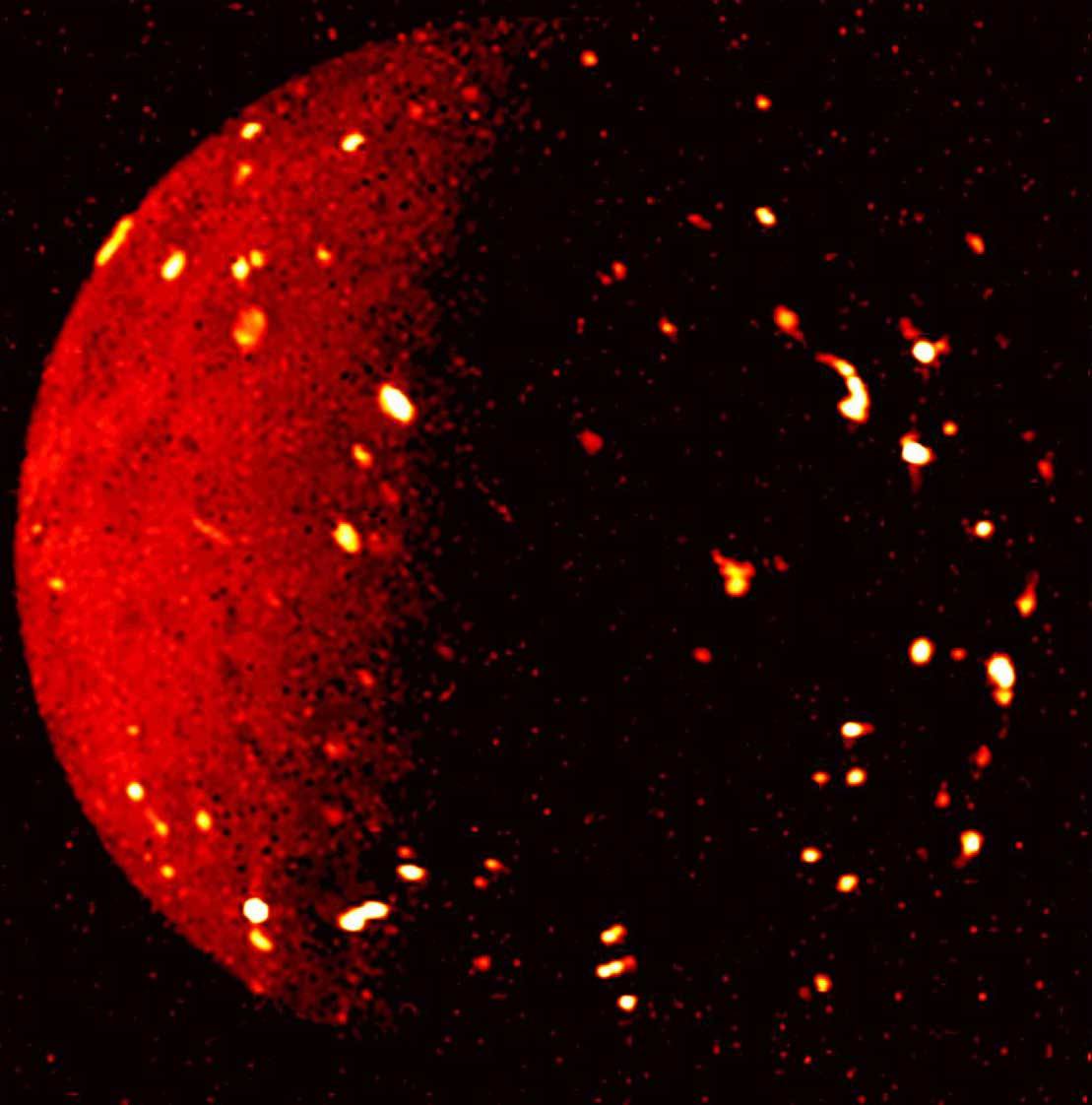

This new infrared image of Jupiter’s moon Io from the Juno spacecraft shows volcanoes, lava flows, and lava lakes glowing with heat radiation:

Source: https://www.jpl.nasa.gov/news/nasas-juno-exploring-jovian-moons-during-extended-mission