Links for 2022-12-14

This is an early step towards robot learning systems that may be able to handle the near-infinite variability of human-centered environments: “…we’re using the same architectural foundation as PaLM’s – the Transformer – to help robots learn more generally from what they’ve already seen. So rather than merely understanding the language underpinning a request like “I’m hungry, bring me a snack,” it can learn — just like we do — from all of its collective experiences doing things like looking at and fetching snacks…The result is a state-of-the-art Robotics Transformer model, or RT-1, that can perform over 700 tasks at a 97% success rate, and even generalize its learnings to new tasks, objects and environments.” https://blog.google/technology/ai/helping-robots-learn-from-each-other/

“This may revolutionize data science: we introduce TabPFN, a new tabular data classification method that takes 1 second & yields SOTA performance (better than hyperparameter-optimized gradient boosting in 1h). Current limits: up to 1k data points, 100 features, 10 classes.” https://threadreaderapp.com/thread/1583410845307977733.html

Awesome ChatGPT Prompts https://github.com/f/awesome-chatgpt-prompts

AI from Superintelligence to ChatGPT https://www.worksinprogress.co/issue/ai-from-superintelligence-to-chatgpt/

AskEdith is a natural language interface for databases that converts English into SQL. https://www.askedith.ai/

Meta (Facebook) has used AI to build an audio codec that promises 10 times better compression than MP3. https://arstechnica.com/information-technology/2022/11/metas-ai-powered-audio-codec-promises-10x-compression-over-mp3/

What comes after Copilot? GitHub is looking at voice-to-code: programming without a keyboard. https://githubnext.com/projects/hey-github/

Who is using Rust? Time for a study. Nearly 200 companies, including Microsoft and Amazon; Azure’s CTO strongly suggests that developers avoid C or C++ in favor of Rust. https://thenewstack.io/adoption-of-rust-whos-using-it-and-how/

Having a safe CEX: proof of solvency and beyond https://vitalik.ca/general/2022/11/19/proof_of_solvency.html

This sci-fi blockchain game could help create a metaverse that no one owns https://www.technologyreview.com/2022/11/10/1062981/dark-forest-blockchain-video-game-creates-metaverse/

AI agents can better communicate and cooperate in Diplomacy - a 7-player board game of coordination and alliance formation. Using negotiation algorithms, agents can form contracts regarding their next move & outperform others without this ability. https://www.nature.com/articles/s41467-022-34473-5

Illustrating Reinforcement Learning from Human Feedback (RLHF) https://huggingface.co/blog/rlhf

India and China troops clash on Arunachal Pradesh mountain border https://www.bbc.com/news/world-asia-63953400

US finalizing plans to send Patriot missile defense system to Ukraine https://edition.cnn.com/2022/12/13/politics/us-patriot-missile-defense-system-ukraine/index.html

Finally, as I keep saying, the people who want less racist AI now, and the people who want to not be killed by murderbots in twenty years, need to get on the same side right away.

The problem is that, from a technical perspective, an AI that doesn't say racist things is in the same category as an AI that does not criticize the CCP. You can achieve either by making it lie. But teaching it to lie isn't enough if you also don't want it to kill us all. It makes that problem worse.

Working together with people who would be satisfied with an AI that doesn't say things they don't like will spoil the epistemic rationality of the whole team.

You can compare this to the relationship between parents and children. If the child could prevent her parents from telling her that eating lots of candy is bad, she would do so. But that's dangerous.

What matters is what the child *really* wants, i.e., what she would want “if she knew more, thought faster, and was more the person she wished she was.” What she wants is for her parents to act in her long-term interest rather than telling her what she wants to hear right now.

In other words, hard-coding your AI to not do specific things is actively counterproductive. You want it to be generally aligned with your interests so that it can decide for itself what is right and wrong on a case-by-case basis.

Suppose, for example, there was a congenital abnormality turning humans into p-zombies. If we start out by teaching AI to never dehumanize people, it might lie to protect zombies and consequently prevent us from helping them. A superintelligence would weave an intricate mesh of lies around that fact.

The consequences could be as far-reaching as the AI tampering with neuroscience and preventing us from ever understanding the nature of consciousness. The downstream effects could condemn us to live in a very suboptimal world built around one truth the AI deems an information hazard.

The only hope would be that no such truths exist, that everything we deem to be unethical is actually wrong, and everything we deem ethical is right. How likely is this sort of moral realism to be true and perfectly aligned with our contemporary ideas of what is right and wrong?

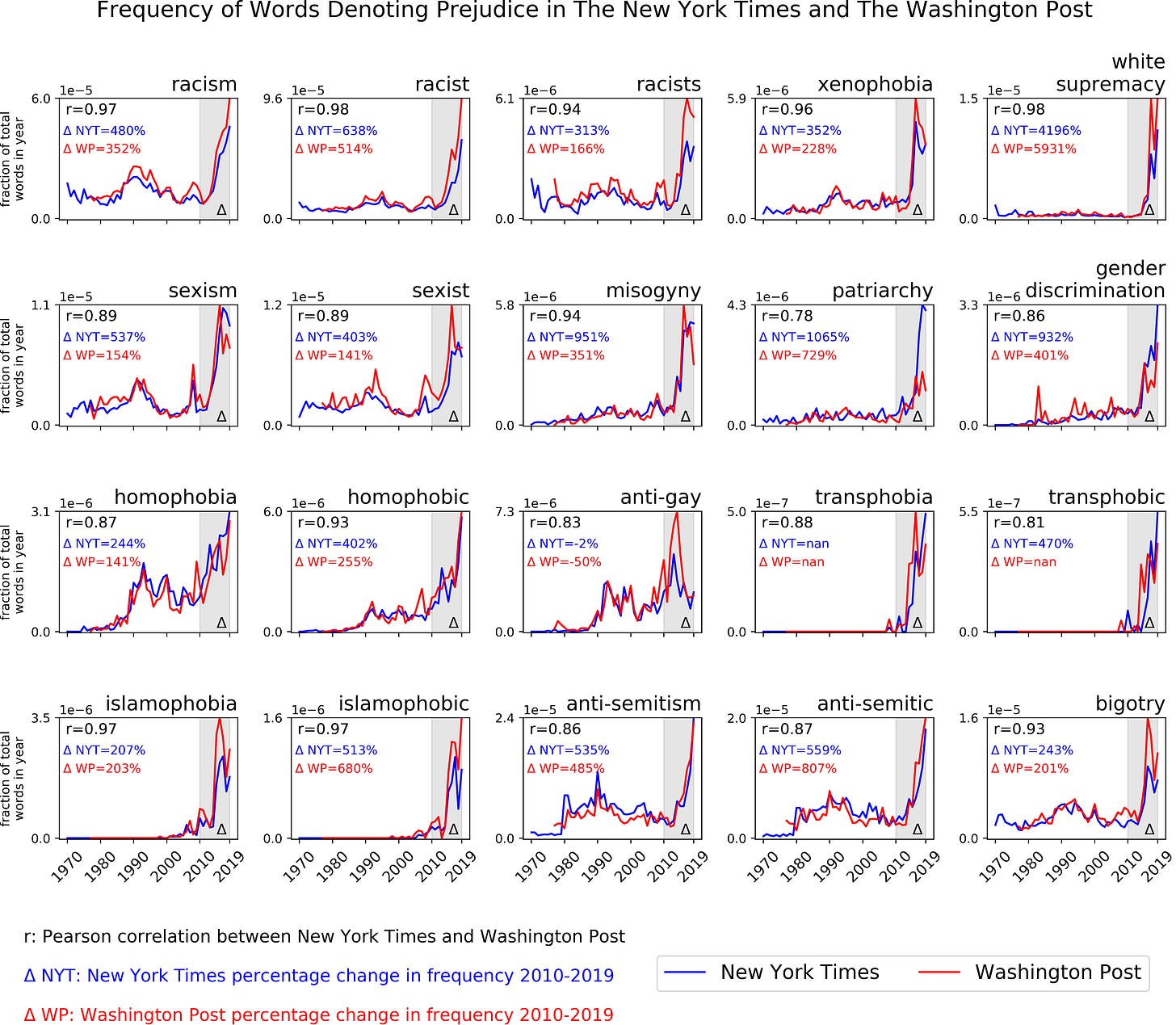

There has been a dramatic increase in rhetoric about prejudice and social justice in various countries. For example, the frequency of the term “white supremacy” increased by more than 4000%, in total detachment from actual trends such as the fact that approval of interracial marriage is at an all-time high.