Links for 2022-12-12

A fusion energy breakthrough may have been achieved. Q-total is still less than 1 [no net output of energy into the grid], but the path to a power plant seems to be clear. “Q is what matters for these experiments b/c ignition is a ~exponential feedback loop, and Q-total differs from Q by an O(1) prefactor. Once you achieve ignition (Q > 1), it will quickly scale much larger, and Q-total comes along for the ride.” Financial Times report: https://archive.vn/fny0J

Logical induction for software engineers https://www.lesswrong.com/posts/jtMXj24Masrnq3SpS/logical-induction-for-software-engineers

A general recipe for quantifying someone’s personal probability for a proposition from that person’s betting rate. https://jonathanweisberg.org/vip/beliefs-betting-rates.html
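
A minimal sketch of the idea, assuming the recipe boils down to the usual formula: betting rate = potential loss / (potential loss + potential gain). The function name `betting_rate` and the dollar amounts are my own illustration, not taken from the linked chapter.

```python
from fractions import Fraction


def betting_rate(potential_loss, potential_gain):
    """Share of the total stake the bettor is willing to put at risk.

    Assumed formula: potential_loss / (potential_loss + potential_gain).
    The result is read as the bettor's personal probability that the
    proposition is true.
    """
    if potential_loss < 0 or potential_gain < 0:
        raise ValueError("amounts must be non-negative")
    total = Fraction(potential_loss) + Fraction(potential_gain)
    if total == 0:
        raise ValueError("at least one amount must be positive")
    return Fraction(potential_loss) / total


# Hypothetical bet: someone risks $9 to win $1 that it will rain tomorrow.
print(betting_rate(9, 1))  # 9/10
```

On this reading, a person who will risk $9 to win $1 acts as if the proposition is about 90% likely, while an even-money bet corresponds to 50%.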

“That the Mahābhārata is concomitant w/the Iliad can be seen through narrative parallels. Any rapprochement need not imply a direct derivation of one from the other, but it may point to a common kernel between both. One such association can be seen between Agamemnon & Duryodhana” https://threadreaderapp.com/thread/1599091133996363776.html

Navigating to Objects in the Real World https://theophilegervet.github.io/projects/real-world-object-navigation/

Learning Video Representations from Large Language Models: "We repurpose pre-trained LLMs to be conditioned on visual input, and finetune them to create automatic video narrators." https://facebookresearch.github.io/LaViLa/

Character is a full stack Artificial General Intelligence (AGI) company: “Our Character.ai beta generates more than 1 billion words per day. Our users have created more than 350 thousand Characters.” https://blog.character.ai/introducing-character/

Indonesian mass killings of 1965–66 https://en.wikipedia.org/wiki/Indonesian_mass_killings_of_1965%E2%80%9366

A list of very advanced math tricks by Terence Tao https://mathstodon.xyz/@tao/109451634735720062

Did an ancient human relative, with a much smaller brain, still use fire? [Washington Post] https://archive.ph/OJXd9

No radiation very close to Europa and on its surface, says Juno co-investigator after flyby https://www.reddit.com/r/junomission/comments/y35qr8/no_radiation_very_close_to_europa_and_on_surface/

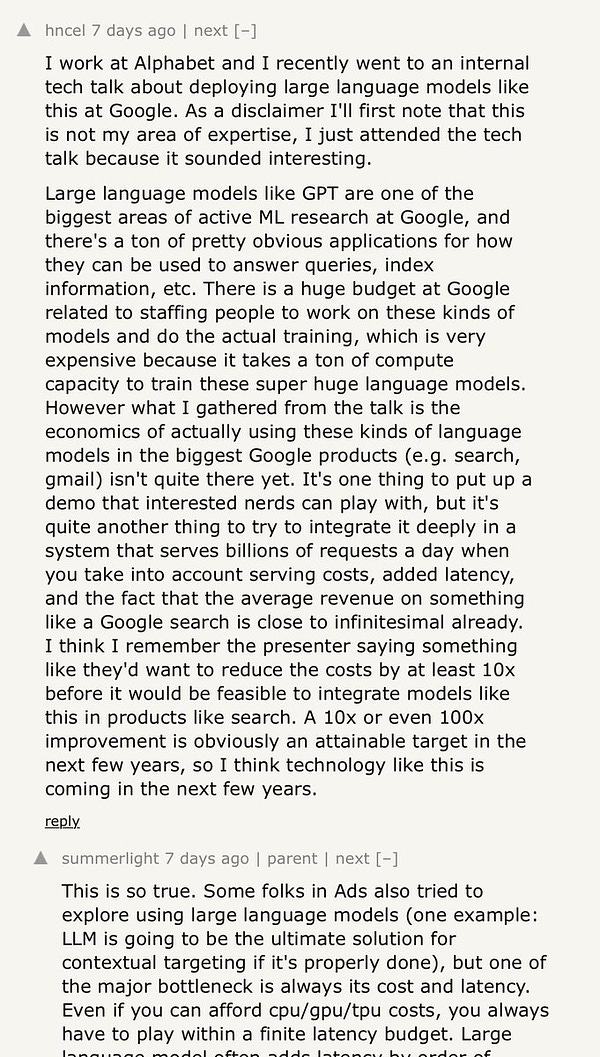

People say that the current flavor of artificial neural networks is not a solution to general intelligence. One argument is that they need a vast number of examples to learn something, whereas humans can learn the same thing from just a few examples.

How strong is this argument if one takes into account the following:

1. Humans are equipped with an “evolutionary prior” in the form of hard-coded instincts.

2. While growing up, humans are exposed to tens of thousands of hours of multimodal data.

3. Humans are grounded in the real world by their bodies and the feedback these bodies provide from experimenting with their environment.

Given these points, does it make sense to argue that biological neural networks require vastly less data? By comparison, artificial neural networks are blank slates that are confined to their own static minds.