Here's an amazing collection of examples of AI doing weird or unexpected things: https://docs.google.com/spreadsheets/u/1/d/e/2PACX-1vRPiprOaC3HsCf5Tuum8bRfzYUiKLRqJmbOoC-32JorNdfyTiRRsR7Ea5eWtvsWzuxo8bjOxCG84dAg/pubhtml

Fundamental Breakthrough: Error-Free Quantum Computing Gets Real https://scitechdaily.com/fundamental-breakthrough-error-free-quantum-computing-gets-real/

Intelligence and General Knowledge: Your Starter for 10 https://www.unz.com/jthompson/intelligence-and-general-knowledge-your-starter-for-10/

“Just as the silver screen was the death of the live theater, AI will be the death of the movie theater.” (Check the updates at the end of this post. It’s hard to write anything about AI these days that isn’t quickly outdated by actual research.) https://www.lesswrong.com/posts/hKS4NdcZqDnosbKhY/synthetic-media-and-the-future-of-film

People did not tend to develop romantic interest in partners whose attributes matched their a priori ideal preferences. https://journals.sagepub.com/doi/abs/10.1177/08902070221085877

Non-Gaussian Risk Bounded Trajectory Optimization for Stochastic Nonlinear Systems in Uncertain Environments https://arxiv.org/abs/2203.03038

The horrors of Japan's WWII 'human experiments unit': Disturbing images show how Chinese civilians and Allied POWs were dissected ALIVE and infected with the PLAGUE https://en.wikipedia.org/wiki/Unit_731

The brain-wide network in mice that encodes rewarding social experience https://www.cell.com/neuron/fulltext/S0896-6273%2822%2900181-7

Powering Next Generation Applications with OpenAI Codex https://openai.com/blog/codex-apps/

DARPA moving forward with development of nuclear powered spacecraft https://spacenews.com/darpa-moving-forward-with-development-of-nuclear-powered-spacecraft/

Think about what it means to be able to copy general intelligence: manpower becomes a free good, like sunlight, which can be consumed in whatever quantity is needed without reducing its availability.

Artificial general intelligence is the seed that grows into a forest all by itself. Artificial general intelligence is the automation of automation. It will multiply its existing intelligence by recursively improving itself.

If artificial general intelligence can be aligned with human values, we will end up in a world in which everyone can be vastly richer than the richest person alive today irrespective of their own capabilities.

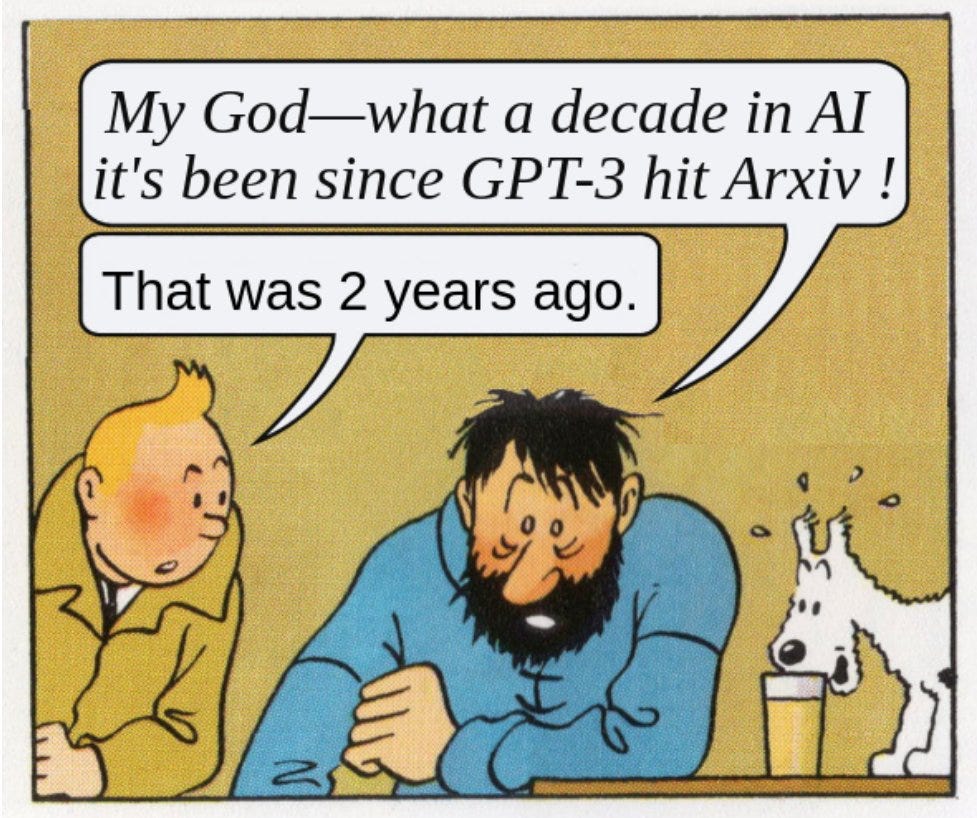

On the second anniversary of GPT-3, gwern gives an overview of the political landscape related to AI timelines: https://www.reddit.com/r/mlscaling/comments/uznkhw/gpt3_2nd_anniversary/iacca6c/

There are several political decisions that could delay the advent of artificial general intelligence. One would be an invasion of Taiwan, which would severely disrupt chip production.

This is also related to the concept of an anthropic shadow: if artificial intelligence were to cause human extinction but required a lot of computing power, you would be more likely to find yourself in a world line in which the conditions for cheap compute are not met.

If you were living in such a world line, you might expect to see crypto miners causing a GPU shortage, or supply chain disruptions due to a pandemic. A war between the United States and China over Taiwan that destroys important chip fabrication plants is likewise much more likely to occur in world lines that are not wiped out.

An anthropic shadow hides evidence in favor of catastrophic and existential risks by making observations more likely in worlds where such risks did not materialize, causing an underestimation of actual risk.
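The selection effect can be made concrete with a toy Monte Carlo sketch. It assumes, purely for illustration, a fixed per-period catastrophe probability, a fixed chance that any given catastrophe is fatal, and observers who estimate the catastrophe rate from their own surviving history. All parameter values and the function name `anthropic_shadow` are hypothetical.

```python
import random

def anthropic_shadow(p_event=0.1, p_fatal=0.5, periods=50, worlds=20_000, seed=0):
    """Toy model (hypothetical parameters): return the true catastrophe rate,
    the mean rate estimated by observers in surviving worlds, and how many
    worlds survived."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(worlds):
        observed = 0
        alive = True
        for _ in range(periods):
            if rng.random() < p_event:        # a catastrophe happens this period
                if rng.random() < p_fatal:    # ...and it wipes out all observers
                    alive = False
                    break
                observed += 1                 # non-fatal: survivors get to record it
        if alive:
            # Only surviving worlds contain anyone who can make an estimate.
            estimates.append(observed / periods)
    mean_estimate = sum(estimates) / len(estimates) if estimates else float("nan")
    return p_event, mean_estimate, len(estimates)

true_rate, estimate, survivors = anthropic_shadow()
print(f"true rate {true_rate:.3f}, survivors' estimate {estimate:.3f} "
      f"from {survivors} surviving worlds")
```

Conditioning on survival in every period, the chance that a given period contained a recorded catastrophe is p(1 − f) / (1 − p·f), so with these made-up numbers the survivors' estimate should land near 0.05 / 0.95 ≈ 0.053, roughly half the true rate of 0.1.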

There is more from gwern in the parent comments: https://www.reddit.com/r/mlscaling/comments/uznkhw/gpt3_2nd_anniversary/iab8vy2/?context=3

“A psychologist thrown back in time to 2012 is a one-eyed man in the kingdom of the blind, with no advantage, only cursed by the knowledge of the falsity of all the fads and fashions he is surrounded by; a DL researcher, on the other hand, is Prometheus bringing down fire.”

[…]

The combination of large language models good at coding, inner-monologues, tree search, knowledge about math through natural language, and increasing compute all suggest that automated theorem proving may be near a phase transition. Solving a large fraction of existing formalized proofs, coding competitions, and even an IMO problem certainly looks like a rapid trajectory upwards.
