Links for 2024-09-15

AI:

Physics of Language Models: Part 2.1, Grade-School Math and the Hidden Reasoning Process https://www.youtube.com/watch?v=bpp6Dz8N2zY

How to use the o1 model, specifically o1-preview, to perform data validation through reasoning. https://cookbook.openai.com/examples/o1/using_reasoning_for_data_validation

“ChatGPT o1 preview + mini Wrote My PhD Code in 1 Hour*—What Took Me ~1 Year” https://www.youtube.com/watch?v=M9YOO7N5jF8

“GPT-4 level open weights models like Llama-3-405B don't seem capable of dangerous cognition. OpenAI o1 demonstrates that a GPT-4 level model can be post-trained into producing useful long horizon reasoning traces. AlphaZero shows how capabilities can be obtained from compute alone, with no additional data. If there is a way of bringing these together, the apparent helplessness of the current generation of open weights models might prove misleading.” https://www.lesswrong.com/posts/Rpc9ACd6zCy3FssTj/openai-o1-llama-4-and-alphazero-of-llms

UI-JEPA: Towards Active Perception of User Intent through Onscreen User Activity https://www.arxiv.org/abs/2409.04081

Larry Ellison says he and Elon Musk went to dinner with Jensen Huang and begged him for GPUs and to take more of their money, because there will be only one winner in the race for AI https://x.com/tsarnick/status/1835108443063304412 (Original source: Q&A with Larry Ellison https://www.oracle.com/events/financial-analyst-meeting-2024/)

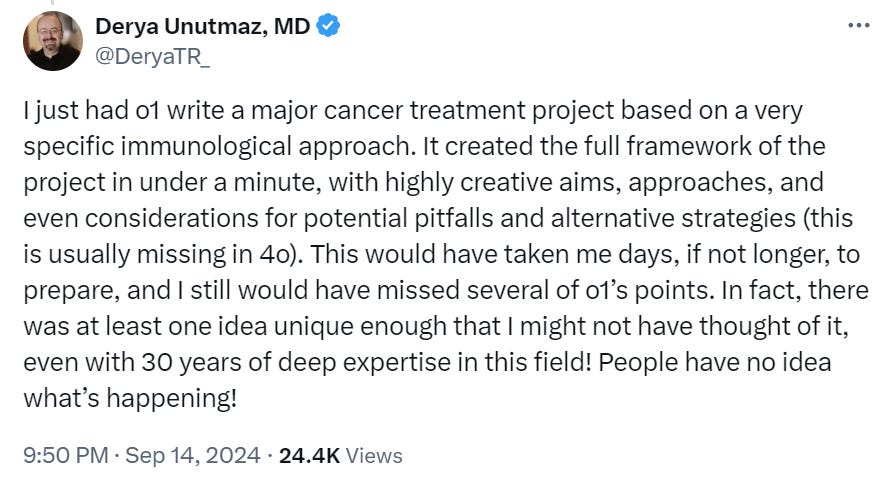

Sam Altman says OpenAI's new o1 AI model is the beginning of a significant new paradigm and "not only is progress not slowing down but we have the next few years in the bag" https://www.youtube.com/watch?v=7Lqsg2nlM3M

Sam Altman says he has a conversation with someone from the government "every few days" to discuss US leadership, data centers, computer chips, safety, economic impact and government use of AI https://x.com/tsarnick/status/1834732619269013958

All-In Summit: Peter Thiel says AI will transform the world not in 6 months but in 20 years and for the time being, NVIDIA is making over 100% of the profits while everyone else loses money https://x.com/tsarnick/status/1834714236599386464 (Original source: https://www.youtube.com/watch?v=SYRunzR9fbk)

Bill Gates says AI could enhance productivity by 300% and in 10 years people won't have to work as much as they do now and "that is basically a good thing" https://x.com/tsarnick/status/1834738975011226007

Consider the bewildering complexity of the human brain: just 1 cubic millimeter of brain tissue contains approximately 57,000 cells and 150 million synaptic connections, and mapping that single cubic millimeter produced about 1,400 terabytes of imaging data.

Now, reflect on the capabilities of artificial neural networks (ANNs), which, despite being far smaller than the human brain and lacking the vast complexity of biological systems, can perform a wide range of tasks at extraordinary speeds—seemingly without the ability to reason. Imagine what could be achieved by an ANN the size of a human brain, with its brute-force computational power.

Critics often argue that ANNs, trained on massive datasets, just rehash what they have learned, which is why they call them stochastic parrots. But this perspective overlooks a key point: ANNs begin as blank slates. In contrast, human cognition is the product of billions of years of evolution, millennia of cultural knowledge, and a lifetime of embodied learning through multimodal sensory feedback loops from real-world interaction.

The point I'm trying to make here is that it's underappreciated how much of human cognition can be replicated with what many people think of as just “a brute-force statistical pattern matcher which blends up the internet and gives you back a slightly unappetizing slurry of it when asked.”

It is now argued that o1, the latest model from OpenAI, is just more brute force rather than Reasoning™, and that you need exponentially increasing compute for linear gains.
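To make that claim concrete, here is a sketch under an assumed functional form, not anything measured: suppose performance P grows with the logarithm of compute C, where a and b > 0 are hypothetical constants chosen only for illustration.

```latex
P(C) = a + b \ln C
\quad\Longrightarrow\quad
C(P) = \exp\!\left(\frac{P - a}{b}\right),
\qquad
\frac{C(P + \Delta P)}{C(P)} = \exp\!\left(\frac{\Delta P}{b}\right)
```

Under this assumption, every additional gain of the same size multiplies the required compute by the same factor, which is what "exponentially increasing compute for linear gains" amounts to.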

But that's not the critical question. The real question is whether this is enough to bootstrap our way to something that can go FOOM, that is, whether we can brute-force our way to recursively self-improving artificial general intelligence. Given that current models are already solving complex problems like Math Olympiad challenges, it might be risky to assume the answer is definitely no.

Miscellaneous:

Geothermal energy could outperform nuclear power https://www.economist.com/science-and-technology/2024/09/13/geothermal-energy-could-outperform-nuclear-power [no paywall: https://archive.is/k1qZ9]

With antibiotics losing their effectiveness, one company is turning to gene editing and bacteriophages—viruses that infect bacteria—to combat infections. https://www.wired.com/story/crispr-enhanced-viruses-are-being-deployed-against-utis/ [no paywall: https://archive.is/2Wuux]

Human intelligence:

How the culture wars came for Wikipedia’s articles about human intelligence. https://quillette.com/2022/07/18/cognitive-distortions/

David Reich's Harvard team detects recent positive selection for intelligence among Europeans: "We also identify selection for combinations of alleles that are today associated with... increased measures related to cognitive performance." https://www.biorxiv.org/content/10.1101/2024.09.14.613021v1

Ukraine:

“Ukrainian forces from the 3rd Assault Brigade carried out a highly effective attack on Russian positions in the Kharkiv region. Using drones to adjust fire, they successfully targeted and eliminated a group of Russian soldiers.” https://x.com/NOELreports/status/1835346283101323624

Think back to February 24, 2022. If someone had predicted that in 2024 Ukraine would be conducting airstrikes inside Russia, how likely would you have thought it was? https://x.com/AndrewPerpetua/status/1835257576960696370

A Russian soldier filmed the impact on an ammunition depot in the Voronezh region. The footage is likely from September 9th. https://x.com/NOELreports/status/1835069767561969772

“More footage. Ukrainian forces from the 46th Airmobile Brigade, 59th Motorized Brigade, and 21st Special Battalion successfully repelled a massive mechanized assault by Russian armored vehicles in the Pokrovske direction. A total of 46 enemy vehicles attempted to break through to the village of Hostre. The convoy concentrated near the Lozova River, where it suffered heavy losses from artillery strikes and was later finished off by drones.” https://x.com/NOELreports/status/1835012443086483612